🛣️ Week 10 - Lab Roadmap

Introduction to Natural Language Processing in Python

📚 Preparation: Loading packages and data

⚙️ Setup

⚠️ Windows Users: Some libraries in this lab, particularly bertopic and sentence-transformers, may stall or hang when imported in standard Jupyter Notebook or VSCode. If you experience this, we recommend opening and running this file in JupyterLab instead (run jupyter lab from your terminal). JupyterLab handles multiprocessing-based imports more reliably on Windows.

Downloading the student notebook

Click the button below to download the student notebook.

Downloading the data

Download the datasets we will use for this lab.

Use the links below to download this dataset:

Install missing libraries:

First, install all required packages using conda:

# Core data science libraries

conda install -c conda-forge pandas numpy matplotlib seaborn

# Text processing and NLP

conda install -c conda-forge wordcloud textblob nltk spacy

# Machine learning libraries

conda install -c conda-forge scikit-learn lightgbm xgboost shap

# Modern NLP and topic modeling

conda install -c conda-forge bertopic sentence-transformers

# Visualization

conda install -c conda-forge plotly

# Install spaCy English model

python -m spacy download en_core_web_sm

💡 Prefer conda over pip where possible: conda-forge builds are compiled against consistent native libraries and tend to avoid the DLL/dependency conflicts that can cause bertopic and sentence-transformers to stall on Windows. If a package is not available on conda-forge, fall back to pip install <package> afterwards.

Import required libraries:

# Core data manipulation and analysis

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

import warnings

warnings.filterwarnings('ignore')

# Text processing and NLP

import re

import string

from wordcloud import WordCloud

from textblob import TextBlob

import nltk

from nltk.corpus import stopwords

from nltk.tokenize import word_tokenize

from nltk.stem import WordNetLemmatizer

import spacy

# Machine learning and model evaluation

from sklearn.model_selection import train_test_split

from sklearn.feature_extraction.text import CountVectorizer, TfidfVectorizer

from sklearn.metrics import (

classification_report,

confusion_matrix,

f1_score

)

from sklearn.ensemble import RandomForestClassifier

from sklearn.linear_model import LogisticRegression

from lightgbm import LGBMClassifier

# Model interpretation and explainability

import shap

# Modern topic modeling

from bertopic import BERTopic

from sentence_transformers import SentenceTransformer

# Advanced visualization

import plotly.express as px

import plotly.graph_objects as go

from plotly.subplots import make_subplots

# Download necessary NLTK data (run once)

print("Downloading NLTK data...")

nltk.download('punkt', quiet=True)

nltk.download('punkt_tab', quiet=True)

nltk.download('stopwords', quiet=True)

nltk.download('wordnet', quiet=True)

nltk.download('omw-1.4', quiet=True)

print("NLTK downloads complete!")

# Load spaCy model (make sure it's installed: python -m spacy download en_core_web_sm)

try:
    nlp = spacy.load("en_core_web_sm")
    print("spaCy model loaded successfully!")
except OSError:
    print("⚠️ Please install spaCy English model: python -m spacy download en_core_web_sm")
    nlp = None

# Set plotting style

plt.style.use('seaborn-v0_8')

plt.rcParams['figure.figsize'] = (10, 6)

plt.rcParams['font.size'] = 12

print("All libraries imported successfully! 🎉")

🥅 Learning objectives

- Develop new data structures needed for NLP (e.g. document feature matrix, corpus)

- Visualise text output using wordclouds and keyness plots

- Perform feature selection to aid in classifying sentiment

- Create and validate topic models using Latent Dirichlet Allocation

A new data set: European Central Bank (ECB) statements (5 minutes)

# Load data

# Convert sentiment to categorical labels

# Remove any missing values

Dataset shape: (2563, 3)

Columns: ['text', 'sentiment', 'sentiment_label']

Sentiment distribution:

sentiment_label

Negative 1609

Positive 954

Name: count, dtype: int64

| | text | sentiment | sentiment_label |
|---|---|---|---|
| 0 | target2 is seen as a tool to promote the furth… | 1 | Positive |
| 1 | the slovak republic for example is now home to… | 1 | Positive |
| 2 | the earlier this happens the earlier economic … | 1 | Positive |
| 3 | the bank has made essential contributions in k… | 1 | Positive |
| 4 | moreover the economic size and welldeveloped f… | 1 | Positive |

Today, we will be looking at a data set of statements from the European Central Bank.

- text: The ECB statement text.

- sentiment: Numeric sentiment label (1 = positive, 0 = negative).

- sentiment_label: Categorical sentiment label (Positive/Negative).

The column we are going to analyze in detail is text which contains ECB statements that we can analyze for sentiment and topics.
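As a rough illustration of the three loading steps (load, label, drop missing values), here is a minimal sketch on a toy DataFrame. The toy rows and the name `ecb_toy` are assumptions made for illustration; the real lab file and its name are not shown in this section, so in practice you would start from `pd.read_csv(...)` on the downloaded dataset.

```python
import pandas as pd

# Toy stand-in for the ECB data (hypothetical rows, not the real dataset).
raw = pd.DataFrame({
    "text": [
        "growth is improving",
        "risks remain elevated",
        None,  # a missing statement that should be dropped
        "integration supports trade",
    ],
    "sentiment": [1, 0, 1, 1],
})

# Remove rows with missing text or label, then map numeric codes to labels.
ecb_toy = raw.dropna(subset=["text", "sentiment"]).reset_index(drop=True)
ecb_toy["sentiment_label"] = ecb_toy["sentiment"].map({1: "Positive", 0: "Negative"})

print(f"Dataset shape: {ecb_toy.shape}")
print(f"Columns: {list(ecb_toy.columns)}")
print(ecb_toy["sentiment_label"].value_counts())
```

Mapping from the numeric column keeps `sentiment` available as the model target while `sentiment_label` is used for readable plots and tables.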

Enter Natural Language Processing with Python! (25 minutes)

Python offers excellent libraries for natural language processing. We’ll use a combination of nltk, spacy, and scikit-learn for text preprocessing and feature extraction.

Text Preprocessing

First, let’s create a comprehensive preprocessing function adapted for financial/economic text:

def preprocess_text(text, remove_stopwords=True, lemmatize=True):
    """
    Comprehensive text preprocessing function for ECB statements
    """
    if pd.isna(text):
        return ""

    # Convert to lowercase
    text = str(text).lower()

    # Remove URLs, mentions, hashtags
    text = re.sub(r'http\S+|www\S+|https\S+', '', text, flags=re.MULTILINE)
    text = re.sub(r'@\w+|#\w+', '', text)

    # Remove punctuation but keep decimal points for financial data
    text = re.sub(r'[^\w\s\.]', '', text)

    # Remove extra whitespace
    text = ' '.join(text.split())

    if remove_stopwords:
        # Remove stopwords
        stop_words = set(stopwords.words('english'))
        # Add custom stopwords for ECB statements
        custom_stopwords = {'said', 'one', 'would', 'also', 'get', 'go', 'see', 'well', 'may', 'could'}
        stop_words.update(custom_stopwords)
        tokens = word_tokenize(text)
        tokens = [token for token in tokens if token not in stop_words and len(token) > 2]
        text = ' '.join(tokens)

    if lemmatize and nlp is not None:
        # Lemmatization using spaCy
        doc = nlp(text)
        text = ' '.join([token.lemma_ for token in doc if not token.is_stop and len(token.text) > 2])

    return text

# Apply preprocessing

ecb_data['text_clean'] = ecb_data['text'].apply(preprocess_text)

# Remove empty texts after cleaning

ecb_data = ecb_data[ecb_data['text_clean'].str.len() > 0].reset_index(drop=True)

print(f"Dataset shape after cleaning: {ecb_data.shape}")

Dataset shape after cleaning: (2563, 4)

Creating Document-Term Matrix

We’ll use scikit-learn’s CountVectorizer to create our document-term matrix that:

- Limits to the top 1,000 features

- Has a minimum document frequency of 5 documents

- Removes terms that appear in over 95% of the documents

- Includes bigrams

- Keeps words with at least three characters (use token_pattern = r'\b[a-zA-Z]{3,}\b')

# Create vectorizer with parameters suitable for financial text

vectorizer = CountVectorizer(

max_features=1000, # Limit to top 1000 features

min_df=5, # Minimum document frequency

max_df=0.95, # Remove terms that appear in >95% of documents

ngram_range=(1, 2), # Include bigrams for financial terms

token_pattern=r'\b[a-zA-Z]{3,}\b' # Words with at least 3 characters

)

# Fit and transform the cleaned text

doc_term_matrix = vectorizer.fit_transform(ecb_data['text_clean'])

feature_names = vectorizer.get_feature_names_out()

print(f"Document-term matrix shape: {doc_term_matrix.shape}")

print(f"Number of features: {len(feature_names)}")

print(f"Sample features: {feature_names[:20]}")Document-term matrix shape: (2563, 1000)

Number of features: 1000

Sample features: ['ability' 'able' 'accelerate' 'access' 'accompany' 'account'

'account deficit' 'accountability' 'accumulation' 'achieve' 'act'

'action' 'activity' 'actually' 'add' 'addition' 'additional' 'address'

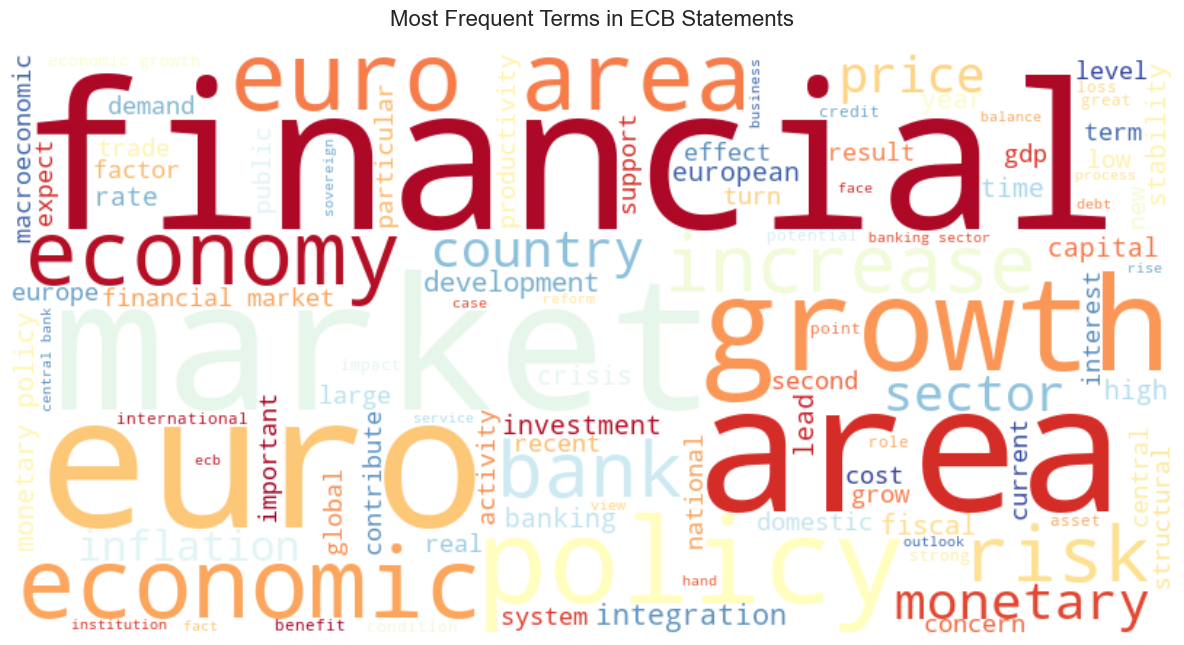

'adjust' 'adjustment']Word Cloud Visualization

Let’s create a word cloud to visualize the most frequent terms in ECB statements:

# Calculate term frequencies

term_freq = np.array(doc_term_matrix.sum(axis=0)).flatten()

term_freq_dict = dict(zip(feature_names, term_freq))

# Create word cloud

plt.figure(figsize=(12, 8))

wordcloud = WordCloud(

width=800, height=400,

background_color='white',

max_words=100,

colormap='RdYlBu' # Economic color scheme

).generate_from_frequencies(term_freq_dict)

plt.imshow(wordcloud, interpolation='bilinear')

plt.axis('off')

plt.title('Most Frequent Terms in ECB Statements', fontsize=16, pad=20)

plt.tight_layout()

plt.show()

💡Insight: Try deleting the lines of code that remove stopwords. How does this change the visualisation? Are there any other stopwords you’d remove aside from the ones we’ve already removed from the data?

Token Length Analysis by Sentiment

Let’s analyze the distribution of statement lengths by sentiment:

# Calculate number of tokens per statement

ecb_data['n_tokens'] = ecb_data['text_clean'].str.split().str.len()

# Create histogram

❓Question: How does token count for documents vary by sentiment?
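Before plotting, a numeric summary can already hint at the answer. The sketch below uses a toy DataFrame (hypothetical rows, assumed names `toy` and `summary`) rather than the real `ecb_data`, and compares token counts per sentiment group.

```python
import pandas as pd

# Toy cleaned statements (hypothetical), mirroring the text_clean column.
toy = pd.DataFrame({
    "text_clean": [
        "growth improve trade",
        "risk crisis",
        "financial integration support growth recovery",
        "deficit rise",
    ],
    "sentiment_label": ["Positive", "Negative", "Positive", "Negative"],
})

# Same token count as in the lab: whitespace-split the cleaned text.
toy["n_tokens"] = toy["text_clean"].str.split().str.len()

# Summarise the token-count distribution within each sentiment group.
summary = toy.groupby("sentiment_label")["n_tokens"].agg(["mean", "median", "max"])
print(summary)
```

The visual counterpart would be a histogram of `n_tokens` split by `sentiment_label`, for example with `sns.histplot(data=ecb_data, x="n_tokens", hue="sentiment_label")`.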

Supervised learning example: using tokens as features to identify positive ECB sentiment (30 minutes)

We can use our machine learning skills to predict whether an ECB statement expresses positive or negative sentiment.

Predictive Modeling Setup

# Convert sparse matrix to dense array and create DataFrame

X = pd.DataFrame(doc_term_matrix.toarray(), columns=feature_names)

y = ecb_data['sentiment'] # Use numeric labels (1 = positive, 0 = negative)

print(f"Feature matrix shape: {X.shape}")

print(f"Target distribution:\n{y.value_counts()}")

# Create train-test split

Building a Light Gradient Boosted Model

LightGBM and XGBoost are both gradient boosting algorithms that build sequential decision trees, but LightGBM is typically faster and more memory-efficient, especially on large datasets. The key difference is in how they grow trees: XGBoost grows trees level-by-level (depth-wise), while LightGBM grows leaf-by-leaf (choosing the split that reduces error most), which often leads to faster training with similar or better accuracy. Try building a model with the following hyperparameters:

- feature_fraction = mtry (defined in the code)

- 2,000 estimators

- Learning rate of 0.01

- Feature importance type set to “gain”
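To make the hyperparameter list concrete, the sketch below works through the mtry arithmetic for a 1,000-feature matrix and collects the settings in a dictionary. The dictionary name `lgbm_params` is an assumption, and `colsample_bytree` is used as the scikit-learn-style alias of `feature_fraction`; treat this as one possible way to express the settings, not the reference solution.

```python
import math

# Number of columns in the document-term matrix built earlier in the lab.
n_features = 1000

# mtry as a fraction of features: floor(sqrt(p)) / p.
# floor(sqrt(1000)) = 31, so about 3.1% of features are sampled per tree.
mtry = math.isqrt(n_features) / n_features
print(f"mtry fraction: {mtry}")

# Hyperparameters from the list above, keyed for LightGBM's scikit-learn API.
lgbm_params = {
    "n_estimators": 2000,        # 2,000 boosting rounds
    "learning_rate": 0.01,       # small step size to pair with many trees
    "colsample_bytree": mtry,    # alias of feature_fraction
    "importance_type": "gain",   # feature_importances_ reports total gain
}

# Assumed usage (requires lightgbm and a train split):
# lgb_model = LGBMClassifier(**lgbm_params).fit(X_train, y_train)
```

A low learning rate combined with many estimators trades training time for smoother convergence, and sampling only a small fraction of features per tree decorrelates the trees, much as mtry does in a random forest.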

# Calculate mtry as a fraction: floor(sqrt(number of features)) / number of features

mtry = int(np.sqrt(X_train.shape[1]))/X_train.shape[1]

# Create LightGBM model

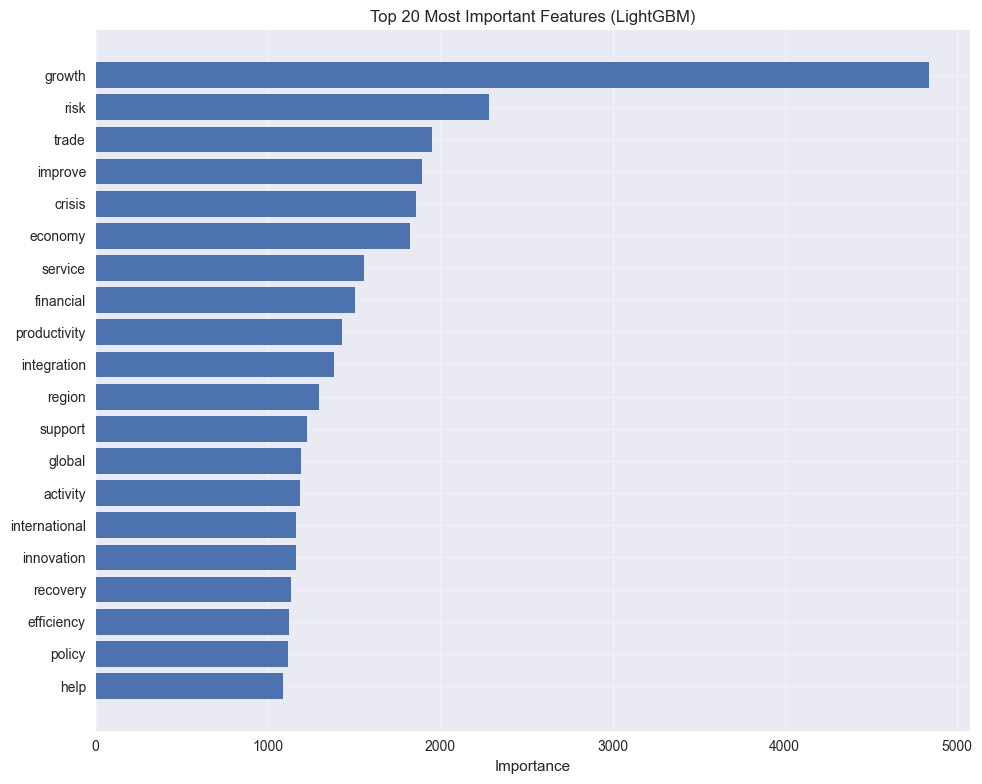

# Fit the modelVariable Importance Analysis

Variable importance plots show which features (variables) contribute most to a model’s predictions. They rank features by how much they improve the model’s performance, helping you understand what drives your predictions.

How to read them:

- Features are listed vertically (top = most important)

- Bar length or score shows relative importance

- Longer bars = that feature has more influence on predictions

Why they’re useful:

- Interpretability: Understand what your model relies on

- Feature selection: Identify which variables you can drop

- Domain validation: Check if important features make sense for your problem

- Debugging: Spot if the model is using unexpected/problematic features

📝 Note: Unlike Lasso regression coefficients, variable importance scores only tell you how much a feature matters, not which direction (positive or negative effect). A highly important feature could be pushing predictions either way!

# Get feature importances

feature_importance = pd.DataFrame({

'feature': feature_names,

'importance': lgb_model.feature_importances_

}).sort_values('importance', ascending=True).tail(20)

# Create feature importance plot

plt.figure(figsize=(10, 8))

plt.barh(range(len(feature_importance)), feature_importance['importance'])

plt.yticks(range(len(feature_importance)), feature_importance['feature'])

plt.xlabel('Importance')

plt.title('Top 20 Most Important Features (LightGBM)')

plt.grid(True, alpha=0.3)

plt.tight_layout()

plt.show()

print("Top 10 most important features:")

print(feature_importance.tail(10)[['feature', 'importance']])

Top 10 most important features:

feature importance

502 integration 1385.382330

720 productivity 1427.101442

354 financial 1506.942541

832 service 1558.899241

264 economy 1823.397919

178 crisis 1857.511730

463 improve 1892.166666

942 trade 1949.947062

809 risk 2285.369395

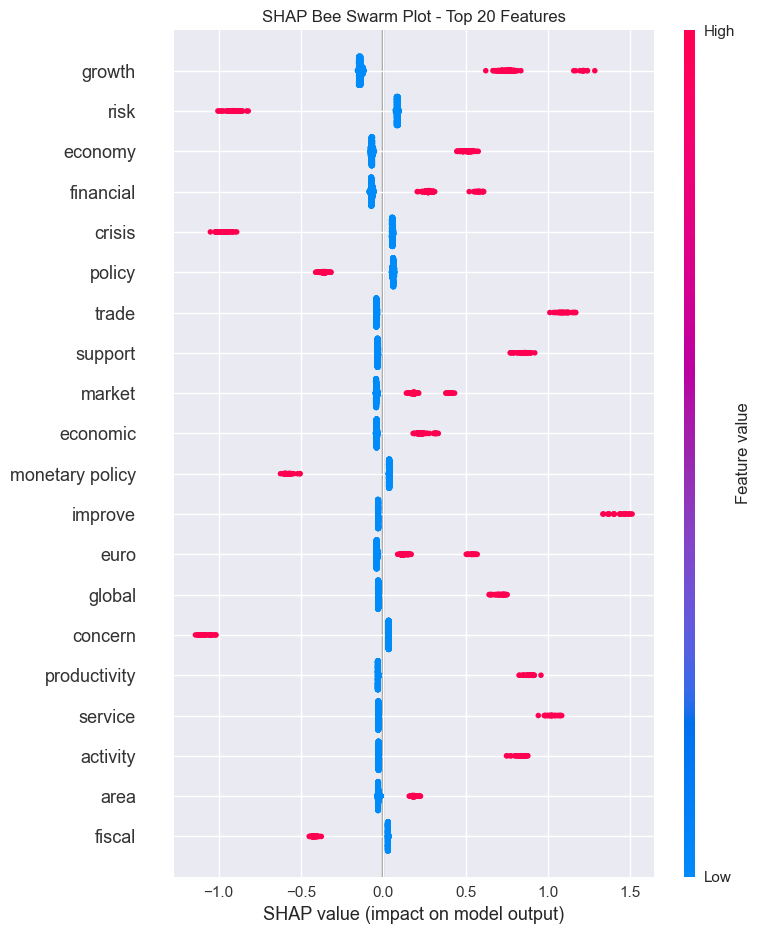

428 growth 4834.307652SHAP (Shapley) Values

SHAP values extend variable importance by providing two critical insights:

- Influence by observation: See how each feature contributed to individual predictions, not just overall importance. This lets you explain “why did the model predict THIS specific statement as negative?”

- Direction of influence: SHAP values are signed (positive or negative), showing whether a feature pushed the prediction up or down. For example, you can see that “high positive_words_count increased the probability of positive sentiment by 0.15” for a specific statement.
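To see where the signed, per-observation contributions come from, here is a small sketch that computes exact Shapley values for a hypothetical two-feature scoring function. Everything in it (`toy_score`, the feature names, the all-zero baseline) is an assumption for illustration. The shap library's TreeExplainer uses a much faster tree-specific algorithm and averages over background data rather than a single baseline input.

```python
from itertools import permutations

def shapley_values(predict, x, baseline):
    """Exact Shapley values: average marginal contribution over all feature orderings."""
    features = list(x)
    totals = {f: 0.0 for f in features}
    orderings = list(permutations(features))
    for order in orderings:
        current = dict(baseline)       # start from the baseline input
        prev = predict(current)
        for f in order:
            current[f] = x[f]          # switch feature f to its observed value
            new = predict(current)
            totals[f] += new - prev    # marginal contribution of f in this ordering
            prev = new
    return {f: total / len(orderings) for f, total in totals.items()}

def toy_score(counts):
    """Hypothetical sentiment score with an interaction between two word counts."""
    return (0.5 + 0.1 * counts["growth"] - 0.2 * counts["crisis"]
            + 0.05 * counts["growth"] * counts["crisis"])

x = {"growth": 2, "crisis": 1}          # one statement's word counts
baseline = {"growth": 0, "crisis": 0}   # reference input with neither word

phi = shapley_values(toy_score, x, baseline)
print(phi)
print("Sum of contributions:", sum(phi.values()))
print("Prediction minus baseline:", toy_score(x) - toy_score(baseline))
```

The contribution for "growth" is positive and the one for "crisis" is negative, and together they account for the full gap between this statement's score and the baseline score, which is the additivity property that bee swarm plots rely on.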

# Create SHAP explainer

explainer = shap.TreeExplainer(lgb_model)

shap_values = explainer.shap_values(X_train.iloc[:1000])

# Bee swarm plot

plt.figure(figsize=(12, 8))

shap.summary_plot(

shap_values,

X_train.iloc[:1000],

feature_names=feature_names,

plot_type="dot",

max_display=20,

show=False,

)

plt.title("SHAP Bee Swarm Plot - Top 20 Features")

plt.xlabel("SHAP value (impact on model output)")

plt.tight_layout()

plt.show()

🗣️ CLASSROOM DISCUSSION:

Which features are most important for predicting ECB sentiment? What economic themes emerge?

Model Evaluation

Let’s now evaluate the model using a confusion matrix.

# Make predictions

# Create a Confusion matrix

# Calculate F1 score

❓Question: How well does our model perform on the test set?
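As a reminder of what the confusion matrix and F1 score measure, the sketch below computes both by hand on toy labels (hypothetical values, not model output). In the lab itself, `confusion_matrix` and `f1_score` from scikit-learn do the same job on `y_test` and your predictions.

```python
# Toy true labels and predictions (1 = positive, 0 = negative); hypothetical values.
y_true = [1, 0, 1, 1, 0, 0, 1, 0]
y_pred = [1, 0, 0, 1, 0, 1, 1, 0]

pairs = list(zip(y_true, y_pred))
tp = sum(t == 1 and p == 1 for t, p in pairs)  # positives correctly flagged
tn = sum(t == 0 and p == 0 for t, p in pairs)  # negatives correctly flagged
fp = sum(t == 0 and p == 1 for t, p in pairs)  # negatives predicted positive
fn = sum(t == 1 and p == 0 for t, p in pairs)  # positives predicted negative

# Same layout as scikit-learn: rows = true class, columns = predicted class.
matrix = [[tn, fp],
          [fn, tp]]

precision = tp / (tp + fp)
recall = tp / (tp + fn)
f1 = 2 * precision * recall / (precision + recall)

print("Confusion matrix:", matrix)
print(f"Precision: {precision:.2f}, Recall: {recall:.2f}, F1: {f1:.2f}")
```

Because the classes are imbalanced (1,609 negative vs 954 positive statements), F1 on the positive class is more informative than raw accuracy.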

BERTopic: A Modular Pipeline

BERTopic is less a single model than a pipeline that combines several techniques:

- Sentence Transformers: Creates dense vector embeddings that capture semantic meaning of documents

- UMAP (Uniform Manifold Approximation and Projection): Reduces embedding dimensions while preserving document relationships

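- HDBSCAN (Hierarchical Density-Based Spatial Clustering of Applications with Noise): Groups similar documents into clusters and assigns documents that fit no cluster to an outlier topic (-1)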

- c-TF-IDF (class-based TF-IDF): Extracts representative words for each topic cluster

- CountVectorizer (optional): Tokenizes and vectorizes text for the c-TF-IDF step

This modular design means you can swap components (e.g., use different embeddings or clustering algorithms) while keeping the overall framework. Each model handles a specific step: embeddings → dimensionality reduction → clustering → topic representation.

🛑 Why we keep stopwords in BERTopic: Unlike traditional bag-of-words approaches, BERTopic uses sentence transformers that need full sentences (including stopwords like “the”, “is”, “will”) to capture semantic context and relationships. The c-TF-IDF component automatically downweights common words, so we get better embeddings without losing interpretability. We only filter stopwords when displaying topic words to humans, not during modeling!
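The downweighting of common words can be illustrated with a small hand-rolled c-TF-IDF calculation. This is a sketch under stated assumptions: the two toy "clusters" are invented, and the weighting follows the commonly documented form (term frequency within the cluster times log(1 + average words per cluster / total frequency of the term)); BERTopic's own implementation may differ in details such as normalisation options.

```python
import math
from collections import Counter

# Two toy topic clusters, each already concatenated into one document (hypothetical text).
clusters = {
    "inflation": "the outlook for inflation is stable and the inflation target is clear",
    "banking": "the banks in the sector face losses and the banks need capital",
}

# Term counts per cluster and across all clusters.
counts = {name: Counter(text.split()) for name, text in clusters.items()}
total_freq = Counter()
for cluster_counts in counts.values():
    total_freq.update(cluster_counts)

# Average number of words per cluster.
avg_words = sum(sum(c.values()) for c in counts.values()) / len(counts)

def c_tf_idf(term, cluster):
    """Class-based TF-IDF weight of a term within one cluster."""
    tf = counts[cluster][term] / sum(counts[cluster].values())
    idf = math.log(1 + avg_words / total_freq[term])
    return tf * idf

for term in ["inflation", "the"]:
    print(term, round(c_tf_idf(term, "inflation"), 3))
```

Both words occur twice in the first cluster, but "the" also appears throughout the other cluster, so its weight ends up lower than that of "inflation". In a real corpus the effect is weaker for very frequent words, which is why stopwords are still filtered when topic words are displayed.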

# Filter for longer statements

statements_for_topics = ecb_data[ecb_data["text"].str.len() > 30].copy()

def preprocess_for_bertopic(text):
    """Enhanced preprocessing for BERTopic with financial stopword removal"""
    if pd.isna(text):
        return ""

    # Basic cleaning
    text = str(text).lower()
    text = re.sub(r"http\S+|www\S+|https\S+", "", text, flags=re.MULTILINE)
    text = re.sub(r"[^\w\s]", " ", text)
    text = " ".join(text.split())

    # Remove stopwords
    stop_words = set(stopwords.words("english"))
    custom_stopwords = {
        "ecb", "bank", "central", "said", "one", "would", "also",
        "get", "go", "see", "well", "may", "could", "will", "shall",
        "percent", "per", "cent", "euro", "european", "committee",
        "council", "meeting", "decision", "policy", "monetary"
    }
    stop_words.update(custom_stopwords)

    # Tokenize and remove stopwords
    tokens = word_tokenize(text)
    tokens = [token for token in tokens if token not in stop_words and len(token) > 2]
    text = " ".join(tokens)

    return text

statements_for_topics["text_bertopic"] = statements_for_topics["text"].apply(preprocess_for_bertopic)

statements_for_topics = statements_for_topics[statements_for_topics["text_bertopic"].str.len() > 15]

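print(f"Number of statements for topic modeling: {len(statements_for_topics)}")

Since the sentence transformer needs full sentences, the rest of this lab uses the version below, which redefines the function without stopword removal (the topic output further down still contains words such as “the” and “of”):

# Filter for longer statements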

statements_for_topics = ecb_data[ecb_data["text"].str.len() > 30].copy()

def preprocess_for_bertopic(text):
    """Basic preprocessing for BERTopic without stopword removal"""
    if pd.isna(text):
        return ""

    # Basic cleaning
    text = str(text).lower()
    text = re.sub(r"http\S+|www\S+|https\S+", "", text, flags=re.MULTILINE)
    text = re.sub(r"[^\w\s]", " ", text)
    text = " ".join(text.split())

    return text

statements_for_topics["text_bertopic"] = statements_for_topics["text"].apply(preprocess_for_bertopic)

statements_for_topics = statements_for_topics[statements_for_topics["text_bertopic"].str.len() > 15]

print(f"Number of statements for topic modeling: {len(statements_for_topics)}")

Running BERTopic

# Data diagnostics

print("Data diagnostics:")

print(f"Number of documents: {len(statements_for_topics)}")

print(f"Average text length: {statements_for_topics['text_bertopic'].str.len().mean():.1f}")

# Clean data

statements_clean = statements_for_topics[statements_for_topics['text_bertopic'].str.len() > 10].copy()

print(f"Documents after cleaning: {len(statements_clean)}")

# BERTopic model

topic_model = BERTopic(

language="english",

calculate_probabilities=False,

verbose=True,

min_topic_size=10

)

try:
    all_texts = statements_clean['text_bertopic'].tolist()
    print(f"Running topic modeling with {len(all_texts)} documents...")

    # fit_transform returns a (topics, probabilities) tuple
    topics_final, _ = topic_model.fit_transform(all_texts)
    print(f"Topic modeling successful! Found {len(topic_model.get_topic_info())} topics")

    # Show topic info
    topic_info = topic_model.get_topic_info()
    print(f"\nFinal result: {len(topic_info)} topics found")
    print("\nTopic overview:")
    print(topic_info)
except Exception as e:
    print(f"Error with topic modeling: {e}")

2026-03-09 09:25:18,249 - BERTopic - Embedding - Transforming documents to embeddings.

Data diagnostics:

Number of documents: 2563

Average text length: 171.7

Documents after cleaning: 2563

Running topic modeling with 2563 documents...

Batches: 100%|██████████| 81/81 [00:08<00:00, 10.01it/s]

2026-03-09 09:25:28,762 - BERTopic - Embedding - Completed ✓

2026-03-09 09:25:28,762 - BERTopic - Dimensionality - Fitting the dimensionality reduction algorithm

2026-03-09 09:25:43,610 - BERTopic - Dimensionality - Completed ✓

2026-03-09 09:25:43,612 - BERTopic - Cluster - Start clustering the reduced embeddings

2026-03-09 09:25:43,710 - BERTopic - Cluster - Completed ✓

2026-03-09 09:25:43,715 - BERTopic - Representation - Fine-tuning topics using representation models.

2026-03-09 09:25:43,778 - BERTopic - Representation - Completed ✓

Topic modeling successful! Found 44 topics

Final result: 44 topics found

Topic overview:

Topic Count Name \

0 -1 1000 -1_the_in_of_and

1 0 180 0_euro_area_the_to

2 1 138 1_financial_integration_and_capital

3 2 95 2_fiscal_sustainability_finances_public

4 3 72 3_inflation_expectations_outlook_that

5 4 69 4_banks_profitability_bank_resolution

6 5 68 5_ecb_ecbs_the_of

7 6 67 6_area_euro_growth_recovery

8 7 59 7_monetary_policy_the_to

9 8 53 8_euro_financial_markets_integration

10 9 51 9_demand_domestic_consumption_growth

11 10 51 10_trade_area_eu_euro

12 11 44 11_interest_rates_on_low

13 12 41 12_crisis_that_the_it

14 13 40 13_banking_sector_consolidation_integration

15 14 28 14_deficit_gdp_account_us

16 15 28 15_emerging_economies_global_world

17 16 27 16_productivity_growth_factor_total

18 17 27 17_risk_downside_risks_could

19 18 26 18_investors_complex_risk_ratings

20 19 26 19_balance_sovereign_sheets_banks

21 20 24 20_ageing_population_public_finances

22 21 24 21_fiscal_is_policies_euro

23 22 23 22_retail_sepa_payment_payments

24 23 22 23_policymakers_decisions_accountability_policy

25 24 21 24_growth_economic_employment_wellbeing

26 25 20 25_investment_market_scale_integration

27 26 19 26_union_monetary_emu_economic

28 27 19 27_price_stability_upside_risks

29 28 18 28_macroprudential_to_instruments_prudential

30 29 17 29_loans_lending_credit_corporations

31 30 16 30_assets_these_funds_triggered

32 31 15 31_european_integration_market_services

33 32 15 32_globalisation_crossborder_goods_trade

34 33 15 33_macroeconomic_programmes_policies_was

35 34 14 34_banking_system_positive_nonbanks

36 35 13 35_central_bankers_banks_credibility

37 36 12 36_leverage_derivatives_haircuts_leveraged

38 37 12 37_challenges_european_banking_sector

39 38 12 38_foreign_direct_fdi_investment

40 39 11 39_stability_financial_unwinding_implications

41 40 11 40_diffusion_technologies_new_technological

42 41 10 41_considerations_personal_my_however

43 42 10 42_central_inflation_challenge_distortion

Representation \

0 [the, in, of, and, to, is, as, that, for, have]

1 [euro, area, the, to, that, of, in, for, is, as]

2 [financial, integration, and, capital, markets...

3 [fiscal, sustainability, finances, public, tax...

4 [inflation, expectations, outlook, that, on, t...

5 [banks, profitability, bank, resolution, to, l...

6 [ecb, ecbs, the, of, monetary, policy, that, t...

7 [area, euro, growth, recovery, economic, in, h...

8 [monetary, policy, the, to, in, is, that, anal...

9 [euro, financial, markets, integration, area, ...

10 [demand, domestic, consumption, growth, recove...

11 [trade, area, eu, euro, integration, the, glob...

12 [interest, rates, on, low, rate, real, would, ...

13 [crisis, that, the, it, great, financial, of, ...

14 [banking, sector, consolidation, integration, ...

15 [deficit, gdp, account, us, 2010, current, in,...

16 [emerging, economies, global, world, asia, gro...

17 [productivity, growth, factor, total, in, by, ...

18 [risk, downside, risks, could, prices, form, p...

19 [investors, complex, risk, ratings, risks, mod...

20 [balance, sovereign, sheets, banks, sheet, deb...

21 [ageing, population, public, finances, fiscal,...

22 [fiscal, is, policies, euro, area, discipline,...

23 [retail, sepa, payment, payments, systems, ele...

24 [policymakers, decisions, accountability, poli...

25 [growth, economic, employment, wellbeing, sust...

26 [investment, market, scale, integration, firms...

27 [union, monetary, emu, economic, the, unions, ...

28 [price, stability, upside, risks, analysis, me...

29 [macroprudential, to, instruments, prudential,...

30 [loans, lending, credit, corporations, loan, n...

31 [assets, these, funds, triggered, redemptions,...

32 [european, integration, market, services, infr...

33 [globalisation, crossborder, goods, trade, lin...

34 [macroeconomic, programmes, policies, was, int...

35 [banking, system, positive, nonbanks, intercon...

36 [central, bankers, banks, credibility, communi...

37 [leverage, derivatives, haircuts, leveraged, m...

38 [challenges, european, banking, sector, proble...

39 [foreign, direct, fdi, investment, inward, out...

40 [stability, financial, unwinding, implications...

41 [diffusion, technologies, new, technological, ...

42 [considerations, personal, my, however, views,...

43 [central, inflation, challenge, distortion, ba...

Representative_Docs

0 [on the other hand if the functioning of the c...

1 [first the nature of the inflation shock we ar...

2 [as i have already mentioned financial integra...

3 [however the benefits from expansionary polici...

4 [the economy may then enter on a selfsustainin...

5 [at the same time market valuations of banks h...

6 [a recent ecb study on eu banking structures e...

7 [the ongoing economic expansion of the euro ar...

8 [while the first phase of the crisis can be in...

9 [the euro and the financial markets the creati...

10 [the recovery has been driven almost entirely ...

11 [this is mainly due to more sustained growth i...

12 [on the asset side the flattening of the term ...

13 [the view that crisis resolution mechanisms we...

14 [this work is particularly relevant for the on...

15 [the issue of a large and growing us current a...

16 [all in all the growth in the economic weight ...

17 [most studies point to the potential competiti...

18 [in principle such effects should normally be ...

19 [similarly inadequate information about the qu...

20 [the justification for this threshold is twofo...

21 [the prospective budgetary costs of population...

22 [the fiscal component of sound finances failin...

23 [it acts as an engine for creating a more inte...

24 [apart from the wellknown recognition and deci...

25 [to build with passion and vigour a shared fut...

26 [this could take the form of continued consoli...

27 [during the years leading up to emu indeed sev...

28 [in addition our monetary analysis points to u...

29 [the capital and borrowerbased macroprudential...

30 [moreover net demand for all types of loans ha...

31 [18 such spirals could be triggered if funds w...

32 [at the european level authorities are well aw...

33 [if we define globalisation as the increasing ...

34 [this development was unwarranted given the un...

35 [in addition as was shown yesterday there is s...

36 [incidentally the identification of the distur...

37 [15 for example taking the financial reporting...

38 [one of the practical problems when following ...

39 [gross foreign direct investment fdi transacti...

40 [for years the problem of the sustainability o...

41 [for instance a recent ecb survey of large eur...

42 [however all this has to be weighed against th...

43 [while my comments so far about technical prog...

Exploring Topics

# Define stopwords

stop_words = set(stopwords.words("english"))

# Display top words for each topic (filtered)

print("\nECB Topics and their representative words:")

for topic_num in range(min(8, len(topic_info) - 1)):

if topic_num != -1:

topic_words = topic_model.get_topic(topic_num)

# Filter out stopwords, keeping enough to get 10 non-stopword words

words = []

for word, _ in topic_words:

if word not in stop_words:

words.append(word)

if len(words) == 10:

break

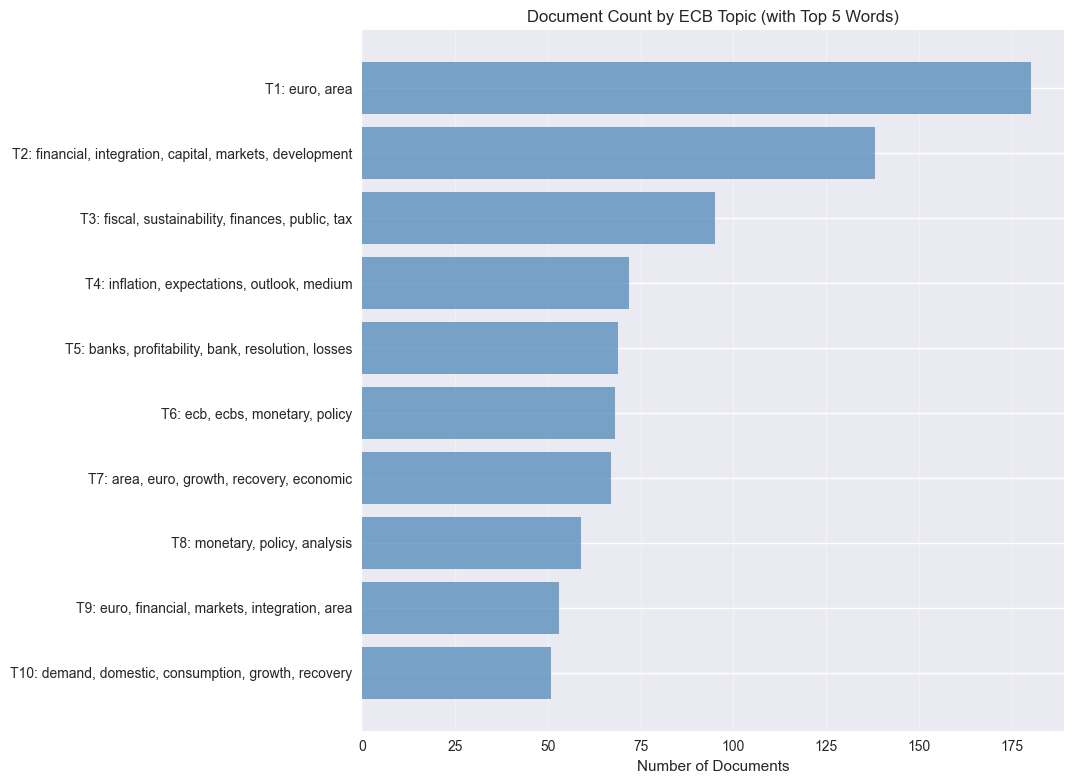

print(f"Topic {topic_num+1}: {', '.join(words)}")ECB Topics and their representative words:

Topic 1: euro, area

Topic 2: financial, integration, capital, markets, development, growth, allocation

Topic 3: fiscal, sustainability, finances, public, tax, imbalances

Topic 4: inflation, expectations, outlook, medium

Topic 5: banks, profitability, bank, resolution, losses, would

Topic 6: ecb, ecbs, monetary, policy

Topic 7: area, euro, growth, recovery, economic, demand, domestic

Topic 8: monetary, policy, analysis

❓Question: What does each of these topics mean?

Topic Visualizations

# Create visualizations

try:
    # Topic word scores (the keyword is top_n_topics, not top_k_topics)
    fig1 = topic_model.visualize_barchart(top_n_topics=min(8, len(topic_info)-1), n_words=10, height=400)
    fig1.show()

    # Topic similarity
    fig2 = topic_model.visualize_topics(height=600)
    fig2.show()
except Exception as e:
    print(f"Visualization error: {e}")
    print("Creating alternative visualizations...")

    # Alternative: horizontal bar plot with topic word labels
    plt.figure(figsize=(12, 8))
    topic_counts = topic_info[topic_info['Topic'] != -1].head(10)

    if len(topic_counts) > 0:
        # Sort by topic number to ensure proper order
        topic_counts = topic_counts.sort_values('Topic')

        # Create topic labels with top 5 words (filtered for stopwords, adding 1 to topic numbers)
        topic_labels = []
        for topic_num in topic_counts['Topic']:
            try:
                topic_words = topic_model.get_topic(topic_num)
                # Filter out stopwords and get top 5 remaining words
                top_words = []
                for word, _ in topic_words:
                    if word not in stop_words:
                        top_words.append(word)
                    if len(top_words) == 5:
                        break
                label = f"T{topic_num + 1}: {', '.join(top_words)}"  # Add 1 to topic number
                topic_labels.append(label)
            except Exception:
                topic_labels.append(f"T{topic_num + 1}: (words unavailable)")  # Add 1 to topic number

        # Reverse the order so Topic 1 (originally 0) is at the top
        topic_labels_reversed = topic_labels[::-1]
        counts_reversed = topic_counts['Count'].values[::-1]

        # Create horizontal bar chart
        y_pos = range(len(topic_counts))
        plt.barh(y_pos, counts_reversed, color='steelblue', alpha=0.7)

        # Customize the plot
        plt.yticks(y_pos, topic_labels_reversed)
        plt.xlabel('Number of Documents')
        plt.title('Document Count by ECB Topic (with Top 5 Words)')
        plt.grid(True, alpha=0.3, axis='x')

        # Adjust layout to accommodate longer labels
        plt.tight_layout()
        plt.subplots_adjust(left=0.4)  # Make room for topic labels
        plt.show()
    else:
        print("No topics available for visualization")


BERTopic by sentiment

# Filter for positive and negative sentiments

positive_data = statements_for_topics[statements_for_topics['sentiment_label'] == 'Positive'].copy()

negative_data = statements_for_topics[statements_for_topics['sentiment_label'] == 'Negative'].copy()

print(f"Positive statements: {len(positive_data)}")

print(f"Negative statements: {len(negative_data)}")

# Function to run BERTopic on a subset

def run_bertopic_by_sentiment(data, sentiment_type):
    """Run BERTopic on statements filtered by sentiment"""
    if len(data) < 10:
        print(f"\nNot enough {sentiment_type} statements for topic modeling (minimum 10 required)")
        return None, None

    print(f"\n{'='*60}")
    print(f"BERTopic Analysis - {sentiment_type} Sentiment")
    print(f"{'='*60}")

    # Prepare documents
    documents = data['text_bertopic'].tolist()

    # Initialize BERTopic
    vectorizer_model = CountVectorizer(min_df=2, max_df=0.95)
    topic_model = BERTopic(
        vectorizer_model=vectorizer_model,
        min_topic_size=10,
        nr_topics='auto',
        verbose=True
    )

    # Fit the model
    topics, probabilities = topic_model.fit_transform(documents)

    # Get topic info
    topic_info = topic_model.get_topic_info()
    print(f"\nNumber of topics found: {len(topic_info) - 1}")  # -1 to exclude outlier topic
    print(f"\nTopic distribution:")
    print(topic_info.head(10))

    # Display top words for each topic (filtered for stopwords)
    stop_words = set(stopwords.words("english"))
    print(f"\n{sentiment_type} Topics and their representative words:")
    for topic_num in range(min(8, len(topic_info) - 1)):
        if topic_num != -1:
            topic_words = topic_model.get_topic(topic_num)
            # Filter out stopwords and get up to 10 remaining words
            words = []
            for word, _ in topic_words:
                if word not in stop_words:
                    words.append(word)
                if len(words) == 10:
                    break
            print(f"Topic {topic_num}: {', '.join(words)}")

    return topic_model, topic_info

# Run BERTopic for Positive sentiment

positive_model, positive_info = run_bertopic_by_sentiment(positive_data, "Positive")

# Run BERTopic for Negative sentiment

negative_model, negative_info = run_bertopic_by_sentiment(negative_data, "Negative")2026-03-09 09:38:03,082 - BERTopic - Embedding - Transforming documents to embeddings.

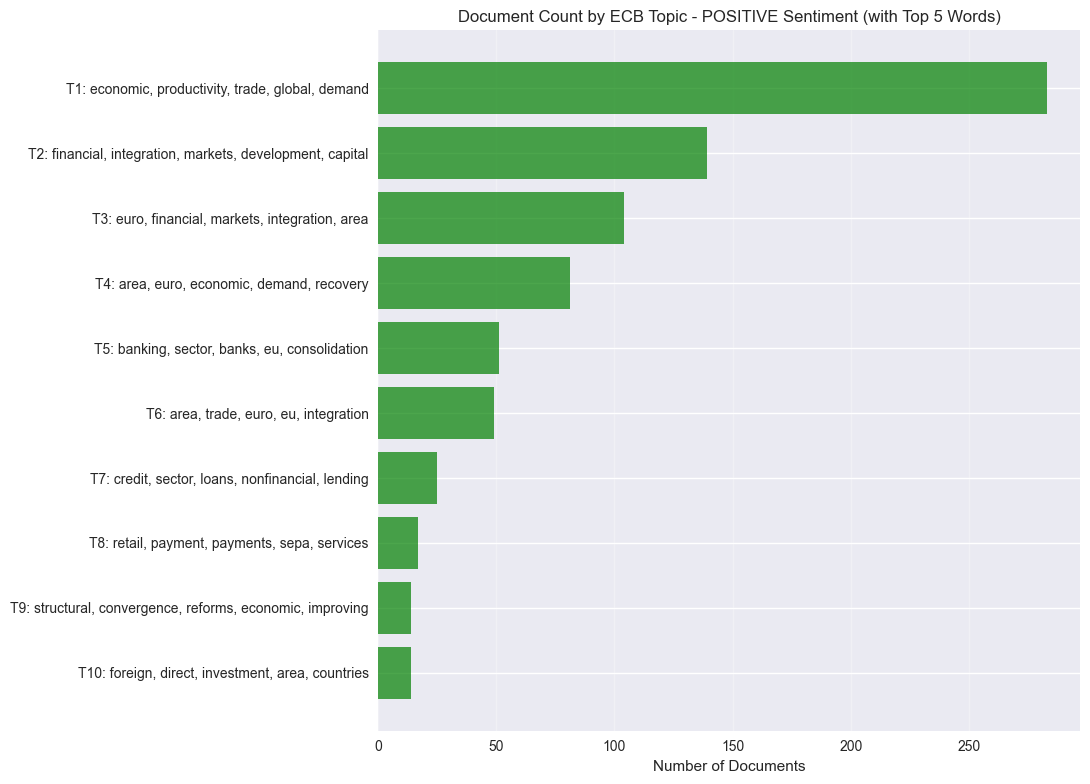

Positive statements: 954

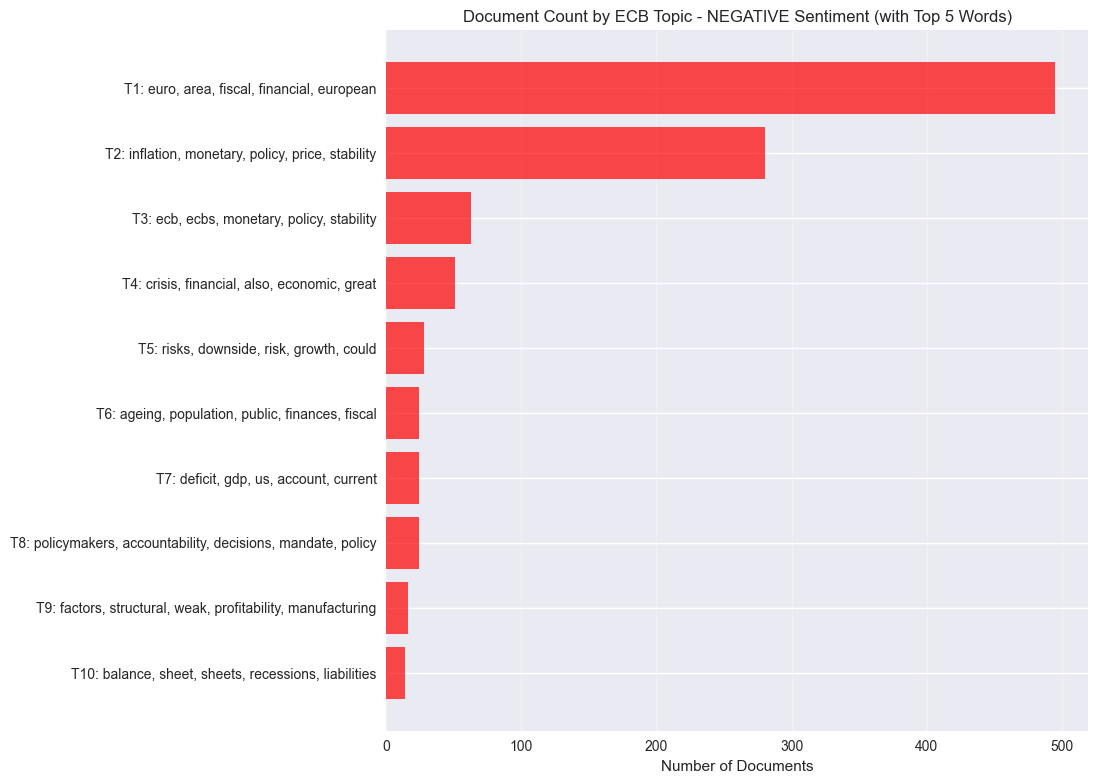

Negative statements: 1609

============================================================

BERTopic Analysis - Positive Sentiment

============================================================

Batches: 100%|██████████| 30/30 [00:02<00:00, 10.79it/s]

2026-03-09 09:38:07,543 - BERTopic - Embedding - Completed ✓

2026-03-09 09:38:07,543 - BERTopic - Dimensionality - Fitting the dimensionality reduction algorithm

2026-03-09 09:38:08,524 - BERTopic - Dimensionality - Completed ✓

2026-03-09 09:38:08,524 - BERTopic - Cluster - Start clustering the reduced embeddings

2026-03-09 09:38:08,577 - BERTopic - Cluster - Completed ✓

2026-03-09 09:38:08,579 - BERTopic - Representation - Extracting topics using c-TF-IDF for topic reduction.

2026-03-09 09:38:08,626 - BERTopic - Representation - Completed ✓

2026-03-09 09:38:08,626 - BERTopic - Topic reduction - Reducing number of topics

2026-03-09 09:38:08,630 - BERTopic - Representation - Fine-tuning topics using representation models.

2026-03-09 09:38:08,651 - BERTopic - Representation - Completed ✓

2026-03-09 09:38:08,651 - BERTopic - Topic reduction - Reduced number of topics from 13 to 13

2026-03-09 09:38:08,704 - BERTopic - Embedding - Transforming documents to embeddings.

Number of topics found: 12

Topic distribution:

Topic Count Name \

0 -1 153 -1_has_market_for_investment

1 0 283 0_economic_productivity_is_has

2 1 139 1_financial_integration_markets_development

3 2 104 2_euro_financial_markets_integration

4 3 81 3_area_euro_economic_demand

5 4 51 4_banking_sector_banks_eu

6 5 49 5_area_trade_euro_eu

7 6 25 6_credit_sector_loans_nonfinancial

8 7 17 7_retail_payment_payments_sepa

9 8 14 8_structural_convergence_reforms_economic

Representation \

0 [has, market, for, investment, as, euro, finan...

1 [economic, productivity, is, has, by, trade, g...

2 [financial, integration, markets, development,...

3 [euro, financial, markets, integration, area, ...

4 [area, euro, economic, demand, recovery, activ...

5 [banking, sector, banks, eu, consolidation, is...

6 [area, trade, euro, eu, integration, europe, w...

7 [credit, sector, loans, nonfinancial, lending,...

8 [retail, payment, payments, sepa, services, sy...

9 [structural, convergence, reforms, economic, i...

Representative_Docs

0 [lending rates for euro area firms and househo...

1 [moreover in many of these emerging economies ...

2 [financial integration is a key factor in the ...

3 [the euro has acted as a catalyst for the inte...

4 [economic activity in the euro area is also ex...

5 [these findings are particularly relevant for ...

6 [external factors such as more sustained growt...

7 [the transmission of the improvement in banks ...

8 [sizeable financial benefits are expected from...

9 [so taken together structural reforms promote ...

Positive Topics and their representative words:

Topic 0: economic, productivity, trade, global, demand

Topic 1: financial, integration, markets, development, capital, economic, system, international

Topic 2: euro, financial, markets, integration, area, market, european, currency, introduction

Topic 3: area, euro, economic, demand, recovery, activity, labour, domestic

Topic 4: banking, sector, banks, eu, consolidation, integration, intermediation, european

Topic 5: area, trade, euro, eu, integration, europe, within, global

Topic 6: credit, sector, loans, nonfinancial, lending, corporations, funding, bank

Topic 7: retail, payment, payments, sepa, services, systems, competition, innovation

============================================================

BERTopic Analysis - Negative Sentiment

============================================================

Batches: 100%|██████████| 51/51 [00:04<00:00, 10.63it/s]

2026-03-09 09:38:15,051 - BERTopic - Embedding - Completed ✓

2026-03-09 09:38:15,051 - BERTopic - Dimensionality - Fitting the dimensionality reduction algorithm

2026-03-09 09:38:18,315 - BERTopic - Dimensionality - Completed ✓

2026-03-09 09:38:18,317 - BERTopic - Cluster - Start clustering the reduced embeddings

2026-03-09 09:38:18,402 - BERTopic - Cluster - Completed ✓

2026-03-09 09:38:18,404 - BERTopic - Representation - Extracting topics using c-TF-IDF for topic reduction.

2026-03-09 09:38:18,451 - BERTopic - Representation - Completed ✓

2026-03-09 09:38:18,451 - BERTopic - Topic reduction - Reducing number of topics

2026-03-09 09:38:18,451 - BERTopic - Representation - Fine-tuning topics using representation models.

2026-03-09 09:38:18,496 - BERTopic - Representation - Completed ✓

2026-03-09 09:38:18,498 - BERTopic - Topic reduction - Reduced number of topics from 27 to 16

Number of topics found: 15

Topic distribution:

Topic Count Name \

0 -1 531 -1_for_would_by_be

1 0 495 0_euro_area_fiscal_for

2 1 280 1_inflation_monetary_policy_price

3 2 63 2_ecb_ecbs_monetary_policy

4 3 51 3_crisis_financial_also_economic

5 4 28 4_risks_downside_risk_growth

6 5 24 5_ageing_population_public_finances

7 6 24 6_deficit_gdp_us_account

8 7 24 7_policymakers_accountability_decisions_their

9 8 16 8_factors_structural_weak_profitability

Representation \

0 [for, would, by, be, financial, it, which, an,...

1 [euro, area, fiscal, for, be, with, it, financ...

2 [inflation, monetary, policy, price, stability...

3 [ecb, ecbs, monetary, policy, for, was, with, ...

4 [crisis, financial, also, economic, it, great,...

5 [risks, downside, risk, growth, could, place, ...

6 [ageing, population, public, finances, for, fi...

7 [deficit, gdp, us, account, current, 2010, uni...

8 [policymakers, accountability, decisions, thei...

9 [factors, structural, weak, profitability, man...

Representative_Docs

0 [the euro area entered the crisis with an inco...

1 [not only do banks in the affected countries o...

2 [there is also the risk that monetary policy i...

3 [first some commentators have stated that sinc...

4 [it is my contention that the main driver of t...

5 [1 yet it was clear that the loss of growth mo...

6 [the prospective budgetary costs of population...

7 [thus from a pure accounting perspective the u...

8 [21 clearly policymakers who rely exclusively ...

9 [in addition to these structural factors the c...

Negative Topics and their representative words:

Topic 0: euro, area, fiscal, financial, european

Topic 1: inflation, monetary, policy, price, stability, rates

Topic 2: ecb, ecbs, monetary, policy, stability, governing

Topic 3: crisis, financial, also, economic, great, lehman

Topic 4: risks, downside, risk, growth, could, place, threat, global, form, prices

Topic 5: ageing, population, public, finances, fiscal, longterm, demographic, pension, costs

Topic 6: deficit, gdp, us, account, current, 2010, united, increase, oil, deficits

Topic 7: policymakers, accountability, decisions, mandate, policy, would, makers, assessments

# Visualize Positive Topics

if positive_model is not None:
    print("\n" + "="*60)
    print("POSITIVE SENTIMENT VISUALIZATIONS")
    print("="*60)
    try:
        # The keyword is top_n_topics; top_k_topics raises a TypeError and triggers the fallback below
        fig1 = positive_model.visualize_barchart(top_n_topics=min(8, len(positive_info)-1), n_words=10, height=400)
        fig1.show()
        fig2 = positive_model.visualize_topics(height=600)
        fig2.show()
    except Exception as e:
        print(f"Visualization error: {e}")
        print("Creating alternative visualization...")
        plt.figure(figsize=(12, 8))
        topic_counts = positive_info[positive_info['Topic'] != -1].head(10)
        if len(topic_counts) > 0:
            topic_counts = topic_counts.sort_values('Topic')
            stop_words = set(stopwords.words("english"))
            topic_labels = []
            for topic_num in topic_counts['Topic']:
                try:
                    topic_words = positive_model.get_topic(topic_num)
                    # Keep the first 5 non-stopword terms as the label
                    top_words = []
                    for word, _ in topic_words:
                        if word not in stop_words:
                            top_words.append(word)
                        if len(top_words) == 5:
                            break
                    label = f"T{topic_num + 1}: {', '.join(top_words)}"
                    topic_labels.append(label)
                except Exception:
                    topic_labels.append(f"T{topic_num + 1}: (words unavailable)")
            # Reverse so the first topic is drawn at the top of the chart
            topic_labels_reversed = topic_labels[::-1]
            counts_reversed = topic_counts['Count'].values[::-1]
            y_pos = range(len(topic_counts))
            plt.barh(y_pos, counts_reversed, color='green', alpha=0.7)
            plt.yticks(y_pos, topic_labels_reversed)
            plt.xlabel('Number of Documents')
            plt.title('Document Count by ECB Topic - POSITIVE Sentiment (with Top 5 Words)')
            plt.grid(True, alpha=0.3, axis='x')
            plt.tight_layout()
            plt.subplots_adjust(left=0.4)
            plt.show()

# Visualize Negative Topics

if negative_model is not None:
    print("\n" + "="*60)
    print("NEGATIVE SENTIMENT VISUALIZATIONS")
    print("="*60)
    try:
        # The keyword is top_n_topics; top_k_topics raises a TypeError and triggers the fallback below
        fig1 = negative_model.visualize_barchart(top_n_topics=min(8, len(negative_info)-1), n_words=10, height=400)
        fig1.show()
        fig2 = negative_model.visualize_topics(height=600)
        fig2.show()
    except Exception as e:
        print(f"Visualization error: {e}")
        print("Creating alternative visualization...")
        plt.figure(figsize=(12, 8))
        topic_counts = negative_info[negative_info['Topic'] != -1].head(10)
        if len(topic_counts) > 0:
            topic_counts = topic_counts.sort_values('Topic')
            stop_words = set(stopwords.words("english"))
            topic_labels = []
            for topic_num in topic_counts['Topic']:
                try:
                    topic_words = negative_model.get_topic(topic_num)
                    # Keep the first 5 non-stopword terms as the label
                    top_words = []
                    for word, _ in topic_words:
                        if word not in stop_words:
                            top_words.append(word)
                        if len(top_words) == 5:
                            break
                    label = f"T{topic_num + 1}: {', '.join(top_words)}"
                    topic_labels.append(label)
                except Exception:
                    topic_labels.append(f"T{topic_num + 1}: (words unavailable)")
            # Reverse so the first topic is drawn at the top of the chart
            topic_labels_reversed = topic_labels[::-1]
            counts_reversed = topic_counts['Count'].values[::-1]
            y_pos = range(len(topic_counts))
            plt.barh(y_pos, counts_reversed, color='red', alpha=0.7)
            plt.yticks(y_pos, topic_labels_reversed)
            plt.xlabel('Number of Documents')
            plt.title('Document Count by ECB Topic - NEGATIVE Sentiment (with Top 5 Words)')
            plt.grid(True, alpha=0.3, axis='x')
            plt.tight_layout()
            plt.subplots_adjust(left=0.4)
            plt.show()

============================================================

POSITIVE SENTIMENT VISUALIZATIONS

============================================================

Visualization error: BERTopic.visualize_barchart() got an unexpected keyword argument 'top_k_topics'

Creating alternative visualization...

============================================================

NEGATIVE SENTIMENT VISUALIZATIONS

============================================================

Visualization error: BERTopic.visualize_barchart() got an unexpected keyword argument 'top_k_topics'

Creating alternative visualization...
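The stopword-filtering loop appears in both the topic summary and the fallback plots. As a minimal sketch, it could be factored into one helper. The name `top_content_words` and the toy inputs are hypothetical and not part of the lab code; the input is assumed to be a list of `(word, score)` pairs, which is the shape `get_topic()` returns.

```python
def top_content_words(topic_words, stop_words, k=10):
    """Return up to k words from (word, score) pairs, skipping stopwords."""
    words = []
    for word, _score in topic_words:
        if word not in stop_words:
            words.append(word)
        if len(words) == k:
            break
    return words


# Hypothetical (word, score) pairs, for illustration only
example_topic = [("euro", 0.09), ("for", 0.08), ("area", 0.07), ("be", 0.06), ("fiscal", 0.05)]
example_stop_words = {"for", "be", "with", "it"}

print(top_content_words(example_topic, example_stop_words, k=2))
```

An alternative worth trying is to pass `stop_words="english"` to `CountVectorizer`, so that stopwords never enter the topic representations in the first place.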

🗣️ CLASSROOM DISCUSSION:

- Which ECB topics seem most coherent and economically meaningful?

- What advantages does BERTopic offer for central bank communication analysis?

Key Differences: BERTopic vs Traditional Methods for Financial Text

| Aspect | Traditional (LDA) | BERTopic |
|---|---|---|
| Number of topics | Chosen manually (K) | Inferred from clustering (HDBSCAN by default), optionally reduced via `nr_topics` |
| Text representation | Bag-of-words | Transformer embeddings |
| Financial jargon | Struggles with specialized terms | Better semantic understanding |
| Economic context | Limited context awareness | Rich contextual relationships |
| Policy language | Word co-occurrence patterns | Semantic policy relationships |

💡 TAKEAWAY: BERTopic’s transformer-based approach is particularly valuable for financial and economic text analysis. Central bank communications often contain nuanced policy language and technical economic concepts that benefit from BERTopic’s semantic understanding.
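To make the "Text Representation" row concrete, here is a small sketch of the bag-of-words side only. It uses hypothetical sentences and a hand-rolled cosine similarity on whitespace tokens, not the lab's vectorizer. Two statements that describe the same kind of policy move but share no words get a similarity of zero, which is the gap that embedding-based methods are designed to close.

```python
import math
from collections import Counter


def bow_cosine(a: str, b: str) -> float:
    """Cosine similarity between two texts using raw word counts."""
    ca, cb = Counter(a.lower().split()), Counter(b.lower().split())
    dot = sum(ca[w] * cb[w] for w in ca)
    norm = math.sqrt(sum(v * v for v in ca.values())) * math.sqrt(sum(v * v for v in cb.values()))
    return dot / norm if norm else 0.0


# Hypothetical sentences, for illustration only
s1 = "the ecb raised interest rates"
s2 = "policymakers tightened monetary conditions"
s3 = "the ecb raised interest rates again"

print(bow_cosine(s1, s2))  # related meaning, but no words in common
print(bow_cosine(s1, s3))  # nearly identical wording
```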

Keyness Analysis: Positive vs Negative ECB Sentiment

Keyness is a corpus linguistics technique that identifies words that are unusually frequent in one group of texts relative to another, to a statistically notable degree; here, the two groups are positive and negative ECB statements.

Unlike simple word frequency, keyness tells you which terms are distinctive to each sentiment, not merely common overall. A word like financial appears frequently in both groups, so it has low keyness. A word like crisis that appears disproportionately in negative statements has high keyness for that group.

We’ll use log-likelihood (G²) as the keyness statistic. It is robust to unequal corpus sizes (here ~954 positive vs ~1,609 negative statements) and is standard practice in corpus linguistics.

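📐 Log-likelihood formula: \[G^2 = 2 \sum_i O_i \ln\left(\frac{O_i}{E_i}\right)\] where \(O_i\) is the observed count of the term in corpus \(i\) and \(E_i = N_i (O_1 + O_2) / (N_1 + N_2)\) is its expected count under the null hypothesis of equal relative frequency, with \(N_i\) the total term count of corpus \(i\). A higher G² means a more distinctive term. G² itself is never negative, so we attach a sign by convention: positive if the term is relatively more frequent in positive statements, negative if it is relatively more frequent in negative statements.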
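As a worked illustration of the formula, the sketch below computes signed G² for a single term from made-up counts. The function name `signed_g2` and all numbers are hypothetical; the lab's own vectorised implementation follows further down.

```python
import math


def signed_g2(o1, o2, n1, n2):
    """Signed log-likelihood for one term.

    o1, o2: observed counts of the term in corpus 1 and corpus 2
    n1, n2: total term counts of corpus 1 and corpus 2
    """
    total = o1 + o2
    e1 = n1 * total / (n1 + n2)  # expected count in corpus 1
    e2 = n2 * total / (n1 + n2)  # expected count in corpus 2
    g2 = 0.0
    for observed, expected in ((o1, e1), (o2, e2)):
        if observed > 0:  # a zero count contributes nothing to the sum
            g2 += observed * math.log(observed / expected)
    g2 *= 2
    # Sign convention: positive if the term is relatively more frequent in corpus 1
    return g2 if o1 / n1 >= o2 / n2 else -g2


# Hypothetical counts: a term seen 5 times in 10,000 positive tokens
# and 40 times in 15,000 negative tokens
score = signed_g2(o1=5, o2=40, n1=10_000, n2=15_000)
print(f"signed G² = {score:.2f}")
```

With these counts the expected values are 18 and 27, so the term is under-represented in the positive corpus and the score comes out negative.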

# Split corpus by sentiment

pos_texts = ecb_data[ecb_data['sentiment_label'] == 'Positive']['text_clean'].tolist()

neg_texts = ecb_data[ecb_data['sentiment_label'] == 'Negative']['text_clean'].tolist()

# Build a shared vocabulary from both corpora

cv_keyness = CountVectorizer(max_features=2000, min_df=5, ngram_range=(1, 1),

token_pattern=r'\b[a-zA-Z]{3,}\b')

cv_keyness.fit(pos_texts + neg_texts)

vocab = cv_keyness.get_feature_names_out()

# Get term frequencies for each group

pos_matrix = cv_keyness.transform(pos_texts)

neg_matrix = cv_keyness.transform(neg_texts)

pos_freq = np.array(pos_matrix.sum(axis=0)).flatten() # count per term in positive

neg_freq = np.array(neg_matrix.sum(axis=0)).flatten() # count per term in negative

# Corpus totals

total_pos = pos_freq.sum()

total_neg = neg_freq.sum()

total = total_pos + total_neg

# Log-likelihood (G²) keyness

def log_likelihood(o1, o2, total1, total2):
    """Compute signed log-likelihood keyness for each term.

    Positive = term favours corpus 1 (positive sentiment).
    Negative = term favours corpus 2 (negative sentiment).
    """
    n = total1 + total2
    # Expected counts under equal relative frequency
    e1 = total1 * (o1 + o2) / n
    e2 = total2 * (o1 + o2) / n
    # Guard against log(0): a zero observed count contributes 0 to the sum
    with np.errstate(divide='ignore', invalid='ignore'):
        term1 = np.where(o1 > 0, o1 * np.log(o1 / e1), 0.0)
        term2 = np.where(o2 > 0, o2 * np.log(o2 / e2), 0.0)
    ll = 2 * (term1 + term2)
    # Sign: positive if over-represented in corpus 1 (positive sentiment)
    sign = np.where(o1 / total1 >= o2 / total2, 1, -1)
    return sign * ll

keyness_scores = log_likelihood(pos_freq, neg_freq, total_pos, total_neg)

keyness_df = pd.DataFrame({

'term' : vocab,

'keyness' : keyness_scores,

'pos_count' : pos_freq.astype(int),

'neg_count' : neg_freq.astype(int),

'pos_freq_pct': (pos_freq / total_pos * 100).round(4),

'neg_freq_pct': (neg_freq / total_neg * 100).round(4),

}).sort_values('keyness', ascending=False)

print('Top 15 keywords for POSITIVE sentiment:')

print(keyness_df.head(15)[['term','keyness','pos_count','neg_count']].to_string(index=False))

print()

print('Top 15 keywords for NEGATIVE sentiment:')

print(keyness_df.tail(15).sort_values('keyness')[['term','keyness','pos_count','neg_count']].to_string(index=False))

# ── Diverging bar chart of top keywords per sentiment ──

n = 15

top_pos = keyness_df.head(n).copy()

top_neg = keyness_df.tail(n).sort_values('keyness').copy()

plot_df = pd.concat([top_neg, top_pos]).reset_index(drop=True)

colors = ['#d73027' if k < 0 else '#4575b4' for k in plot_df['keyness']]

fig, ax = plt.subplots(figsize=(10, 9))

bars = ax.barh(plot_df['term'], plot_df['keyness'], color=colors, edgecolor='white', linewidth=0.4)

ax.axvline(0, color='black', linewidth=0.8)

ax.set_xlabel('Log-likelihood keyness (G²)', fontsize=12)

ax.set_title('Keyness: Positive vs Negative ECB Sentiment\n'

'(blue = distinctive of positive | red = distinctive of negative)',

fontsize=13, pad=12)

ax.grid(axis='x', alpha=0.3)

plt.tight_layout()

plt.show()

❓ Question: Which terms are most distinctive of positive vs negative ECB statements? Do the results match your intuitions about central bank communication?
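When judging which terms are genuinely distinctive, it can help to translate a G² score into an approximate p-value. The sketch below assumes G² follows a chi-square distribution with one degree of freedom, which is the usual large-sample approximation and is less reliable for rare terms. The helper `g2_p_value` is hypothetical and uses only the standard library; the scores in the loop are example values, not results from the ECB data.

```python
import math


def g2_p_value(g2: float) -> float:
    """Approximate p-value for a (possibly signed) G² score, 1 degree of freedom.

    For one degree of freedom, P(chi-square > x) equals erfc(sqrt(x / 2)).
    """
    return math.erfc(math.sqrt(abs(g2) / 2))


# Example scores, for illustration only
for example_score in (3.84, 6.63, 15.13, -18.63):
    print(f"G² = {example_score:+.2f} -> p ≈ {g2_p_value(example_score):.4f}")
```

Because hundreds of terms are tested at once, a stricter threshold than the conventional 3.84 (p ≈ 0.05) is a sensible choice when reading the chart.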

Summary and Next Steps

In this lab, we’ve explored text analysis applied to European Central Bank statements:

- Financial text preprocessing using Python’s NLP libraries

- Economic document-term matrices for sentiment and topic analysis

- Supervised learning for ECB sentiment classification

- Model interpretation using SHAP values for financial text features

- Modern topic modeling of central bank communications with BERTopic

Key Applications for Financial Text Analysis:

- Central bank communication analysis - Policy stance detection

- Market sentiment analysis - Economic outlook assessment

- Financial news analysis - Automated sentiment scoring

- Regulatory text mining - Policy theme extraction

Extensions to consider:

- Time series analysis of ECB sentiment over economic cycles

- Cross-lingual analysis of multilingual central bank communications

- Aspect-based sentiment analysis for specific policy areas

- Integration with economic indicators for predictive modeling