✅ A Possible Model Solution for the W08 Summative

What follows is a possible solution for the W08 summative. Note that I purposely avoided very elaborate solutions here and avoided optimizing the performance of the models to death. I tried to go for rather straightforward but justified solutions.

⚙️ Setup

import pandas as pd

import numpy as np

import missingno as msno

import matplotlib.pyplot as plt

import matplotlib.ticker as mticker

import plotly.express as px

from plotly.subplots import make_subplots

import plotly.graph_objects as go

import seaborn as sns

from statsmodels.stats.outliers_influence import variance_inflation_factor

from sklearn.linear_model import LinearRegression, LogisticRegression

from sklearn.preprocessing import StandardScaler

from sklearn.model_selection import TimeSeriesSplit, RandomizedSearchCV,cross_validate, GridSearchCV

from sklearn.pipeline import Pipeline

from sklearn.feature_selection import SequentialFeatureSelector

from sklearn.metrics import (

r2_score, mean_absolute_error, mean_squared_error,

f1_score, precision_score, recall_score,

roc_auc_score, average_precision_score,

balanced_accuracy_score, roc_curve,

precision_recall_curve, confusion_matrix

)

from imblearn.ensemble import BalancedRandomForestClassifier

import shap

# Importing LightGBM

import lightgbm as lgb

import warnings

warnings.filterwarnings('ignore')

plt.style.use('seaborn-v0_8-whitegrid')Part 1: predicting next-month gold returns

1.3 Construction and finalisation of the dataset

1.3.1 Constructing the target variable

We begin by loading the main monthly dataset and constructing the monthly gold return series.

gold = pd.read_csv('data/gold_price_prediction_dataset.csv', parse_dates=['date'])

gold = gold.sort_values('date').reset_index(drop=True)

# Compute monthly percentage return: r_t = 100 * (P_t - P_{t-1}) / P_{t-1}

gold['gold_return'] = 100 * gold['gold_price'].pct_change()

# The TARGET is next-month return: r_{t+1}

# Shift gold_return back by one row so that row t holds the return realised at t+1

gold['target'] = gold['gold_return'].shift(-1)Why this alignment?

Each row of our modelling dataset represents month \(t\). The predictors observed in month \(t\) should be paired with the outcome observed in month \(t+1\) i.e the return that a trader, having seen this month’s data, would be trying to predict. Pairing predictors with the contemporaneous return would be a data leakage error: in a real forecasting setting, the return for month \(t\) is only realised at the end of month \(t\), after the predictor data has been observed. This is one of the most common errors in financial forecasting exercises and must be verified explicitly.

Verification:

jan20 = gold[gold['date'] == '2020-01-01'].iloc[0]

feb20 = gold[gold['date'] == '2020-02-01'].iloc[0]

computed_return = 100 * (feb20['gold_price'] - jan20['gold_price']) / jan20['gold_price']

print(f"Jan 2020 gold price: {jan20['gold_price']:.2f}")

print(f"Feb 2020 gold price: {feb20['gold_price']:.2f}")

print(f"Computed Feb 2020 return: {computed_return:.4f}")

print(f"Value of 'target' in Jan 2020 row: {jan20['target']:.4f}")Jan 2020 gold price: 1552.40

Feb 2020 gold price: 1566.70

Computed Feb 2020 return: 0.9212

Value of 'target' in Jan 2020 row: 0.9212The January 2020 row carries a target of 0.9212, that is exactly the return realised in February 2020, confirming correct one-month-ahead alignment.

1.3.2 Constructing real yield

gold['real_yield'] = gold['us_10y_yield'] - gold['us_10y_breakeven_inflation']Economic rationale:

Gold pays no coupon or dividend, unlike bonds or stocks, it generates no income while you hold it. This means the question “should I hold gold?” is always being implicitly compared to “what else could I earn instead?” The answer depends on real yields: the interest rate on government bonds after adjusting for expected inflation.

Here is the logic in plain terms. Suppose you are deciding between holding gold and holding a US government inflation-protected bond (called a TIPS). If real yields are high — say, +2% per year — the bond pays you a real return while gold pays nothing. Gold is then costly to hold. But if real yields are negative — say, −1% per year, which happened from 2020 to 2021 — the “safe” bond is actually eroding your purchasing power. Suddenly gold, which at least holds its value, looks more attractive, and demand rises.

This is why the gold–real yield relationship is an empirically robust regularity in financial economics (Erb and Harvey 2013): when real yields fall, gold tends to rally; when real yields rise, gold tends to decline, and this pattern is especially pronounced when real rates are very low or negative. The three biggest gold rallies in our sample — post‑2008, post‑COVID, and 2022–2023 — all coincided with periods of deeply negative real yields driven by the Federal Reserve’s stimulus policies.

Using the nominal 10‑year yield alone would conflate two very different effects: real yields rising (typically bad for gold) and inflation expectations rising (typically good for gold, to the extent that gold serves as an inflation hedge). Constructing real yield as the nominal yield minus breakeven inflation cleanly separates these two economically distinct forces, which is why we construct it explicitly in Section 1.3.2.

(Baur, Lucey, and McDermott 2010) provide empirical evidence that gold acts as a safe haven for investors in developed economies, particularly during periods of market stress, which is consistent with gold’s appeal when real interest rates are low or negative.

1.3.3 Integrating financial conditions (NFCI)

The NFCI is published weekly. Since our gold dataset is monthly, we must aggregate to monthly frequency before merging.

nfci = pd.read_csv('data/nfci-data-series-csv.csv')

nfci['date'] = pd.to_datetime(nfci['Friday_of_Week'])

nfci['year_month'] = nfci['date'].dt.to_period('M')

# Aggregate to monthly: take the mean of all weekly readings within each calendar month

nfci_monthly = nfci.groupby('year_month')['NFCI'].mean().reset_index()

nfci_monthly['date'] = nfci_monthly['year_month'].dt.to_timestamp()

nfci_monthly = nfci_monthly.drop(columns='year_month')

nfci_monthly = nfci_monthly.rename(columns={'NFCI': 'nfci_monthly'})

# Merge into main dataset

gold = gold.merge(nfci_monthly, on='date', how='left')Justification for taking the monthly mean:

Within any given month, conditions in credit, risk and leverage markets fluctuate week to week. A month’s mean NFCI summarises the average financial tightness experienced throughout that month, which is more representative of the macro environment than any single reading (e.g. the first or last week). An alternative would be to take the end-of-month reading to mimic the information available at a specific point in time; however, since gold prices themselves are measured at the start of the month in this dataset, averaging the full month’s readings is the more natural choice. We keep only the headline NFCI index rather than its sub-components to avoid introducing highly correlated features.

1.3.4 Dataset assessment

# Drop the last row (no target for the most recent month)

modelling_df = gold.dropna(subset=['target']).copy()

print(f"Time coverage: {modelling_df['date'].min().date()} to {modelling_df['date'].max().date()}")

print(f"Observations: {len(modelling_df)}")

print(f"\nMissing values per column:")

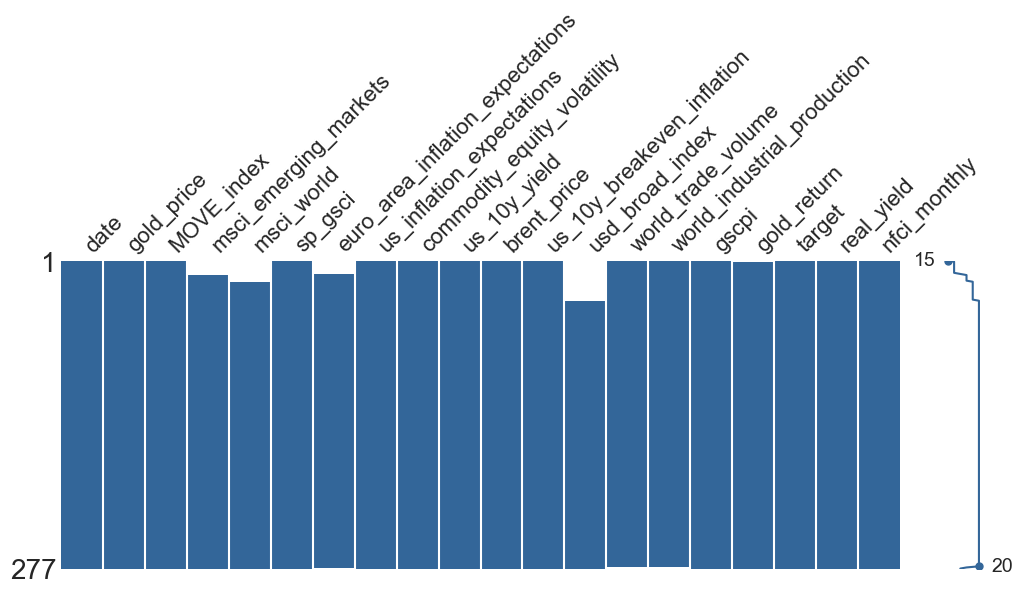

print(modelling_df.isnull().sum()[modelling_df.isnull().sum() > 0])Time coverage: 2003-01-01 to 2026-01-01

Observations: 277

Missing values per column:

msci_emerging_markets 13

msci_world 19

euro_area_inflation_expectations 13

usd_broad_index 36

world_trade_volume 2

world_industrial_production 2

gold_return 1Coverage:

There are 277 monthly observations from January 2003 to January 2026, covering several distinct macro regimes:the 2008 Global Financial Crisis, the 2011 Eurozone debt crisis, the 2020 COVID shock, and the 2022–2023 inflation surge. We drop the final row of the raw dataset because, by construction, the target is the next-month gold return; for the most recent month in the sample, that future return has not yet been observed, so the target is missing and the row cannot be used for supervised learning.

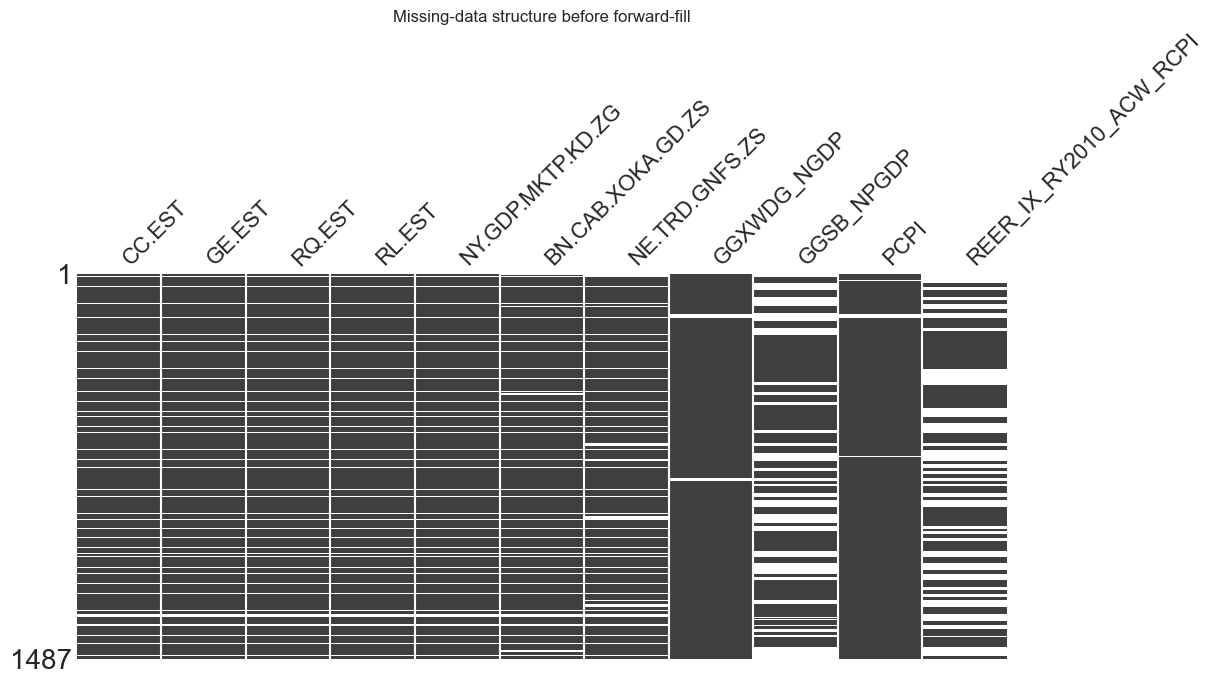

Handling structural missingness and feature selection

msno.matrix(modelling_df, figsize=(12, 4), color=(0.2, 0.4, 0.6))

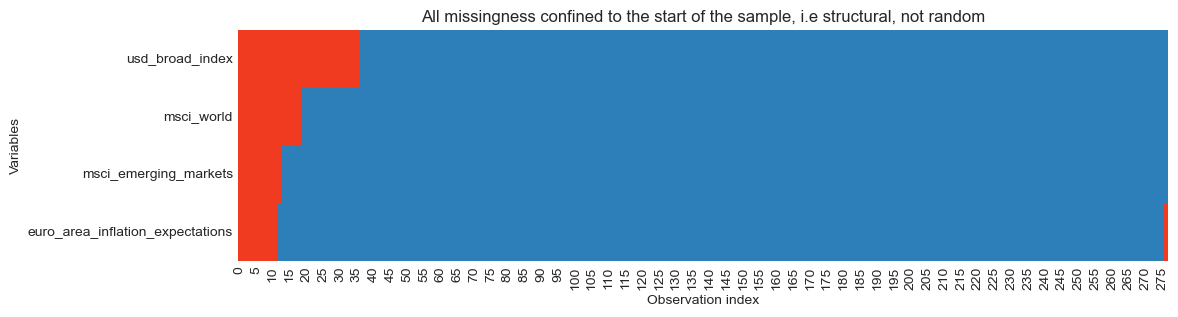

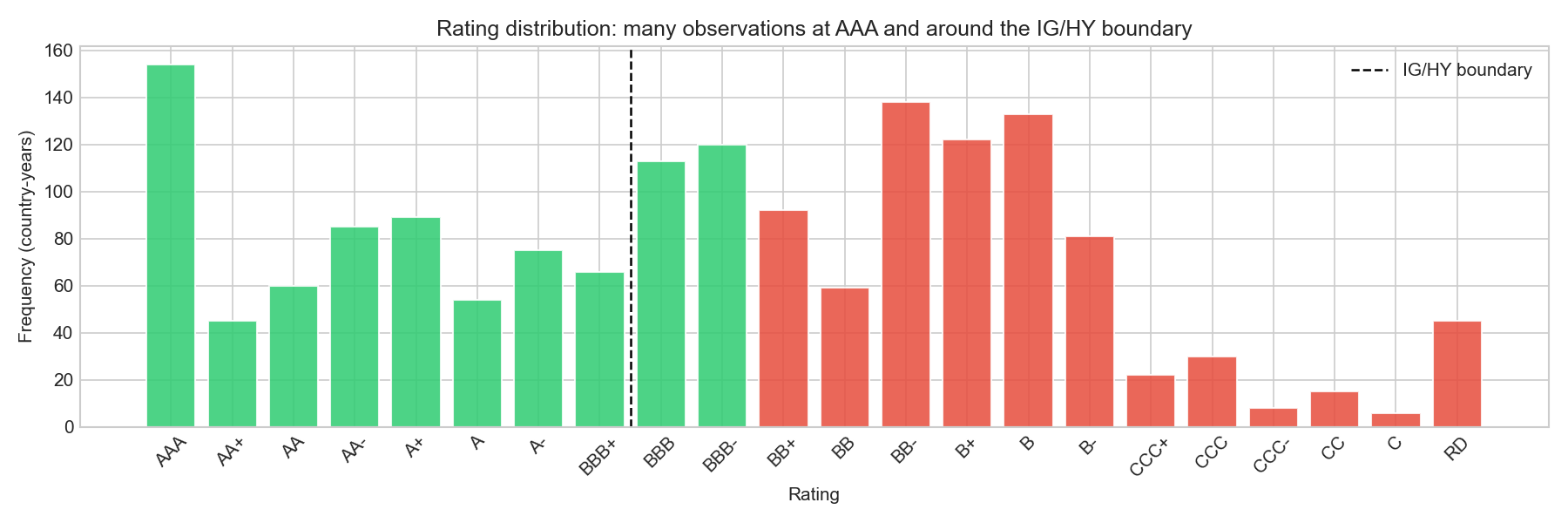

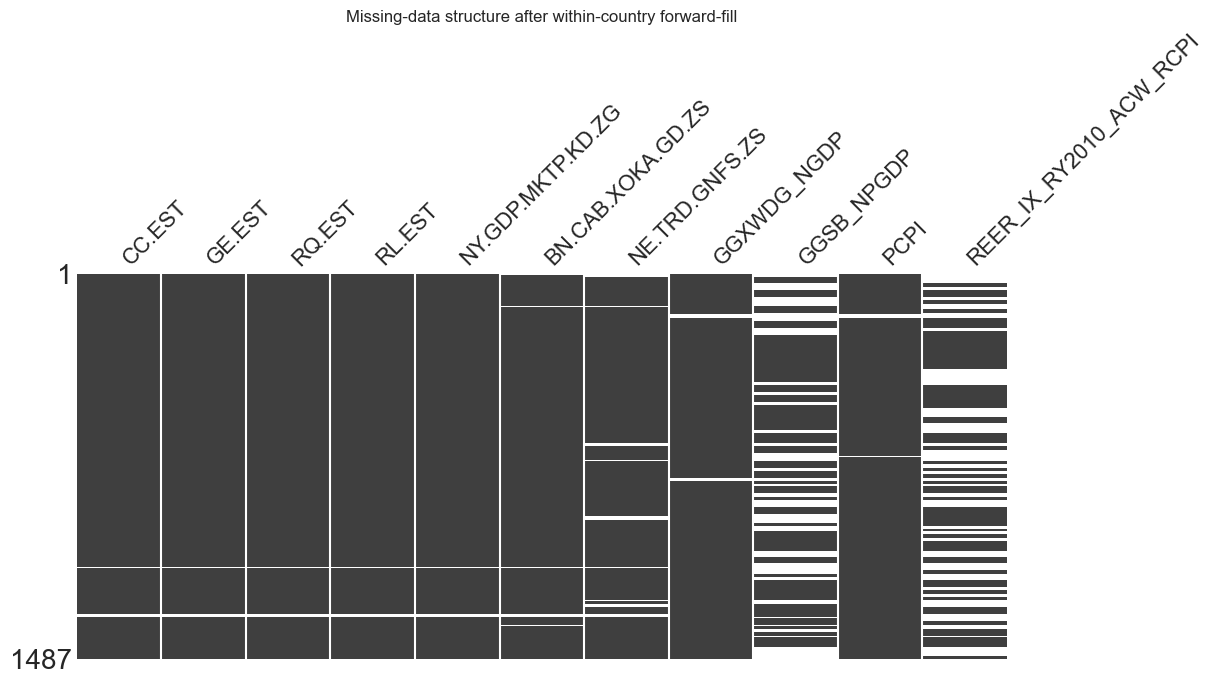

Several predictors contain missing values concentrated at the beginning of the sample. This pattern reflects data availability rather than stochastic missingness: some macro-financial series (such as the trade-weighted USD Broad Index or MSCI coverage) began being systematically recorded only after the early 2000s.

When missing values arise because a variable did not yet exist, the missingness is structural rather than stochastic. In such cases, imputing values would amount to fabricating observations that were never recorded, which can distort statistical relationships and artificially inflate the effective sample size. For this reason, imputation is not appropriate here.

The missingness pattern is easy to visualise:

missing_cols = [

"usd_broad_index",

"msci_world",

"msci_emerging_markets",

"euro_area_inflation_expectations"

]

plt.figure(figsize=(12, 3))

sns.heatmap(

modelling_df[missing_cols].isna().T,

cmap=["#2c7fb8", "#f03b20"],

cbar=False

)

plt.title("All missingness confined to the start of the sample, i.e structural, not random")

plt.xlabel("Observation index")

plt.ylabel("Variables")

plt.show()

The heatmap confirms that missing observations occur only at the start of the dataset, confirming that this is a series start-date issue rather than random missing data. The missingness is best interpreted as MAR (Missing At Random) conditional on time (the probability of missingness depends on observation date, not on the gold return outcome).

Sample cost of including the USD index

Including predictors with missing values reduces the effective training sample, because rows containing missing predictors are removed during model fitting. To make this trade-off explicit, we compare the number of training observations available with and without the usd_broad_index variable (i.e the variable with the most missing values).

# 80/20 chronological split

split_idx = int(len(modelling_df) * 0.8)

train = modelling_df.iloc[:split_idx].copy()

test = modelling_df.iloc[split_idx:].copy()

features_with_usd = ['real_yield', 'usd_broad_index', 'nfci_monthly', 'MOVE_index', 'gscpi']

features_without_usd = ['real_yield', 'nfci_monthly', 'MOVE_index', 'gscpi']

n_with = train[features_with_usd + ['target']].dropna().shape[0]

n_without = train[features_without_usd + ['target']].dropna().shape[0]

print(f"Training obs WITH usd_broad_index: {n_with} (dropped {len(train) - n_with})")

print(f"Training obs WITHOUT usd_broad_index: {n_without} (dropped {len(train) - n_without})")Training obs WITH usd_broad_index: 185 (dropped 36)

Training obs WITHOUT usd_broad_index: 221 (dropped 0)Including usd_broad_index removes the first 36 months of observations (2003–2005), reducing the effective training sample by about 16%.

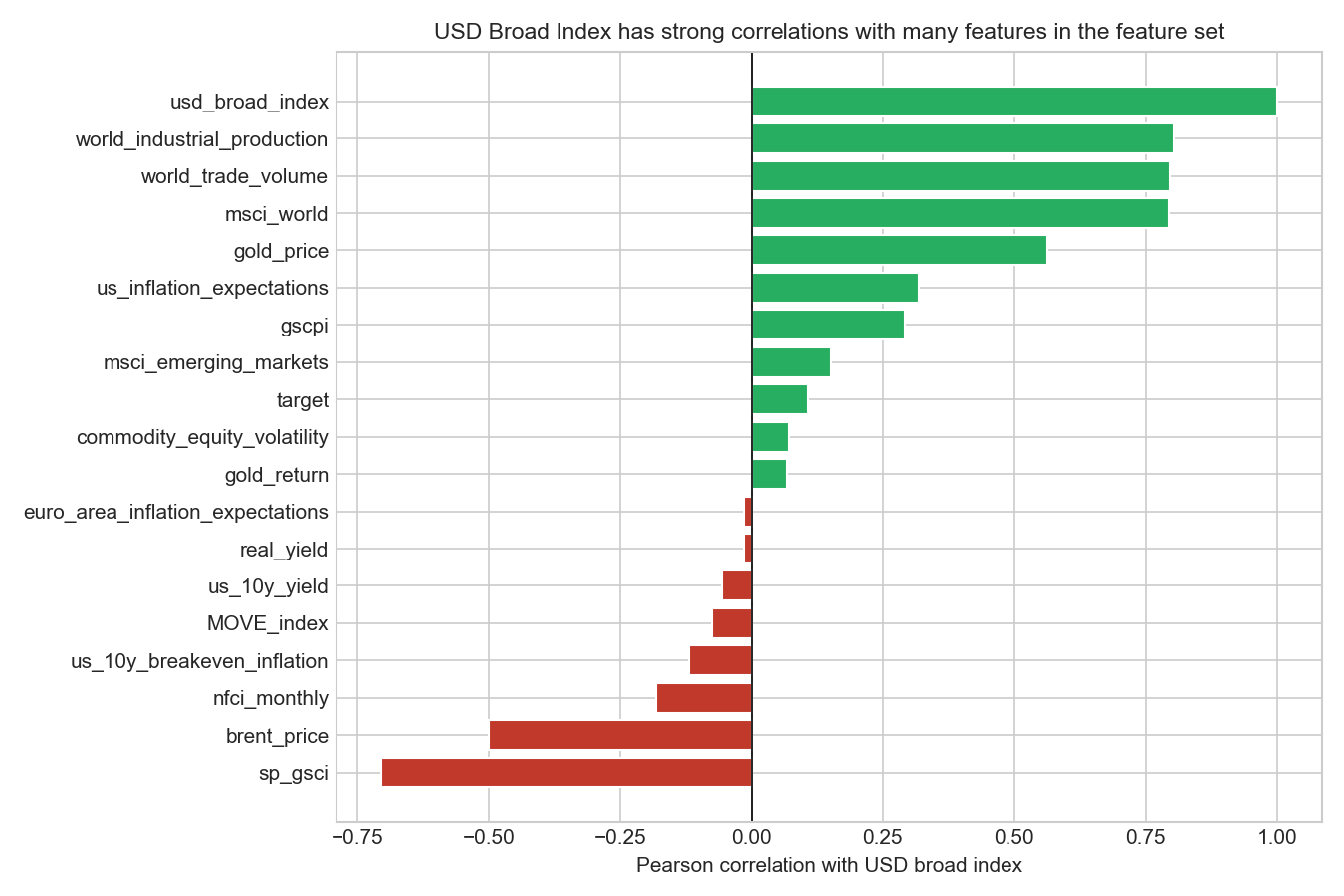

Does the USD index add distinct information?

corr_usd = modelling_df.corr(numeric_only=True)['usd_broad_index'].sort_values()

fig, ax = plt.subplots(figsize=(9, 6))

colors = ['#c0392b' if c < 0 else '#27ae60' for c in corr_usd]

ax.barh(corr_usd.index, corr_usd.values, color=colors, edgecolor='white')

ax.axvline(0, color='black', linewidth=0.8)

ax.set_title('USD Broad Index has strong correlations with many features in the feature set',

fontsize=11)

ax.set_xlabel('Pearson correlation with USD broad index')

plt.tight_layout()

plt.savefig('w08-correlations-usd.png', dpi=150)

plt.show()

Before deciding whether to keep the variable, we examine whether the USD broad index contains information that is distinct from the rest of the feature set. Pairwise correlations show that the USD index is strongly related to several other predictors, especially msci_world, world_trade_volume, world_industrial_production, and sp_gsci, indicating substantial overlap with broader global macro-financial conditions. At the same time, its correlation with variables such as real_yield and nfci_monthly is relatively modest, so it is not simply a duplicate of any single predictor. Overall, the evidence suggests that the USD index may add some information, but much of its variation is already reflected elsewhere in the dataset. Given that retaining it would remove around 16% of available observations, we prioritise sample size and historical coverage over the potentially limited incremental signal from this variable.

Final feature decision

In this analysis we choose to exclude usd_broad_index from the baseline model.

Although the variable is theoretically relevant — gold is priced in USD and often moves inversely to the dollar —, the additional information it may provide is likely incremental rather than essential, while the sample loss it introduces is substantial.

Preserving the longer sample is particularly valuable in a time-series context, where the number of observations is limited and different macro-financial regimes may appear over time. The early-2000s period (2003–2006) corresponds to the pre-crisis expansion preceding the 2007–2008 financial turmoil, and retaining these observations allows the model to be estimated across a broader range of macroeconomic conditions.

This illustrates a common modelling trade-off in applied work: balancing theoretical completeness against sample size and data consistency. When predictors introduce structural missingness concentrated at the start of the sample, it is often preferable to prioritise the longest clean dataset unless the variable is essential for the research question.

1.4 Data exploration

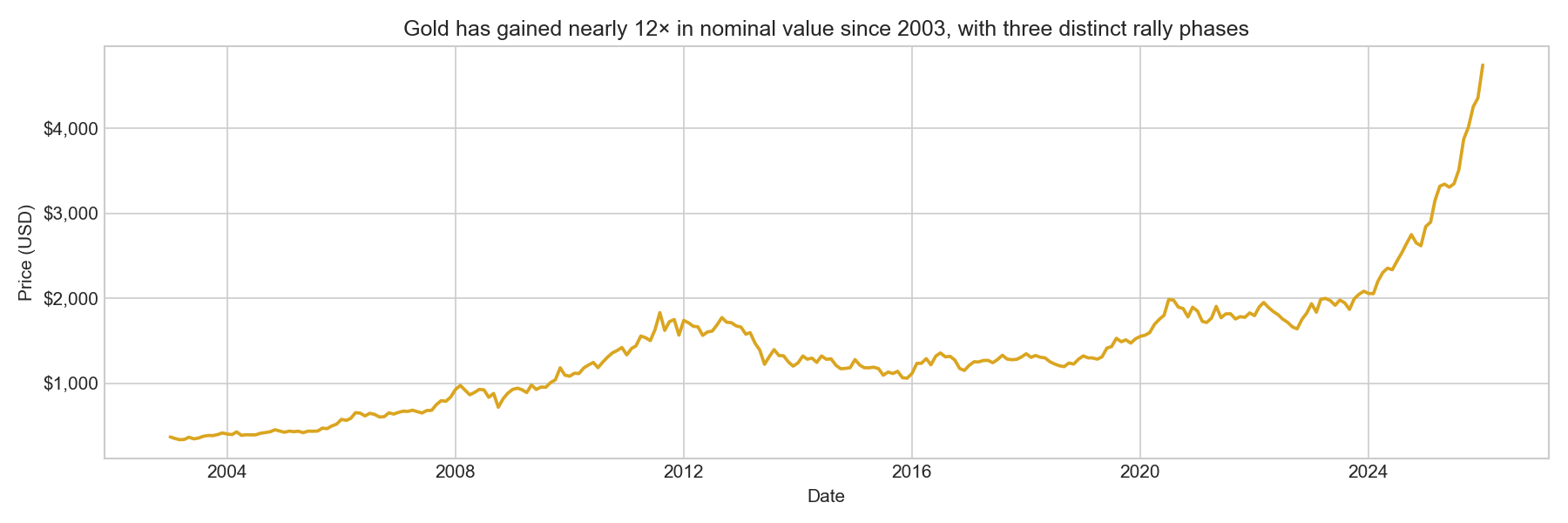

Plot 1: Gold prices over time

fig, ax = plt.subplots(figsize=(12, 4))

ax.plot(modelling_df['date'], modelling_df['gold_price'], color='goldenrod', linewidth=1.8)

ax.set_title('Gold has gained nearly 12× in nominal value since 2003, with three distinct rally phases',

fontsize=12)

ax.set_xlabel('Date')

ax.set_ylabel('Price (USD)')

ax.yaxis.set_major_formatter(mticker.FuncFormatter(lambda x, _: f'${x:,.0f}'))

plt.tight_layout()

plt.savefig('w08-gold-price.png', dpi=150)

plt.show()

Gold has appreciated from roughly $370 in early 2003 to above $4,500 by early 2026, a nearly twelve-fold increase in nominal terms. Three major upswings are visible.

The 2008–2012 safe-haven rally was driven by the global financial crisis and its aftermath: quantitative easing, real yields turning deeply negative, and widespread loss of confidence in fiat currency. The 2018–2022 rally began with trade war anxiety and the Fed’s pivot toward easing, accelerated through the 2020 pandemic shock (when unprecedented monetary stimulus drove real yields to historic lows), and culminated in the 2022 inflation surge. The 2023–2026 surge is the most dramatic in the sample, driven by central bank gold-buying (especially from China and the Middle East), geopolitical risk following Russia’s invasion of Ukraine, and renewed concerns about reserve currency diversification.

This long-run trend reminds us that, while we are modelling returns (which are approximately stationary), the level series is clearly non-stationary. Working in return space is the right choice and avoids spurious regression.

Plot 2: Monthly gold returns

fig, ax = plt.subplots(figsize=(12, 4))

ax.bar(

modelling_df['date'],

modelling_df['gold_return'],

color=['#c0392b' if r < 0 else '#27ae60' for r in modelling_df['gold_return']],

width=20,

alpha=0.8

)

ax.axhline(0, color='black', linewidth=0.8, linestyle='--')

ax.set_title('Monthly returns are approximately mean-zero with volatility clustering around crisis periods',

fontsize=11)

ax.set_xlabel('Date')

ax.set_ylabel('Return (%)')

plt.tight_layout()

plt.savefig('w08-gold-returns.png', dpi=150)

plt.show()

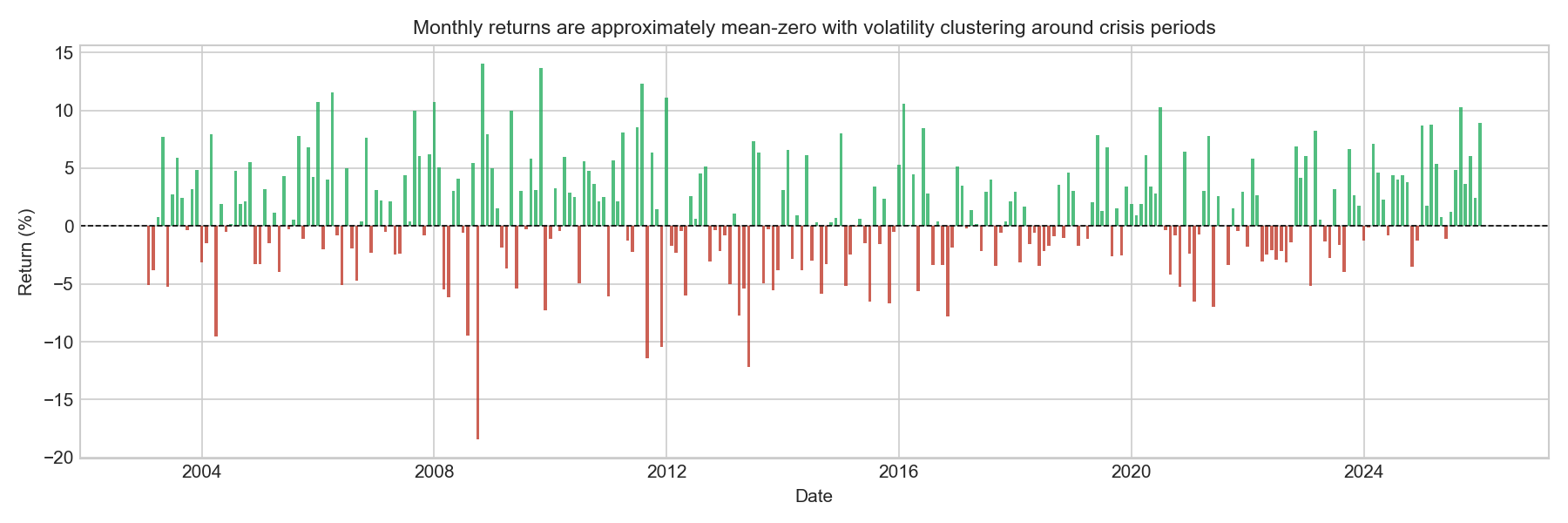

Monthly gold returns fluctuate around zero, with no clear long-run trend in the mean. This behaviour is typical of financial return series: while asset prices may trend over time, returns themselves are usually approximately mean-zero.

The series also exhibits clear volatility clustering, where large movements (both positive and negative) tend to occur close together, followed by periods of relatively small fluctuations. This pattern is a well-known stylised fact of financial markets and reflects the tendency of financial volatility to occur in bursts rather than being evenly distributed through time.

Several episodes of heightened activity are visible. In particular, the 2008–2009 global financial crisis and the 2011–2012 period surrounding the Eurozone sovereign-debt crisis and the peak of the gold market show sequences of unusually large monthly gains and losses. These clusters suggest that market turbulence tends to occur in bursts associated with major macro-financial events, rather than being evenly distributed through time. This has a direct implication for modelling: a linear regression trained on pooled data will estimate average relationships across different volatility regimes and will systematically underestimate the magnitude of returns during the most turbulent periods.

Plot 3: Rolling volatility

To examine how the variability of returns evolves over time, we compute a 12-month rolling standard deviation of monthly returns.

rolling_vol = modelling_df['gold_return'].rolling(12).std()

fig, ax = plt.subplots(figsize=(12, 4))

ax.plot(modelling_df['date'], rolling_vol, linewidth=2)

# Major crises

for start, end in [

('2008-09-01', '2009-07-01'),

('2011-08-01', '2012-10-01'),

('2020-03-01', '2021-06-01')

]:

ax.axvspan(pd.Timestamp(start), pd.Timestamp(end), alpha=0.18, color='#F4C430')

# Secondary volatility episodes

secondary_periods = {

('2005-10-01', '2007-02-01'): 'Commodity boom',

('2012-12-01', '2013-07-01'): 'Gold crash',

('2015-10-01', '2016-05-01'): 'China slowdown',

('2018-03-01', '2019-10-01'): 'Trade tensions'

}

for (start, end), label in secondary_periods.items():

ax.axvspan(pd.Timestamp(start), pd.Timestamp(end), alpha=0.04, color='steelblue')

ax.text(pd.Timestamp(start), 0.6, label, fontsize=8, alpha=0.9)

ymax = rolling_vol.max()

ax.annotate("Global Financial Crisis",

xy=(pd.Timestamp('2009-02-01'), ymax * 0.95),

xytext=(pd.Timestamp('2006-06-01'), ymax * 1.05),

arrowprops=dict(arrowstyle="->"), fontsize=9)

ax.annotate("Eurozone crisis / gold peak",

xy=(pd.Timestamp('2012-01-01'), ymax * 0.82),

xytext=(pd.Timestamp('2014-01-01'), ymax * 1.05),

arrowprops=dict(arrowstyle="->"), fontsize=9)

ax.annotate("COVID market shock",

xy=(pd.Timestamp('2021-02-01'), ymax * 0.55),

xytext=(pd.Timestamp('2017-06-01'), ymax * 0.9),

arrowprops=dict(arrowstyle="->"), fontsize=9)

ax.set_ylim(0, ymax * 1.15)

ax.set_title("Gold return volatility spikes during major financial shocks: three distinct crisis regimes visible",

fontsize=11)

ax.set_xlabel("Date")

ax.set_ylabel("12-Month Rolling Std. Dev. of Returns")

ax.grid(axis='y', alpha=0.3)

plt.tight_layout()

plt.savefig('w08-rolling-vol.png', dpi=150)

plt.show()

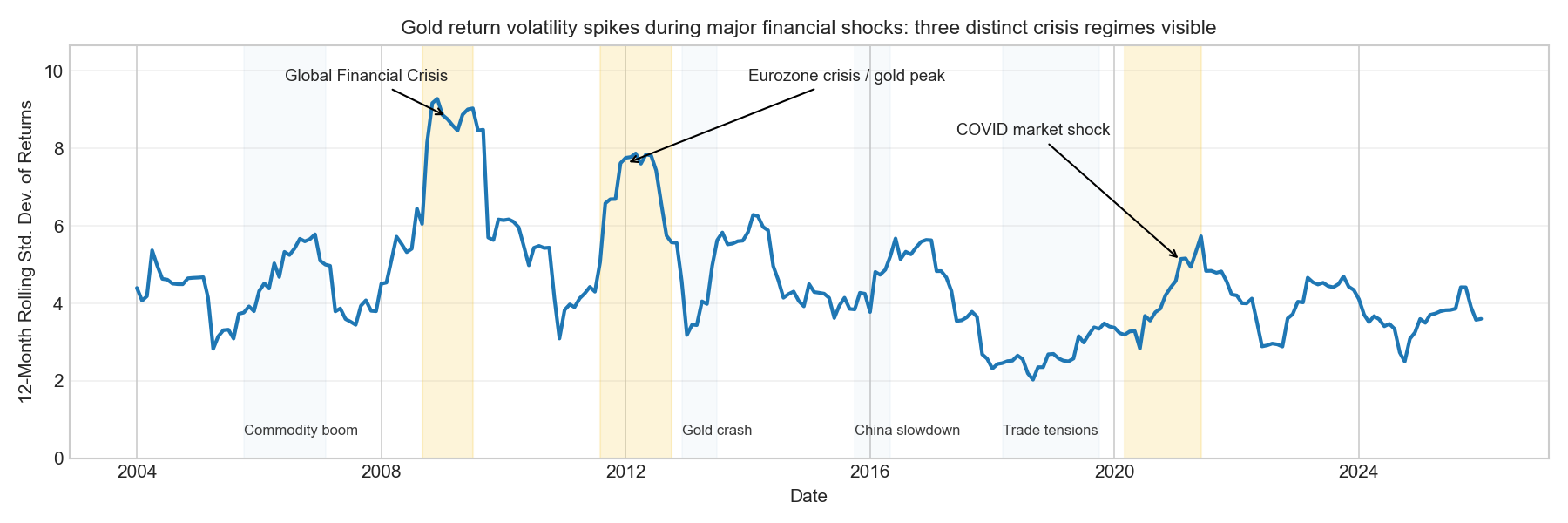

The rolling-volatility plot confirms that the return series is characterised by volatility regimes rather than a steady long-term trend.

Volatility rises sharply during the 2008–2009 global financial crisis, reaching its highest levels in the sample. A second pronounced spike occurs during the 2011–2012 Eurozone sovereign-debt crisis, which coincides with the peak of the gold market.

The plot also highlights several shorter episodes of elevated volatility linked to macro-financial developments, including the mid-2000s commodity boom, the 2013 gold market crash (triggered by the Fed’s “taper tantrum”), the 2015–2016 China growth slowdown, and the 2018–2019 US–China trade tensions. These periods illustrate that gold volatility often increases during times of global economic uncertainty.

After around 2014, volatility becomes more moderate, though temporary spikes still occur, most notably during the 2020 COVID-19 market shock.

This regime structure has a direct modelling implication: a linear regression trained on pooled data is estimating an average relationship across very different volatility environments, and the model’s errors will be disproportionately large during the volatile crisis periods i.e precisely the months that are most economically interesting. We will verify this explicitly when examining residuals in Section 1.5.3, where crisis-period residuals are expected to be visibly larger than those in calm periods.

Plot 4: Months with the largest absolute returns

top5 = modelling_df.nlargest(5, 'gold_return')[['date', 'gold_return']].copy()

bot5 = modelling_df.nsmallest(5, 'gold_return')[['date', 'gold_return']].copy()

extreme = pd.concat([top5, bot5]).sort_values('gold_return', ascending=False)

print(extreme.to_string(index=False)) date gold_return

2008-11-01 14.035088

2009-11-01 13.638985

2011-08-01 12.291564

2006-04-01 11.556162

2012-01-01 11.079908

2004-04-01 -9.526033

2011-12-01 -10.483917

2011-09-01 -11.432003

2013-06-01 -12.153625

2008-10-01 -18.460490| Date | Return (%) | Economic Context |

|---|---|---|

| Nov 2008 | +14.0 | Post-Lehman safe-haven surge: global credit markets frozen; investors fled to gold as systemic distrust of financial institutions peaked |

| Nov 2009 | +13.6 | QE-era rally: the Fed’s first round of quantitative easing drove real yields deeply negative and fuelled fears of dollar debasement |

| Aug 2011 | +12.3 | US debt ceiling standoff and S&P’s historic downgrade of US sovereign debt triggered a flight to gold as the ultimate safe-haven asset |

| Apr 2006 | +11.6 | Dollar weakness and rising commodity cycle; gold briefly broke through $600 for the first time since 1980 |

| Jan 2012 | +11.1 | European sovereign debt crisis peak (Greece PSI negotiations), ECB LTRO announcement; real rates negative, investors sought inflation protection |

| Jun 2013 | −12.2 | “Taper tantrum”: Bernanke’s suggestion that the Fed might taper QE sent real yields surging, eliminating the key driver of gold’s post-2008 rally |

| Sep 2011 | −11.4 | Violent reversal after August’s peak; profit-taking and margin calls following gold’s near-$1,900 high; CME raised margin requirements |

| Dec 2011 | −10.5 | Dollar strengthening on Eurozone crisis contagion fears; year-end deleveraging and tax-loss harvesting |

| Oct 2008 | −18.5 | The single worst month in our sample: immediate post-Lehman liquidation panic. Gold was sold aggressively for cash as institutions faced margin calls — a temporary but violent reversal of its safe-haven role |

| Apr 2004 | −9.5 | Unexpectedly strong US non-farm payrolls raised rate hike expectations; dollar strengthened, gold sold off |

These extreme observations reinforce the patterns visible in the volatility analysis. Most of the largest return movements occur during the 2008–2012 crisis period, when financial stress, central-bank interventions, and sharp shifts in real interest rates generated unusually turbulent market conditions.

Two mechanisms appear repeatedly. Safe-haven demand during systemic stress produces large positive gold returns. Rising real interest rates or liquidity shocks can trigger sharp sell-offs.

The most extreme observation — October 2008 (−18.5%) — highlights an important nuance: although gold is widely viewed as a safe-haven asset, it can still experience severe losses during acute liquidity crises when investors sell assets indiscriminately to raise cash.

These episodes emphasise the regime-dependent nature of gold returns, suggesting that different macroeconomic mechanisms may dominate in different periods, which is a fundamental challenge for any pooled linear model. Importantly, the predictors in our dataset (real yields, financial conditions, MOVE index) are precisely the variables that spiked or collapsed during these episodes. This motivates their inclusion in Section 1.5.2, and their appearance in the residuals during these months (large spikes in Section 1.5.3) is expected rather than a model failure.

Plot 5: Correlations with gold returns

Before constructing predictive models, we examine simple pairwise correlations between each predictor and next-month gold returns. This step is exploratory: correlations do not establish causality, but they provide a quick indication of which variables may contain useful signal.

all_predictors = ['real_yield', 'usd_broad_index', 'nfci_monthly', 'MOVE_index', 'gscpi',

'msci_world', 'msci_emerging_markets', 'brent_price',

'us_10y_breakeven_inflation', 'us_10y_yield',

'commodity_equity_volatility', 'us_inflation_expectations',

'euro_area_inflation_expectations', 'world_trade_volume',

'world_industrial_production', 'sp_gsci']

corr_all = modelling_df[['target'] + all_predictors].corr()['target'].drop('target').sort_values()

fig, ax = plt.subplots(figsize=(9, 6))

colors = ['#c0392b' if c < 0 else '#27ae60' for c in corr_all]

ax.barh(corr_all.index, corr_all.values, color=colors, edgecolor='white')

ax.axvline(0, color='black', linewidth=0.8)

ax.set_title('All macro predictors have weak bivariate correlations (|r| ≤ 0.22): no single variable dominates',

fontsize=11)

ax.set_xlabel('Pearson Correlation')

plt.tight_layout()

plt.savefig('w08-correlations.png', dpi=150)

plt.show()

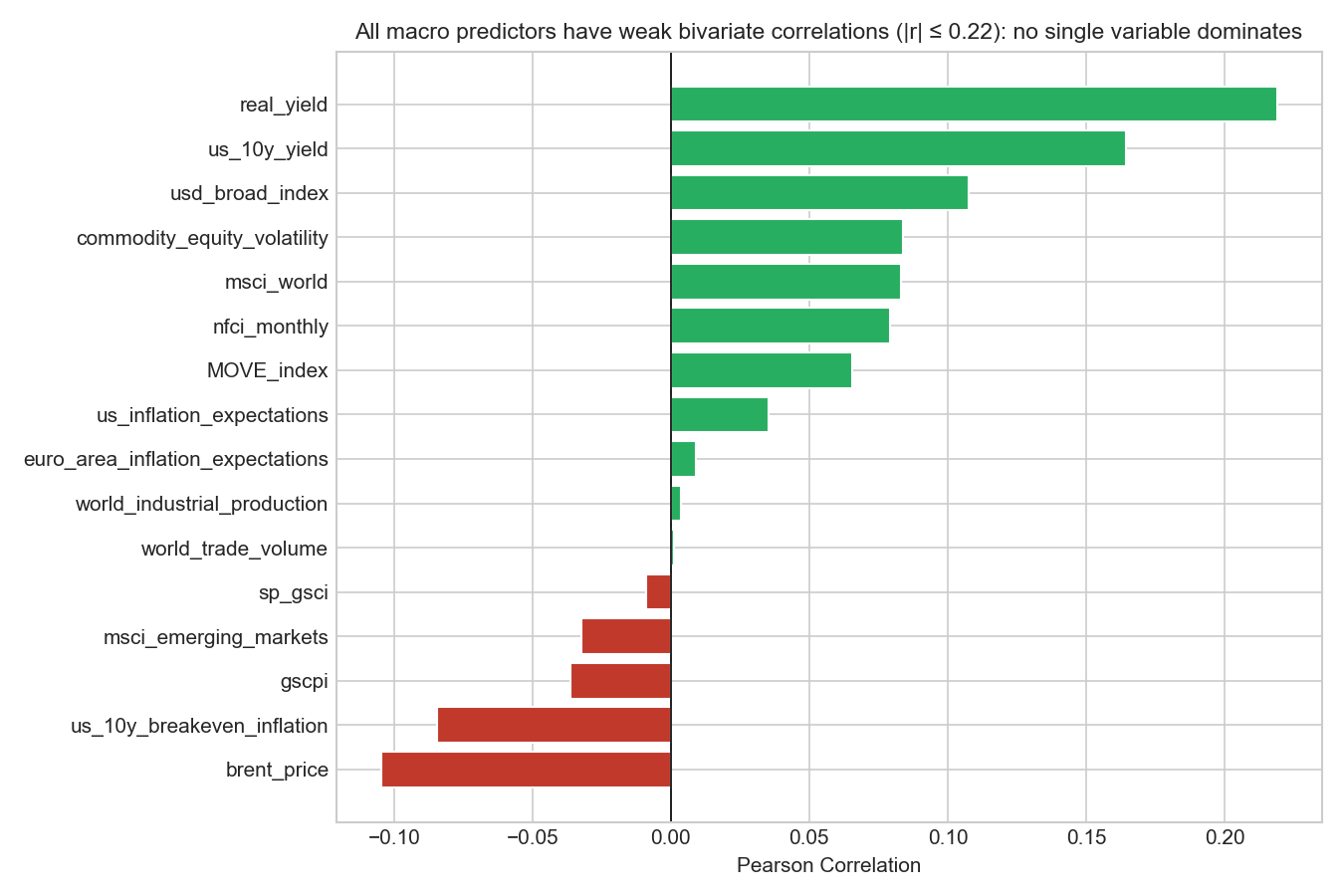

Overall strength of correlations

The first observation is that all correlations are relatively small, with absolute values below about 0.22. The strongest relationship is between real_yield and gold returns (r ≈ 0.22), followed by us_10y_yield (r ≈ 0.16) and usd_broad_index (r ≈ 0.11).

These modest values reinforce a key feature of financial markets: short-horizon asset returns are extremely noisy. Most month-to-month variation in gold returns cannot be explained by a single macroeconomic variable.

The real yield relationship

The strongest correlation is with real yields (r ≈ 0.22). Economic theory typically predicts a negative relationship between real interest rates and gold prices, because gold does not pay interest. When real yields rise, investors can earn higher inflation-adjusted returns on bonds, making gold relatively less attractive. However, the positive correlation observed here likely reflects specific macroeconomic episodes in the sample. During 2019–2020, gold prices rose strongly even as real yields increased due to large fiscal stimulus and financial uncertainty. During some tightening cycles, rising interest rates coincided with broader commodity price rallies or currency movements that supported gold. These examples illustrate an important statistical point: bivariate correlations can mask more complex relationships that depend on the broader macroeconomic environment. The sign of the coefficient in a multivariate model may differ from the bivariate correlation.

Financial stress indicators

Variables measuring financial stress show small but positive correlations with gold returns: nfci_monthly (r ≈ 0.08) and MOVE_index (r ≈ 0.06). Although these relationships are weak, they are consistent with gold’s reputation as a partial safe-haven asset. When financial conditions tighten or market volatility rises, investors sometimes shift toward assets perceived as stores of value, including gold. However, the correlations are small because this relationship does not hold in all situations. In severe liquidity crises such as October 2008, gold can actually fall as investors sell assets to raise cash.

Commodity and inflation variables

Some variables show small negative correlations with gold returns, such as brent_price (r ≈ −0.10) and us_10y_breakeven_inflation (r ≈ −0.08). These values are small enough that they should not be interpreted as strong economic relationships. Instead, they likely reflect sample-specific dynamics, such as periods when oil prices rose due to supply shocks while gold prices moved differently. The key takeaway is that inflation-related variables do not appear to provide strong short-horizon predictive power for gold returns in this dataset, despite the common narrative that gold acts as an inflation hedge.

Checking for multicollinearity among predictors

Before estimating models, we examine whether some predictors are strongly correlated with each other, which can lead to multicollinearity, inflated coefficient variance and unstable estimates.

predictors_for_corr = ['real_yield', 'nfci_monthly', 'MOVE_index', 'gscpi',

'usd_broad_index', 'us_10y_yield', 'msci_world',

'brent_price', 'us_10y_breakeven_inflation',

'commodity_equity_volatility', 'us_inflation_expectations',

'world_trade_volume', 'world_industrial_production', 'sp_gsci']

corr_matrix = modelling_df[predictors_for_corr].corr()

mask = np.triu(np.ones_like(corr_matrix, dtype=bool))

fig, ax = plt.subplots(figsize=(10, 8))

sns.heatmap(

corr_matrix, mask=mask, cmap="coolwarm",

vmin=-1, vmax=1, center=0,

annot=True, fmt=".2f", linewidths=0.5,

cbar_kws={"shrink": .8}, ax=ax

)

ax.set_title("Several clusters of correlated predictors, including near-perfect collinearity in activity indices")

plt.tight_layout()

plt.savefig('w08-predictor-corr-matrix.png', dpi=150)

plt.show()

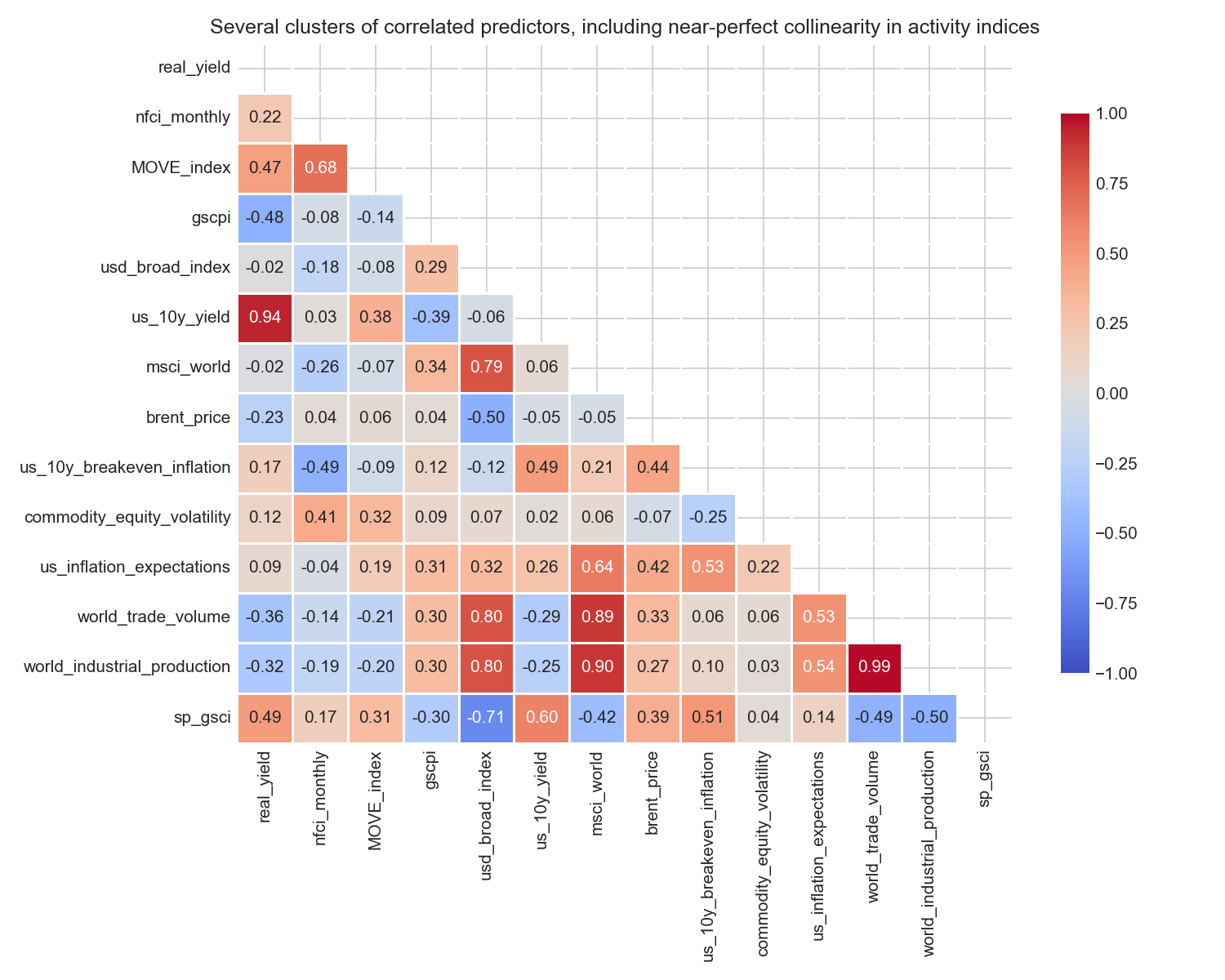

The heatmap reveals several important clusters.

Interest-rate variables: real_yield and us_10y_yield are very strongly correlated (r ≈ 0.94). This is expected: real yield is derived directly from nominal yield by subtracting breakeven inflation. Including both would be severely redundant. We retain real_yield as the economically more meaningful measure.

Financial stress: MOVE_index and nfci_monthly are substantially correlated (r ≈ 0.75). Both measure aspects of financial market stress; NFCI is the more comprehensive composite. The moderate (not extreme) correlation means we can include both without severe multicollinearity, as confirmed by the VIF analysis below.

Global economic activity: world_trade_volume and world_industrial_production are almost perfectly correlated (r ≈ 0.99); they are measuring the same global business cycle. Including both would be completely redundant. MSCI World is also highly correlated with both (r ≈ 0.90), confirming they all capture the same underlying growth signal.

Commodity cycles: brent_price and sp_gsci show a moderate positive correlation (~0.39), both reflecting commodity price movements. Neither shows strong correlation with the target.

These clusters directly motivate our parsimonious feature set: we select at most one variable from each strongly correlated group, prioritising theoretical relevance and coverage of distinct economic channels.

Summary of the correlation analysis

The correlation analysis highlights three important points:

- Predictive relationships are weak. No single macroeconomic indicator strongly predicts next-month gold returns.

- Some economic signals exist. Real yields, financial conditions, and currency movements show modest relationships with gold returns.

- Relationships are context-dependent. The sign and strength of correlations may change across different macroeconomic regimes.

For these reasons, correlations should be viewed as exploratory diagnostics rather than definitive evidence of predictive relationships. Variables with weak pairwise correlations may still contribute useful information when combined in a multivariate model, particularly when they capture different dimensions of the macroeconomic environment. The correlation analysis also serves as the first filter in our feature selection workflow in Section 1.5.2: predictors with very weak bivariate correlations (|r| < 0.05) and no compelling theoretical rationale will be dropped there as part of the iterative VIF-based pruning process.

Plot 6: Multi-variable visualisation

plot_df = modelling_df[['date', 'target', 'real_yield', 'usd_broad_index', 'nfci_monthly']].dropna()

fig, axes = plt.subplots(1, 2, figsize=(14, 5))

sc = axes[0].scatter(plot_df['real_yield'], plot_df['target'],

c=plot_df['usd_broad_index'], cmap='RdYlGn_r',

alpha=0.7, edgecolors='grey', linewidths=0.3, s=40)

plt.colorbar(sc, ax=axes[0], label='USD Broad Index')

axes[0].axhline(0, color='black', linewidth=0.7, linestyle='--')

axes[0].axvline(0, color='black', linewidth=0.7, linestyle='--')

axes[0].set_xlabel('Real Yield (10Y Nominal − Breakeven, %)')

axes[0].set_ylabel('Next-Month Gold Return (%)')

axes[0].set_title('Negative real yields associate with positive gold returns;\nbut the relationship is noisy and regime-dependent',

fontsize=9)

sc2 = axes[1].scatter(plot_df['nfci_monthly'], plot_df['target'],

c=plot_df['real_yield'], cmap='coolwarm',

alpha=0.7, edgecolors='grey', linewidths=0.3, s=40)

plt.colorbar(sc2, ax=axes[1], label='Real Yield (%)')

axes[1].axhline(0, color='black', linewidth=0.7, linestyle='--')

axes[1].set_xlabel('NFCI (monthly mean)')

axes[1].set_ylabel('Next-Month Gold Return (%)')

axes[1].set_title('Largest gold rallies occur when financial conditions are tight\nand real rates are low (blue dots)',

fontsize=9)

plt.tight_layout()

plt.savefig('w08-gold-multivariable-plot.png', dpi=150)

plt.show()

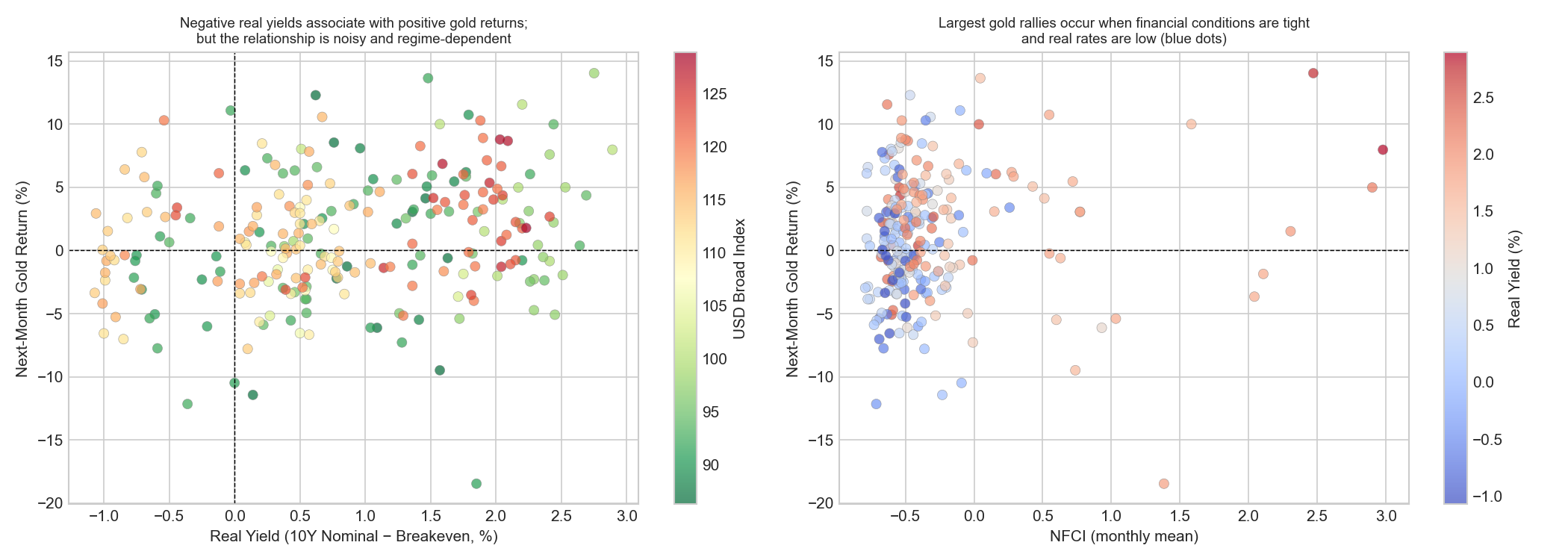

These plots extend the earlier correlation analysis by examining how gold returns behave when multiple macro variables interact simultaneously.

Real yields, the US dollar, and gold returns

The left panel plots real yields against next-month gold returns, with the colour of each point representing the strength of the US dollar.

The scatter shows substantial dispersion, confirming that the relationship between real yields and gold returns is weak. Even when real yields are very low or negative, gold returns can vary widely. This reinforces the conclusion from the correlation analysis that no single macro variable provides strong predictive power for monthly gold returns.

The colour scale reveals that movements in gold returns often coincide with changes in the US dollar. Many observations with positive gold returns are associated with lighter green colours, indicating periods when the dollar was relatively weak. This suggests that some of the apparent relationship between real yields and gold returns may actually reflect interactions between interest rates and currency movements, a reminder that, when multiple forces operate simultaneously, simple pairwise correlations can give a misleading picture.

There is also a visible concentration of observations in the upper-left quadrant (negative real yields and positive gold returns). This pattern is consistent with the theoretical view that gold performs well when real interest rates are low or negative, because the opportunity cost of holding a non-interest-bearing asset decreases.

Financial conditions and safe-haven behaviour

The right panel shows the relationship between financial conditions (nfci_monthly) and gold returns, while the colour scale now represents real yields.

Most observations cluster around moderate financial conditions and near-zero returns. However, some of the largest positive gold returns appear when financial conditions tighten sharply. The colour scale provides additional insight: many of the strongest positive gold returns occur when financial conditions are tight and real yields are relatively low (blue-coloured points). This combination is typical of periods of financial stress, when investors seek assets perceived as safe stores of value.

Linking the multivariate view to the earlier diagnostics

Taken together, these plots help reconcile several findings from the previous analysis. Gold returns are influenced by multiple macroeconomic forces simultaneously: interest rates, currency movements, financial stress, and commodity market dynamics. When these factors interact, simple pairwise statistics may not capture the full structure of the relationships. This observation reinforces an important point for the modelling stage: predictive relationships are likely to be multivariate and regime-dependent, rather than driven by a single macroeconomic variable. It also foreshadows why the LightGBM model in Section 1.5.4, despite its flexibility to capture interactions, does not substantially outperform the linear baseline. The joint non-linear structure visible in these plots is real, but not consistent enough across time windows to be reliably exploited with 221 training observations.

Implications for modelling

These observations highlight an important challenge for predictive modelling.

Monthly gold returns are noisy and centred around zero, meaning that most of the variation in the series is difficult to explain using simple linear relationships. The volatility analysis reveals that market behaviour changes across distinct macro-financial regimes, with periods of heightened turbulence associated with major economic shocks and policy uncertainty.

The correlation diagnostics further suggest that, while several macro-financial variables contain modest predictive signal, many predictors are also correlated with each other, reflecting overlapping economic information.

Taken together, these findings imply that predictive performance may be limited and that modelling results should be interpreted cautiously. Nevertheless, macro-financial indicators may still provide partial information about the economic environment in which gold returns are generated. The modelling exercise that follows should therefore be viewed as an exploratory attempt to extract any systematic signal from these macro indicators, rather than an expectation that gold returns can be forecast with high precision.

1.5 Modelling

1.5.1 Train/test split

# Ensure chronological ordering

modelling_df = modelling_df.sort_values('date').reset_index(drop=True)

# Temporal 80/20 split

split_idx = int(len(modelling_df) * 0.8)

train = modelling_df.iloc[:split_idx].copy()

test = modelling_df.iloc[split_idx:].copy()

print(f"Training: {train['date'].min().date()} to {train['date'].max().date()} ({len(train)} obs)")

print(f"Test: {test['date'].min().date()} to {test['date'].max().date()} ({len(test)} obs)")Training: 2003-01-01 to 2021-05-01 (221 obs)

Test: 2021-06-01 to 2026-01-01 (56 obs)Justification

Because the dataset is a time series, the training and test sets must respect the chronological order of observations. Random cross-validation would mix past and future observations, allowing the model to learn from information that would not have been available at the time predictions were made, a classic form of data leakage.

We therefore use a strict temporal split, training the model on earlier observations and evaluating its performance on later data.

Using an 80/20 chronological split ensures that:

- the model is trained on the majority of the available historical data,

- the test set remains a genuinely unseen out-of-sample period, and

- the evaluation reflects the model’s ability to generalise to new macro-financial conditions.

The test set (June 2021–January 2026) is particularly demanding: it covers the post-pandemic commodity rally, the 2022 inflation surge and the most aggressive Fed tightening cycle in 40 years, and elevated geopolitical risk following Russia’s invasion of Ukraine. This is a genuine test of whether the relationships estimated on the pre-2021 sample generalise to a structurally different macro regime.

The test set is not used for any modelling decisions. It is reserved solely for final performance evaluation.

1.5.2 Feature selection

The dataset contains a fairly large number of macro-financial indicators, and many of them capture related underlying economic forces, e.g interest-rate conditions, global activity, inflation pressures, commodity cycles, and financial market stress. Including all available predictors simultaneously therefore risks multicollinearity, where predictors contain overlapping information. Multicollinearity can inflate standard errors, make coefficient estimates unstable and difficult to interpret, and weaken out-of-sample performance.

Feature selection therefore proceeds in three steps:

- Structural filtering: predictors that are mechanically derived from variables already included are dropped first. For example,

us_10y_yieldandus_10y_breakeven_inflationare excluded becausereal_yieldalready combines them. - Sample-cost filtering:

usd_broad_indexis excluded as discussed in Section 1.3.4. Although economically relevant, it would substantially reduce the usable sample because of missing early observations. - Multicollinearity diagnostics (VIF): we compute VIFs on the remaining candidate set and iteratively remove redundant predictors, re-checking after each round until the remaining set is acceptably stable.

Step 1 & 2: Structural filtering and candidate set

After the above exclusions, the initial candidate predictor set is:

candidate_features = [

'MOVE_index', 'msci_emerging_markets', 'msci_world', 'sp_gsci',

'euro_area_inflation_expectations', 'us_inflation_expectations',

'commodity_equity_volatility', 'brent_price', 'world_trade_volume',

'world_industrial_production', 'gscpi', 'real_yield', 'nfci_monthly'

]Step 3: Iterative VIF pruning

def compute_vif(df, features):

vif_data = df[features].dropna()

return pd.DataFrame({

"feature": features,

"VIF": [variance_inflation_factor(vif_data.values, i)

for i in range(len(features))]

}).sort_values("VIF", ascending=False)

# Round 1: VIF on full candidate set

print("=== Round 1: Full candidate set ===")

print(compute_vif(train, candidate_features).round(2).to_string(index=False))=== Round 1: Full candidate set ===

feature VIF

world_trade_volume 4124.51

world_industrial_production 3483.54

msci_world 130.95

msci_emerging_markets 114.44

us_inflation_expectations 101.86

brent_price 69.10

sp_gsci 65.46

MOVE_index 40.18

euro_area_inflation_expectations 12.91

commodity_equity_volatility 11.62

real_yield 5.91

nfci_monthly 5.08

gscpi 2.58The full candidate set shows extreme multicollinearity. The clearest redundancy appears in three blocks. First, the global-cycle block — world_trade_volume, world_industrial_production, msci_world, and msci_emerging_markets — contains several variables that all move with the same broad world business cycle. Second, the commodity block — brent_price and sp_gsci — contains two closely related measures of commodity-market conditions. Third, the inflation-expectations block — us_inflation_expectations and euro_area_inflation_expectations — risks overlapping both with each other and with real_yield.

We begin by pruning the commodity block.

# Round 2: Remove brent_price

cands_r2 = [f for f in candidate_features if f != 'brent_price']

print("\n=== Round 2: After removing brent_price ===")

print(compute_vif(train, cands_r2).round(2).to_string(index=False))=== Round 2: After removing brent_price ===

feature VIF

world_trade_volume 3455.07

world_industrial_production 3030.78

us_inflation_expectations 94.12

msci_emerging_markets 85.11

msci_world 77.00

sp_gsci 58.96

MOVE_index 39.91

euro_area_inflation_expectations 11.90

commodity_equity_volatility 10.96

nfci_monthly 5.06

real_yield 4.90

gscpi 2.53brent_price is removed first because it is the narrower of the two commodity proxies. sp_gsci is retained at this stage because it is broader and better aligned with a general commodity-cycle interpretation, whereas brent_price is more oil-specific.

The next step is to collapse the global-cycle block by removing variables that duplicate the same underlying world business-cycle signal.

# Round 3: Collapse the global-cycle block

cands_r3 = [

'MOVE_index', 'msci_world', 'sp_gsci',

'euro_area_inflation_expectations', 'us_inflation_expectations',

'commodity_equity_volatility', 'gscpi', 'real_yield', 'nfci_monthly'

]

print("\n=== Round 3: After collapsing the global cycle block ===")

print(compute_vif(train, cands_r3).round(2).to_string(index=False))=== Round 3: After collapsing the global cycle block ===

feature VIF

us_inflation_expectations 77.35

sp_gsci 45.37

MOVE_index 27.62

msci_world 23.03

euro_area_inflation_expectations 10.67

commodity_equity_volatility 9.95

nfci_monthly 4.15

real_yield 3.92

gscpi 1.56In this round, world_trade_volume, world_industrial_production, and msci_emerging_markets are removed. The first two both proxy global real activity, while msci_emerging_markets overlaps strongly with msci_world. We retain msci_world as the broadest and cleanest representative of global market conditions. This substantially reduces the most extreme collinearity, but the model is still unstable: us_inflation_expectations, sp_gsci, MOVE_index, and msci_world all remain highly collinear.

We next address the inflation-expectations block.

# Round 4a: Remove euro-area inflation expectations

cands_r4a = [f for f in cands_r3 if f != 'euro_area_inflation_expectations']

print("\n=== Round 4: After removing inflation expectations (EU) ===")

print(compute_vif(train, cands_r4a).round(2).to_string(index=False))=== Round 4: After removing inflation expectations (EU) ===

feature VIF

us_inflation_expectations 77.26

sp_gsci 36.60

MOVE_index 24.13

msci_world 21.46

commodity_equity_volatility 9.09

nfci_monthly 4.08

real_yield 3.75

gscpi 1.50euro_area_inflation_expectations is removed first because, in a USD gold-pricing setting, it is less central than the US measure. However, this change barely affects the collinearity problem: us_inflation_expectations remains extremely high.

We therefore remove the US inflation-expectations series as well.

# Round 4b: Remove US inflation expectations

cands_r4b = [f for f in cands_r4a if f != 'us_inflation_expectations']

print("\n=== Round 4: After removing inflation expectations (US) ===")

print(compute_vif(train, cands_r4b).round(2).to_string(index=False))=== Round 4: After removing inflation expectations (US) ===

feature VIF

MOVE_index 22.22

msci_world 13.32

sp_gsci 11.73

commodity_equity_volatility 9.02

nfci_monthly 3.85

real_yield 3.19

gscpi 1.47This confirms that the inflation-expectations variables were contributing substantial redundancy. Once they are removed, the model is much improved, but not yet fully stable: MOVE_index, msci_world, and sp_gsci still remain above the threshold of 10.

The final pruning step is therefore a stabilisation round.

# Round 5: Remove sp_gsci and MOVE_index

cands_r5 = [

'msci_world', 'commodity_equity_volatility',

'real_yield', 'nfci_monthly', 'gscpi'

]

print("\n=== Round 5: After removing sp_gsci and MOVE_index ===")

print(compute_vif(train, cands_r5).round(2).to_string(index=False))=== Round 5: After removing sp_gsci and MOVE_index ===

feature VIF

msci_world 8.32

commodity_equity_volatility 7.17

real_yield 2.12

nfci_monthly 2.03

gscpi 1.27At this stage, all remaining VIFs are below 10, so the iterative pruning process stops here.

This leaves the following final feature set:

candidate_features_final = [

'msci_world',

'commodity_equity_volatility',

'real_yield',

'nfci_monthly',

'gscpi'

]

print("\n=== Final candidate set ===")

print(compute_vif(train, candidate_features_final).round(2).to_string(index=False))=== Final candidate set ===

feature VIF

msci_world 8.32

commodity_equity_volatility 7.17

real_yield 2.12

nfci_monthly 2.03

gscpi 1.27The final retained predictors capture five economically distinct channels relevant to gold returns:

real_yield: the opportunity cost of holding gold relative to inflation-protected bondsnfci_monthly: broad financial conditions, including credit and liquidity stressgscpi: global supply-chain pressure and the broader supply-side inflation environmentmsci_world: global equity-market conditions and investor risk sentimentcommodity_equity_volatility: uncertainty in commodity-linked equity markets, capturing an additional dimension of resource-sector risk

Two variables that were initially attractive on theoretical grounds (MOVE_index and sp_gsci) do not survive the final specification because, after the earlier pruning rounds, they still remain too strongly entangled with the broader market-stress and commodity/global-cycle structure. Retaining them would therefore add instability without enough distinct information to justify their inclusion.

Importantly, the decision to stop at 5 predictors is not driven by sample size alone. The training set contains 221 observations, so even a model with 10 predictors would still have about 22 observations per predictor, and a model with 12 predictors would still have about 18 observations per predictor. Those ratios are not automatically problematic. However, feature selection is not only about satisfying a minimum observations-to-predictor rule. In a relatively small macro-financial time-series setting, adding more variables that are strongly related to one another can reduce stability and make the model harder to interpret without adding much incremental signal.

For that reason, 5 predictors is a reasonable middle ground: small enough to avoid the severe redundancy seen in the full candidate set, but still rich enough to retain several distinct economic channels.

With 5 predictors on 221 training observations, the observation-to-predictor ratio is 44.2:1, which remains comfortably conservative.

1.5.3 Baseline linear regression

We begin with an ordinary least squares (OLS) regression as a baseline forecasting model.

Linear models provide a useful starting point because they are transparent, easy to interpret, and widely used as benchmarks in macro-financial forecasting. Even if the true relationship between macro variables and gold returns is nonlinear, a linear specification allows us to test whether the selected macro-financial indicators contain any systematic predictive signal.

features = candidate_features_final

train_clean = train[features + ['target']].dropna()

test_clean = test[features + ['target']].dropna()

X_train = train_clean[features]

y_train = train_clean['target']

X_test = test_clean[features]

y_test = test_clean['target']

lr = LinearRegression()

lr.fit(X_train, y_train)

y_pred_train = lr.predict(X_train)

y_pred_test = lr.predict(X_test)Evaluation metrics

Forecast accuracy is evaluated using three complementary metrics.

R² (Coefficient of Determination): R² measures the proportion of variance in the target variable explained by the model. In financial return forecasting, R² values are typically very small, because short-horizon asset returns are dominated by unpredictable news and market shocks. A negative out-of-sample R² indicates that the model performs worse than a historical mean forecast, which serves as a natural baseline benchmark.

Mean Absolute Error (MAE): MAE measures the average absolute forecast error in percentage points of return. Because financial returns occasionally exhibit large shocks, MAE provides a robust measure of the typical prediction error.

Root Mean Squared Error (RMSE): RMSE penalises large errors more heavily than MAE because errors are squared before averaging. This metric is therefore sensitive to large forecasting mistakes during crisis periods, which are economically important in financial markets.

def evaluate(y_true, y_pred, label):

r2 = r2_score(y_true, y_pred)

mae = mean_absolute_error(y_true, y_pred)

rmse = np.sqrt(mean_squared_error(y_true, y_pred))

print(f"{label}: R²={r2:.4f}, MAE={mae:.4f}%, RMSE={rmse:.4f}%")

evaluate(y_train, y_pred_train, "Train")

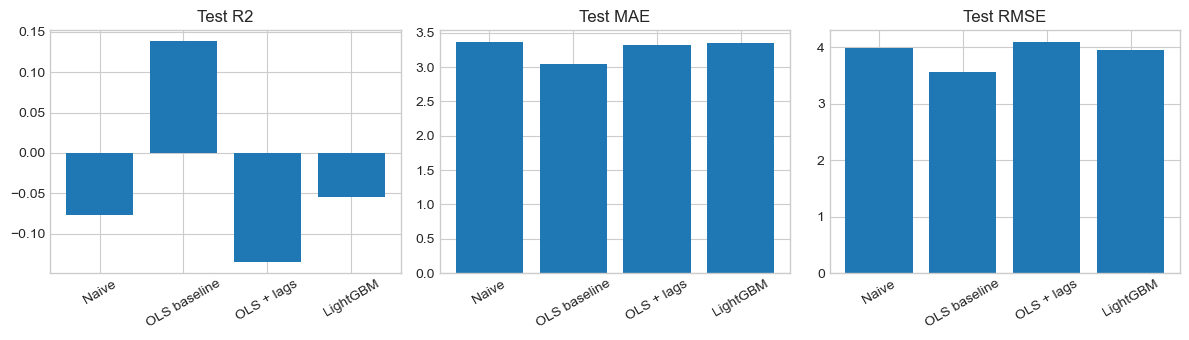

evaluate(y_test, y_pred_test, "Test ")Train: R²=0.0490, MAE=3.8366%, RMSE=4.9046%

Test : R²=0.1383, MAE=3.0510%, RMSE=3.5693%Comparison with a naïve forecast

To evaluate whether the regression model adds value, we compare it to a simple naïve benchmark that predicts the historical mean return every month.

mean_train = y_train.mean()

y_pred_naive = np.full(len(y_test), mean_train)

print(f"Training mean return: {mean_train:.4f}%")

evaluate(y_test, y_pred_naive, "Naive")Training mean return: 0.8506%

Naive: R²=-0.0752, MAE=3.3639%, RMSE=3.9871%| Model | Test R² | Test MAE | Test RMSE |

|---|---|---|---|

| Naïve (historical mean) | −0.075 | 3.36% | 3.99% |

| OLS baseline | +0.138 | 3.05% | 3.57% |

The regression model outperforms the naïve forecast across all three evaluation metrics. Relative to the historical-mean benchmark, it reduces the mean absolute error from 3.36% to 3.05% and the root mean squared error from 3.99% to 3.57%, while also achieving a positive out-of-sample \(R^2\) of 0.138. Because the target variable is the monthly gold return measured in percentage points, these errors can be read directly on the scale of the outcome: on average, the model’s predictions miss the realised next-month return by about 3.05 percentage points, and larger misses are substantial enough to produce an RMSE of 3.57 percentage points. An MAE of 3.05 percentage points is non-trivial given that monthly gold returns themselves are often only a few percentage points in magnitude.

This suggests that the selected macro-financial variables contain some genuine predictive information about next-month gold returns, since the model improves consistently on the historical-mean benchmark. However, the gains remain modest. In practical terms, the model is somewhat better at forecasting monthly returns than simply predicting the average, but forecast errors are still large relative to the scale of month-to-month gold-price movements. The results should therefore be interpreted as evidence of limited but real predictive value, rather than strong forecasting power.

Adjusted R²

Because the regression model includes several predictors, it is useful to compute the adjusted R², which penalises the inclusion of unnecessary variables.

n, p = len(X_train), len(features)

adj_r2_train = 1 - (1 - r2_score(y_train, y_pred_train)) * (n - 1) / (n - p - 1)

print(f"Adjusted R² (train): {adj_r2_train:.4f}")Adjusted R² (train): 0.0247The adjusted R² of 0.0247 is smaller than the raw in-sample R² of 0.049, reflecting the penalty for using 5 predictors on 221 observations. The model explains less than 5% of gold return variation in the training period. This is a key finding: macro indicators explain very little of month-to-month gold return variance, consistent with the near-efficiency of commodity futures markets.

Coefficient interpretation

coef_df = pd.DataFrame({'Feature': features, 'Coefficient': lr.coef_})

coef_df = coef_df.reindex(coef_df['Coefficient'].abs().sort_values(ascending=False).index)

print(coef_df.to_string(index=False))

print(f"\nIntercept: {lr.intercept_:.4f}") Feature Coefficient

real_yield 1.207170

gscpi 0.342028

nfci_monthly 0.285436

commodity_equity_volatility 0.024486

msci_world 0.000333

Intercept: -0.7898We interpret each coefficient as the predicted change in next-month gold return (in percentage points) associated with a one-unit increase in the predictor, holding the other included predictors constant. Because these predictors are measured in different units, the coefficients are most informative for their sign and conditional role in the model, rather than as directly comparable effect sizes. More broadly, these are conditional predictive associations, not isolated causal effects.

| Feature | Coefficient | Economic Interpretation |

|---|---|---|

real_yield |

+1.21 | A 1 percentage point increase in real yield is associated with a 1.21 percentage point increase in next-month gold return, holding the other predictors fixed. This sign is counterintuitive relative to standard theory, which would usually predict a negative relationship because higher real yields raise the opportunity cost of holding gold. In this sample, however, real_yield appears to be capturing more than the textbook opportunity-cost channel. In particular, some high-real-yield observations occur in stressed macro-financial environments or in the aftermath of major shocks, when gold subsequently performed strongly. In the multivariable regression, real_yield may therefore be acting partly as a broader regime indicator rather than a pure measure of carrying cost. This coefficient should be interpreted cautiously. |

gscpi |

+0.34 | Higher global supply-chain pressure is associated with slightly higher next-month gold returns. This is directionally consistent with the idea that supply disruptions raise inflationary pressure and macro uncertainty, both of which can support demand for gold. The coefficient is positive and economically plausible, though modest in size. |

nfci_monthly |

+0.29 | Tighter financial conditions are associated with slightly higher next-month gold returns. This is consistent with gold’s safe-haven role: when credit conditions deteriorate and broader financial stress rises, demand for defensive assets may increase. The effect is not large, but the sign is theoretically sensible. |

commodity_equity_volatility |

+0.02 | The coefficient is very small, suggesting that commodity-linked equity volatility adds little incremental predictive power once broader financial conditions, supply-chain pressure, and real yields are already controlled for. Its positive sign is not implausible.Greater uncertainty in commodity-related sectors could coincide with stronger demand for real assets, but its contribution appears limited. |

msci_world |

+0.0003 | The coefficient is positive but economically tiny on its raw scale, indicating that global equity-market conditions add very little marginal predictive signal once the other macro-financial variables are already in the model. In practice, this variable may still help absorb some broad market-cycle variation, but the coefficient suggests only a minimal direct association with next-month gold returns in this specification. |

The intercept is −0.7898, meaning that when all predictors are set to zero, the model predicts a next-month gold return of about −0.79 percentage points. Because zero is not necessarily a substantively meaningful value for every predictor, the intercept is mainly a calibration term rather than an economically important parameter.

Flag on counterintuitive signs: The positive coefficient on real_yield goes against the usual theoretical expectation and should not be over-interpreted. Rather than invalidating the model, it suggests that simple macro levels may proxy broader crisis or regime conditions, especially in a relatively small historical sample. This is an important reminder that predictive regression coefficients in macro-financial settings do not always map cleanly onto textbook comparative statics.

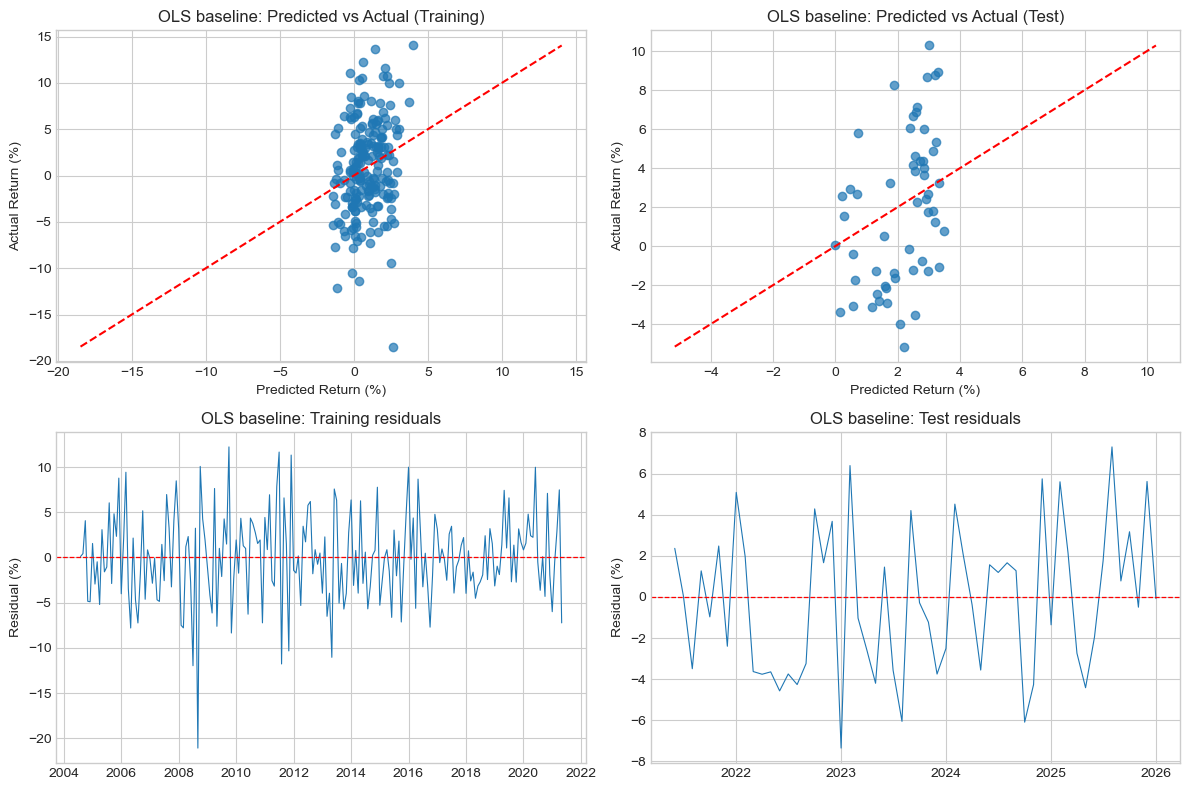

Predicted vs actual returns

fig, axes = plt.subplots(1, 2, figsize=(12, 5))

for ax, y_true, y_pred, title in [

(axes[0], y_train, y_pred_train, "Training"),

(axes[1], y_test, y_pred_test, "Test")

]:

ax.scatter(y_pred, y_true, alpha=0.7)

min_val = min(y_true.min(), y_pred.min())

max_val = max(y_true.max(), y_pred.max())

ax.plot([min_val, max_val], [min_val, max_val], linestyle="--", color='red')

ax.set_xlabel("Predicted Return (%)")

ax.set_ylabel("Actual Return (%)")

ax.set_title(f"Model predictions span a narrow band; actual returns span much wider: {title}",

fontsize=9)

plt.tight_layout()

plt.savefig('w08-gold-baseline-predicted-vs-actual.png', dpi=150)

plt.show()

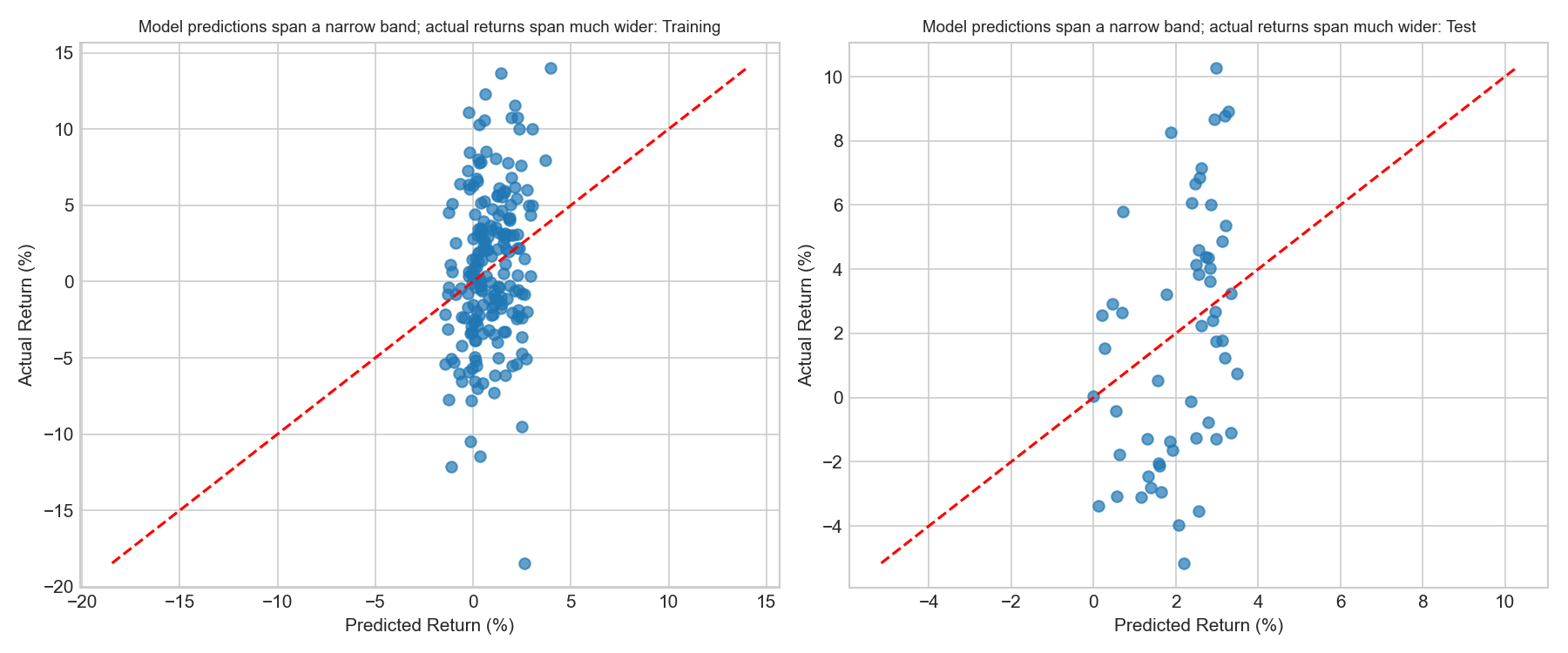

Several patterns are visible.

First, predicted returns lie in a much narrower range than actual returns. While realised monthly gold returns range roughly from −18% to +14%, the model’s predictions cluster between approximately −2% and +5%. This behaviour is typical in financial forecasting: because extreme market movements are usually driven by unexpected events, macro-financial predictors explain only a small portion of return variation.

Second, the points are broadly aligned with the 45-degree line, indicating that higher predicted returns tend to correspond to higher realised returns, although the relationship remains weak. The positive out-of-sample R² of 0.138 confirms that the model extracts a genuine signal, even if it cannot predict the magnitude of extreme events.

Residual analysis

# Build aligned residual tables from the cleaned samples actually used by the model

train_results = train_clean.copy()

train_results["date"] = train.loc[train_clean.index, "date"].values

train_results["y_true"] = y_train.values

train_results["y_pred"] = y_pred_train

train_results["residual"] = train_results["y_true"] - train_results["y_pred"]

train_results["abs_residual"] = train_results["residual"].abs()

test_results = test_clean.copy()

test_results["date"] = test.loc[test_clean.index, "date"].values

test_results["y_true"] = y_test.values

test_results["y_pred"] = y_pred_test

test_results["residual"] = test_results["y_true"] - test_results["y_pred"]

test_results["abs_residual"] = test_results["residual"].abs()

print("Largest training residuals:")

print(

train_results.sort_values("abs_residual", ascending=False)[

["date", "y_true", "y_pred", "residual"]

].head(8).to_string(index=False)

)

print("\nLargest test residuals:")

print(

test_results.sort_values("abs_residual", ascending=False)[

["date", "y_true", "y_pred", "residual"]

].head(8).to_string(index=False)

)Largest training residuals:

date y_true y_pred residual

2008-09-01 -18.460490 2.616010 -21.076500

2009-10-01 13.638985 1.411985 12.227000

2008-07-01 -9.483039 2.483617 -11.966656

2011-08-01 -11.432003 0.342359 -11.774362

2011-07-01 12.291564 0.634369 11.657195

2011-12-01 11.079908 -0.243265 11.323173

2013-05-01 -12.153625 -1.113672 -11.039953

2011-11-01 -10.483917 -0.155337 -10.328580

Largest test residuals:

date y_true y_pred residual

2023-01-01 -5.158525 2.192199 -7.350724

2025-08-01 10.284738 2.985871 7.298867

2023-02-01 8.264823 1.874202 6.390621

2024-10-01 -3.528171 2.561332 -6.089502

2023-08-01 -3.980483 2.070039 -6.050522

2024-12-01 8.674229 2.929658 5.744571

2025-12-01 8.905006 3.286736 5.618269

2025-02-01 8.784834 3.182157 5.602676train_dates = train_results["date"]

test_dates = test_results["date"]

fig, axes = plt.subplots(2, 2, figsize=(12, 8))

# Residuals vs predicted

for ax, results, label in [

(axes[0,0], train_results, "Training"),

(axes[0,1], test_results, "Test")

]:

ax.scatter(results["y_pred"], results["residual"],

alpha=0.6, s=30, color="steelblue")

ax.axhline(0, color="red", linestyle="--", linewidth=0.9)

ax.set_xlabel("Predicted Gold Return (%)")

ax.set_ylabel("Residual (%)")

ax.set_title(f"Residuals vs Predicted: {label}")

# Residuals over time

axes[1,0].plot(train_dates, train_results["residual"],

color="steelblue", linewidth=0.8)

axes[1,0].axhline(0, color="red", linestyle="--", linewidth=0.9)

axes[1,0].set_title("Training residuals: largest misses cluster around crisis episodes")

axes[1,0].set_ylabel("Residual (%)")

axes[1,1].plot(test_dates, test_results["residual"],

color="steelblue", linewidth=0.8)

axes[1,1].axhline(0, color="red", linestyle="--", linewidth=0.9)

axes[1,1].set_title("Test residuals: large misses remain episodic rather than systematic")

axes[1,1].set_ylabel("Residual (%)")

plt.tight_layout()

plt.savefig("w08-residuals-baseline.png", dpi=150)

plt.show()

# Distribution of test residuals

fig, ax = plt.subplots(figsize=(7, 3))

ax.hist(test_results["residual"], bins=15, color="steelblue", alpha=0.8, edgecolor="white")

ax.axvline(0, color="red", linestyle="--")

mean_resid = test_results["residual"].mean()

ax.axvline(mean_resid, color="orange", linestyle="--",

label=f"Mean residual = {mean_resid:.2f}%")

ax.set_title("Test residual distribution: approximately centred but fat-tailed")

ax.set_xlabel("Residual (%)")

ax.legend(fontsize=8)

plt.tight_layout()

plt.savefig("w08-residuals-dist-baseline.png", dpi=150)

plt.show()

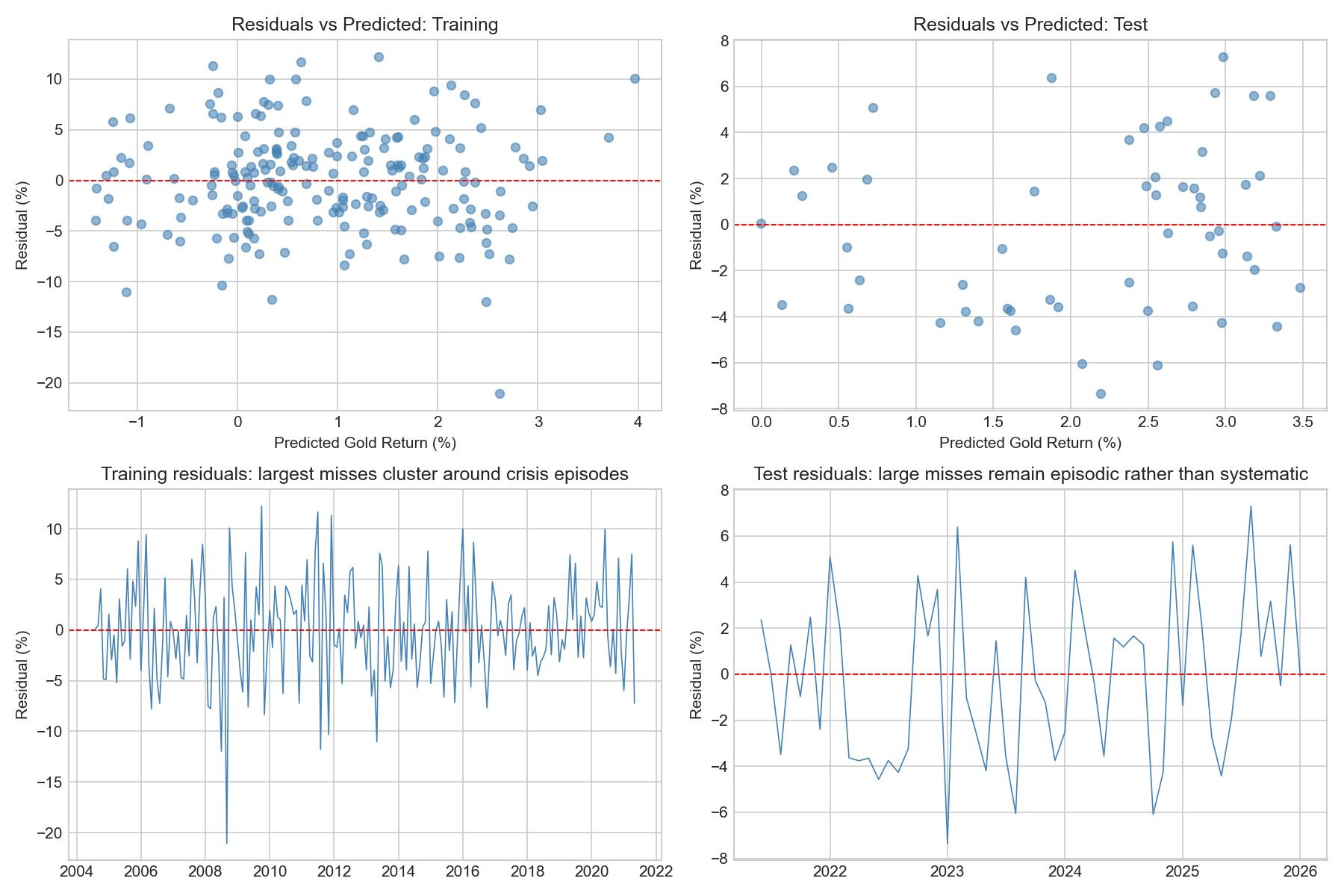

Residuals fluctuate around zero in both the training and test samples, suggesting that the model does not exhibit strong systematic bias overall. The residual-versus-predicted plots also do not show a strong remaining linear pattern, which is reassuring: the model captures some broad macro-financial structure, even though a substantial share of return variation remains unexplained.

The table of largest residuals shows that the biggest misses are concentrated in identifiable historical episodes rather than scattered randomly across time. In the training sample, the most extreme errors occur in July–September 2008, October 2009, July–December 2011, and May 2013. These periods correspond to the Global Financial Crisis, its aftermath, the Eurozone sovereign-debt turmoil, and the 2013 taper-period market dislocation. In such episodes, gold moved abruptly because of crisis dynamics, safe-haven flows, and rapid repricing in inflation and risk expectations, forces that a linear macro-financial model can only capture imperfectly.

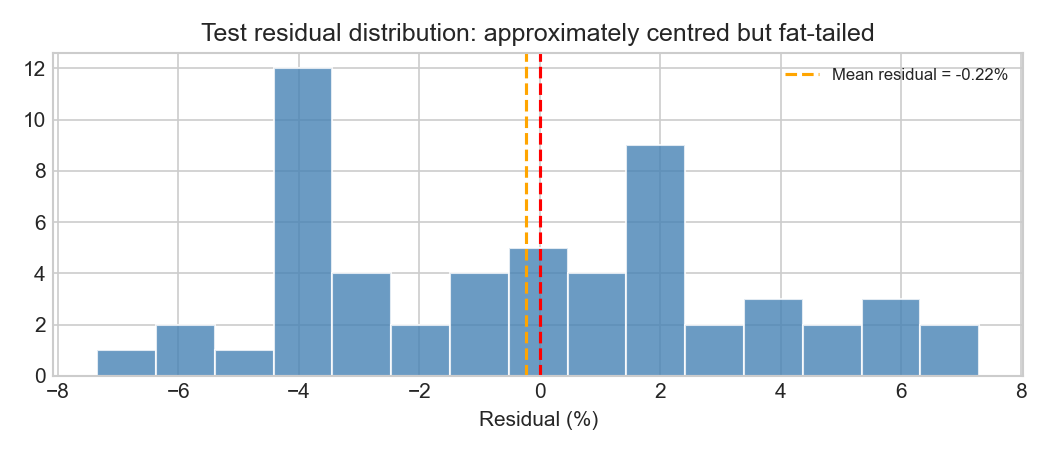

In the test sample, the average residual is close to zero (around −0.22 percentage points), so there is little evidence of a strong overall tendency to over-predict or under-predict returns across the holdout period as a whole. The largest misses are instead episodic. The biggest residuals occur in January–February 2023, August 2023, October 2024, and several months in 2025. This suggests that the model performs reasonably on average but still struggles during months dominated by abrupt repricing, geopolitical uncertainty, or sharp shifts in safe-haven demand.

The residual distribution is centred close to zero but exhibits fat tails, meaning that large forecast errors occur more frequently than would be expected under a normal distribution. This is characteristic of financial return data and reflects the importance of occasional large market shocks. Overall, the residual diagnostics suggest that the model is reasonably well calibrated on average, but still struggles with the shock-driven and episodic nature of gold returns.

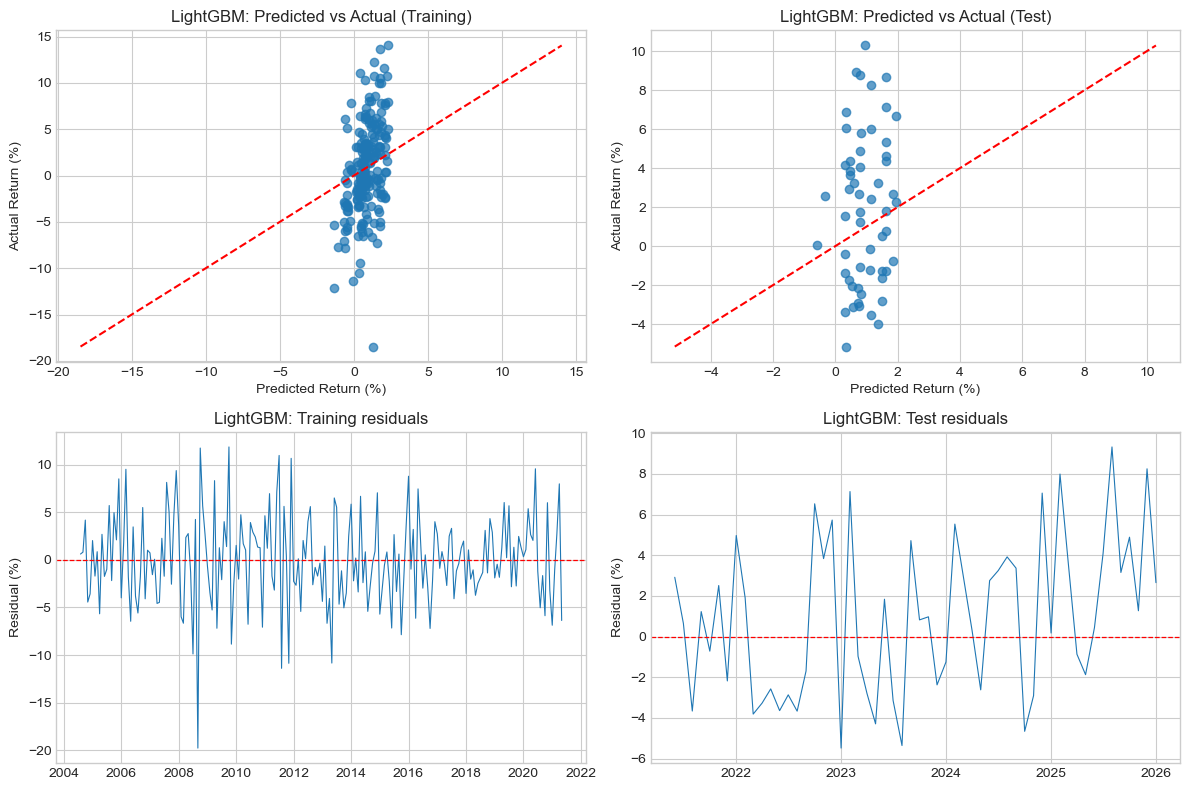

1.5.4 Improving the baseline model

The baseline OLS model outperformed the naïve historical-mean forecast on the final holdout test set, but its predictive power remained limited. It achieved a positive test \(R^2\) of 0.138, reduced MAE from 3.37% to 3.05%, and reduced RMSE from 3.99% to 3.57%. These are encouraging results, but they do not imply that the model is strong in an absolute sense: a typical forecast error of around 3 percentage points remains large relative to the scale of monthly gold returns.

The earlier diagnostics also showed that the baseline model’s largest misses occur during episodic shock periods, such as the Global Financial Crisis, the Eurozone stress period, and several months in 2023–2025. This suggests that the main weakness of the linear baseline is not strong average bias, but difficulty in dealing with regime shifts, delayed macro transmission, and abrupt non-linear repricing.

On that basis, three extensions are worth testing:

- Time-aware cross-validation to assess whether the apparent baseline signal is stable across historical subperiods

- Lagged predictors to test whether macro-financial effects transmit with delay

- A non-linear tree-based model (LightGBM) to test whether threshold effects and interactions improve forecasting accuracy

The aim is not simply to search for a slightly higher \(R^2\), but to determine whether a richer model can improve forecasting consistently across multiple metrics and in a way that remains economically meaningful.

Step 1: Time-Aware cross-validation on the baseline

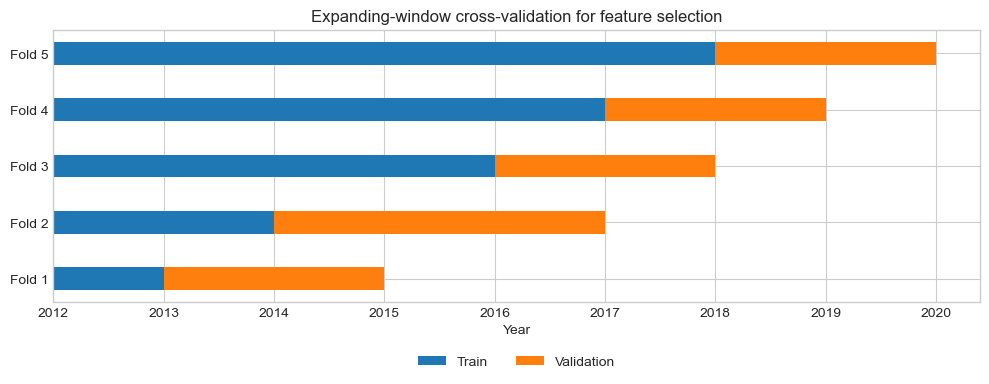

Before modifying the model, we evaluate the baseline OLS specification using expanding-window cross-validation within the training sample.

Why TimeSeriesSplit rather than random k-fold? In a time series, future observations must never be used to predict the past. Random k-fold cross-validation would mix different time periods and introduce look-ahead bias. TimeSeriesSplit respects chronology by ensuring that each validation window comes strictly after its training window.

Why this matters here: the sample spans several very different regimes, i.e the post-dot-com period, the Global Financial Crisis, the Eurozone crisis, the post-2013 adjustment, and the inflation/geopolitical environment of the 2020s. A model that performs well in one train/test split may simply be benefitting from a favourable historical window.

To keep the evaluation consistent with the holdout analysis, we compute \(R^2\), MAE, and RMSE in each fold.

tscv = TimeSeriesSplit(n_splits=5)

def cv_evaluate_ols(X, y, splitter):

r2_scores, mae_scores, rmse_scores = [], [], []

for idx_tr, idx_val in splitter.split(X):

model = LinearRegression()

model.fit(X.iloc[idx_tr], y.iloc[idx_tr])

y_val_true = y.iloc[idx_val]

y_val_pred = model.predict(X.iloc[idx_val])

r2_scores.append(r2_score(y_val_true, y_val_pred))

mae_scores.append(mean_absolute_error(y_val_true, y_val_pred))

rmse_scores.append(np.sqrt(mean_squared_error(y_val_true, y_val_pred)))

return r2_scores, mae_scores, rmse_scores

cv_baseline_r2, cv_baseline_mae, cv_baseline_rmse = cv_evaluate_ols(X_train, y_train, tscv)

print(f"Baseline OLS — CV R² per fold: {[round(s,4) for s in cv_baseline_r2]}")

print(f"Baseline OLS — CV MAE per fold: {[round(s,4) for s in cv_baseline_mae]}")

print(f"Baseline OLS — CV RMSE per fold: {[round(s,4) for s in cv_baseline_rmse]}")

print(f"Mean CV R²: {np.mean(cv_baseline_r2):.4f} ± {np.std(cv_baseline_r2):.4f}")

print(f"Mean CV MAE: {np.mean(cv_baseline_mae):.4f}% ± {np.std(cv_baseline_mae):.4f}")

print(f"Mean CV RMSE: {np.mean(cv_baseline_rmse):.4f}% ± {np.std(cv_baseline_rmse):.4f}")Baseline OLS — CV R² per fold: [-26.7551, -0.5363, -0.2154, -0.1248, -0.7991]

Baseline OLS — CV MAE per fold: [26.6544, 5.7512, 4.3148, 3.1111, 4.2859]

Baseline OLS — CV RMSE per fold: [34.9858, 6.7938, 5.2032, 4.0605, 5.5343]

Mean CV R²: -5.6861 ± 10.5372

Mean CV MAE: 8.8234% ± 8.9547

Mean CV RMSE: 11.3155% ± 11.8670To understand where this instability comes from, we inspect the date ranges of the folds.

def inspect_tscv_folds(X, y, dates, splitter, model_class=LinearRegression, model_kwargs=None):

if model_kwargs is None:

model_kwargs = {}

fold_info = []

for fold, (idx_tr, idx_val) in enumerate(splitter.split(X), start=1):

model = model_class(**model_kwargs)

model.fit(X.iloc[idx_tr], y.iloc[idx_tr])

y_val_pred = model.predict(X.iloc[idx_val])

y_val_true = y.iloc[idx_val]

val_dates = dates.iloc[idx_val]

fold_info.append({

"fold": fold,

"train_start": dates.iloc[idx_tr].min(),

"train_end": dates.iloc[idx_tr].max(),

"val_start": val_dates.min(),

"val_end": val_dates.max(),

"r2": r2_score(y_val_true, y_val_pred),

"mae": mean_absolute_error(y_val_true, y_val_pred),

"rmse": np.sqrt(mean_squared_error(y_val_true, y_val_pred))

})

return pd.DataFrame(fold_info)

baseline_fold_df = inspect_tscv_folds(

X_train,

y_train,

train.loc[train_clean.index, "date"].reset_index(drop=True),

tscv

)

print(baseline_fold_df.to_string(index=False)) fold train_start train_end val_start val_end r2 mae rmse

1 2004-08-01 2007-08-01 2007-09-01 2010-05-01 -26.755052 26.654353 34.985844

2 2004-08-01 2010-05-01 2010-06-01 2013-02-01 -0.536315 5.751158 6.793764

3 2004-08-01 2013-02-01 2013-03-01 2015-11-01 -0.215379 4.314753 5.203226

4 2004-08-01 2015-11-01 2015-12-01 2018-08-01 -0.124761 3.111065 4.060525

5 2004-08-01 2018-08-01 2018-09-01 2021-05-01 -0.799089 4.285863 5.534280These cross-validation results are much weaker than the single holdout test result. The mean CV \(R^2\) is strongly negative, and the fold-to-fold variation is extremely large. The most striking case is Fold 1, whose validation window runs from September 2007 to May 2010. That period covers the onset of the Global Financial Crisis and its immediate aftermath. The model is trained only on pre-crisis data and then evaluated in a radically different regime characterised by extreme stress, collapsing inflation expectations, emergency monetary easing, and unusually large gold moves. The very poor fold performance therefore reflects a genuine regime break, not merely ordinary forecast noise.

Later folds are less catastrophic but still weak. Fold 2 covers June 2010 to February 2013, a period overlapping with Eurozone sovereign-debt stress and elevated safe-haven demand, while Fold 5 covers September 2018 to May 2021, spanning the COVID shock and its aftermath. Together, these results suggest that the macro-financial relationship captured by the baseline model is fragile and time-varying. The positive holdout result remains encouraging, but it should be interpreted as period-specific rather than universally stable.

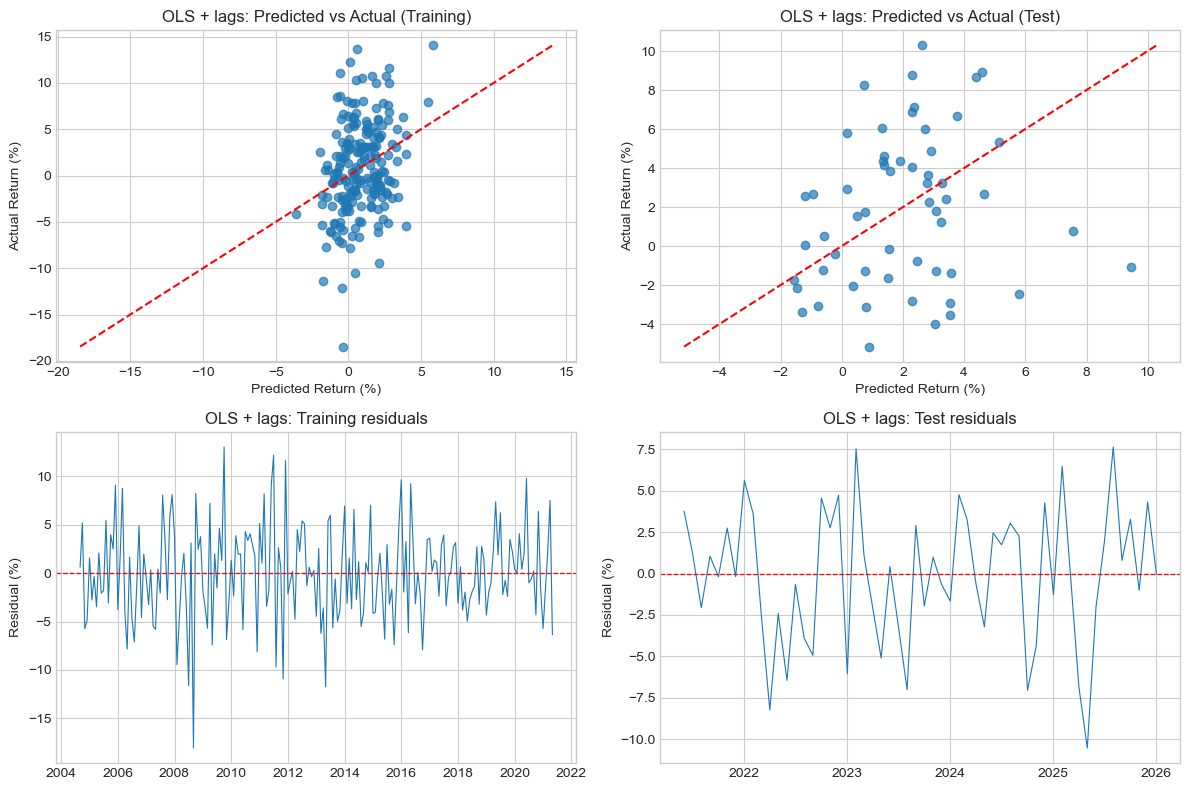

Step 2: Linear regression with lagged features

The baseline model already uses predictors observed in month \(t\) to forecast the gold return in month \(t+1\). Adding lags therefore tests a more specific question:

Do macro-financial conditions from month \(t-1\) contain useful information for forecasting returns at month \(t+1\), beyond the information already present at month \(t\)?

This is plausible for both economic and empirical reasons.

From an economic perspective, some channels may operate with delay:

- tighter financial conditions may influence portfolio allocation gradually rather than instantly

- inflationary pressure may feed through to gold demand over more than one month

- shifts in global sentiment may diffuse across markets with a lag

From an empirical perspective, the baseline residuals suggest that the model sometimes captures the right broad macro backdrop, but misses the timing of gold’s response. Lagged variables therefore test whether part of the baseline weakness reflects delayed transmission rather than complete absence of signal.

We augment the baseline feature set with one-month lags of each predictor.

for f in features:

modelling_df[f + "_lag1"] = modelling_df[f].shift(1)

features_lagged = features + [f + "_lag1" for f in features]

train_lag = modelling_df.iloc[:split_idx].copy()

test_lag = modelling_df.iloc[split_idx:].copy()

train_lag = train_lag[["date"] + features_lagged + ["target"]].dropna()

test_lag = test_lag[["date"] + features_lagged + ["target"]].dropna()

X_train_lag = train_lag[features_lagged]

y_train_lag = train_lag["target"]

X_test_lag = test_lag[features_lagged]

y_test_lag = test_lag["target"]

print(f"Lagged model training: {len(X_train_lag)} obs, {len(features_lagged)} features")

print(f"Obs-to-predictor ratio: {len(X_train_lag) / len(features_lagged):.1f}:1")Lagged model training: 201 obs, 10 features

Obs-to-predictor ratio: 20.1:1The first observation is lost mechanically because lagged values are unavailable for the first month. This is deterministic missingness, not a data-quality problem, and is handled appropriately by dropna().

lr_lag = LinearRegression()

lr_lag.fit(X_train_lag, y_train_lag)

evaluate(y_train_lag, lr_lag.predict(X_train_lag), "Lagged Train")

evaluate(y_test_lag, lr_lag.predict(X_test_lag), "Lagged Test ")Lagged Train: R²=0.0837, MAE=3.8229%, RMSE=4.8257%

Lagged Test : R²=-0.1353, MAE=3.3143%, RMSE=4.0970%The lagged model improves in-sample fit slightly relative to the baseline, but performs materially worse out of sample. On the holdout test set, its \(R^2\) turns negative, its MAE rises from 3.05% to 3.31%, and its RMSE rises from 3.57% to 4.10%. This is strong evidence that the additional lagged predictors are adding noise rather than useful predictive information at the one-month horizon.

We confirm this with expanding-window CV.

cv_lagged_r2, cv_lagged_mae, cv_lagged_rmse = cv_evaluate_ols(X_train_lag, y_train_lag, tscv)

print(f"Lagged OLS — CV R² per fold: {[round(s,4) for s in cv_lagged_r2]}")

print(f"Lagged OLS — CV MAE per fold: {[round(s,4) for s in cv_lagged_mae]}")

print(f"Lagged OLS — CV RMSE per fold: {[round(s,4) for s in cv_lagged_rmse]}")

print(f"Mean CV R²: {np.mean(cv_lagged_r2):.4f} ± {np.std(cv_lagged_r2):.4f}")

print(f"Mean CV MAE: {np.mean(cv_lagged_mae):.4f}% ± {np.std(cv_lagged_mae):.4f}")

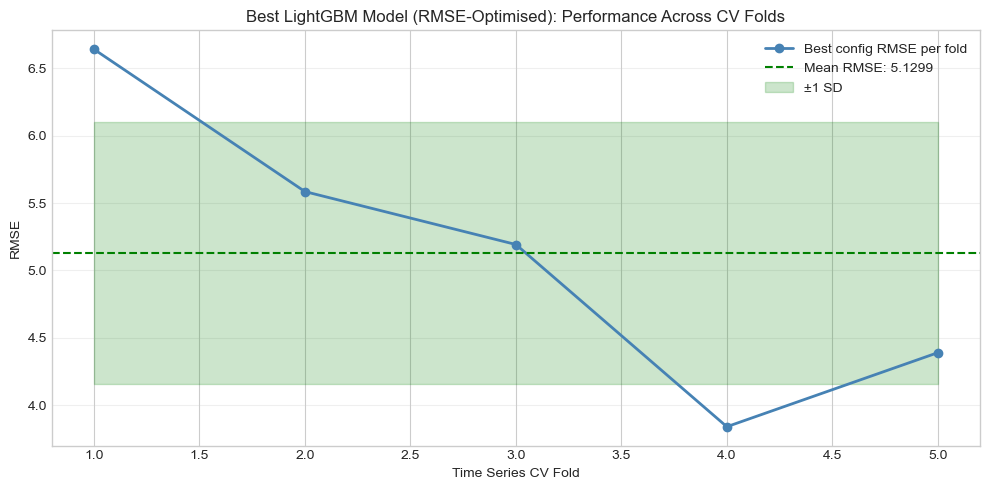

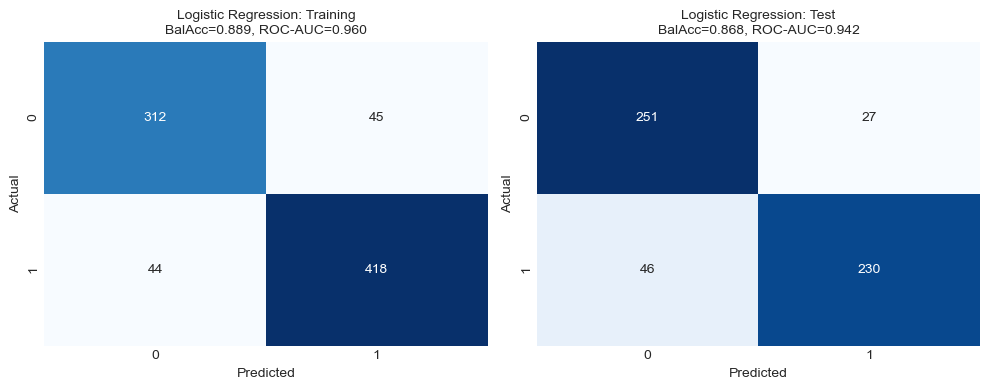

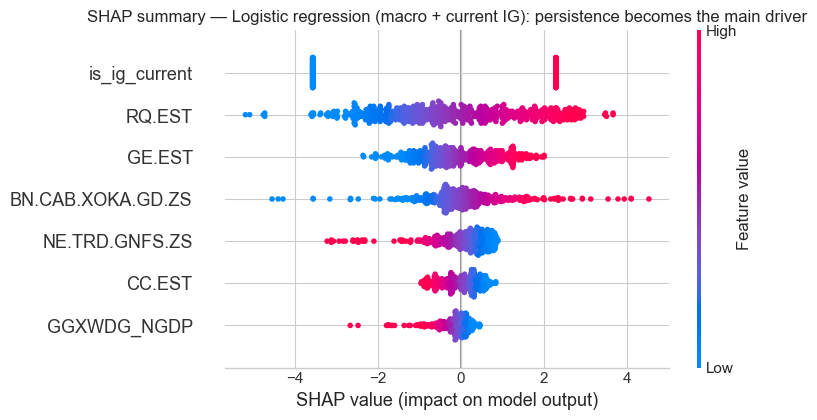

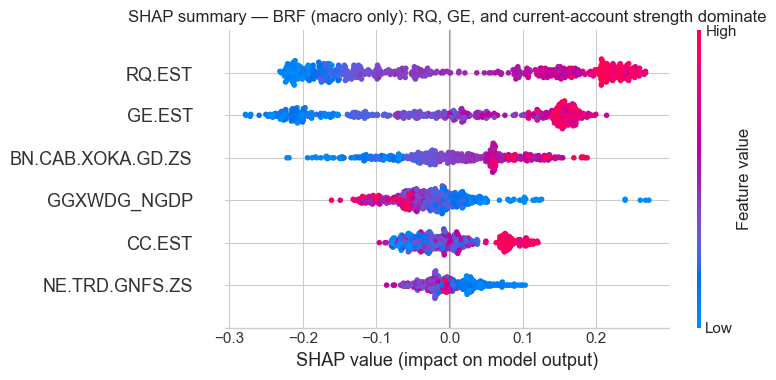

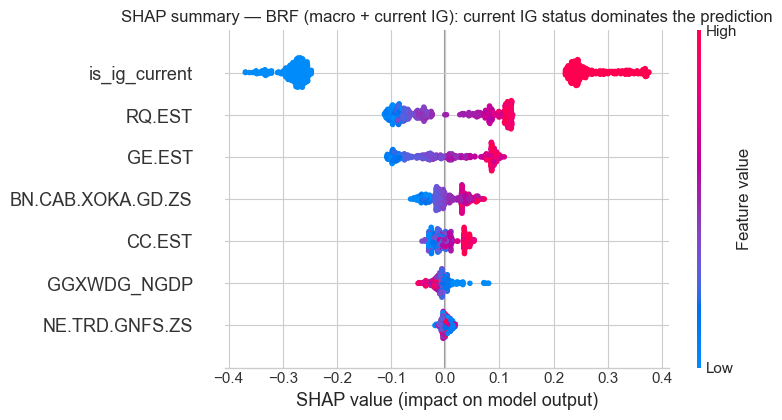

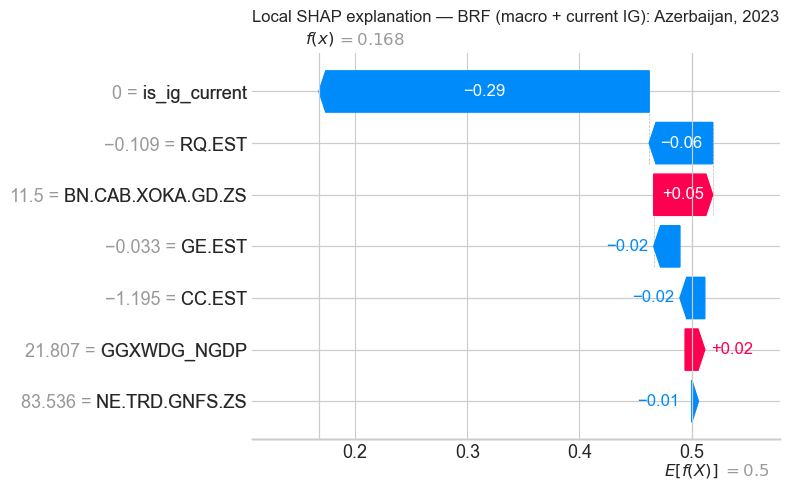

print(f"Mean CV RMSE: {np.mean(cv_lagged_rmse):.4f}% ± {np.std(cv_lagged_rmse):.4f}")Lagged OLS — CV R² per fold: [-7.9355, -0.2412, -0.2180, -0.2637, -0.7112]