flowchart LR

scrape["scrapy crawl"]:::process

scraped[("data/scraped/")]:::data

off(["OpenFoodFacts API"]):::external

enrich["enrich"]:::process

enriched[("data/enriched/")]:::data

serve["uvicorn + FastAPI"]:::process

scrape --> scraped

scraped --> enrich

off --> enrich

enrich --> enriched

enriched --> serve

classDef process fill:#0C56AA,stroke:#0B3175,color:#fff

classDef data fill:#fff,stroke:#0B3175,color:#0B3175,stroke-width:2px

classDef external fill:#FFF0E6,stroke:#F33463,color:#212121,stroke-width:2px

🖥️ Week 07 Lecture

From Food to Climate: Pipelines, Automation, and TPI

This week, we talk about common practices for automating data processing pipelines, but this time we shift our attention to a new data source and a new domain!

In this lecture, you will hear from former DS205 students about their experience building data products for themselves and/or their employers. You will also meet our collaborators from the Transition Pathway Initiative Centre (TPI Centre) who will talk about their work assessing how banks, companies, and countries are managing their transition to a low-carbon economy. They will share how they are using the data pipeline built by the first DS205 cohort (2024/2025) to automate some of their own data processing workflows and how your work in the ✍️ Problem Set 2 will fit into their research.

📍 Session Details

- Date: Monday, 02 March 2026

- Time: 16:00 - 18:00

- Location: SAL.G.03

📋 Preparation

- If you want to get some initial experience with GitHub Actions, skim the GitHub Actions quickstart so the vocabulary is not entirely new.

- Browse a few company pages on the TPI Centre corporates page before the lecture. This will help you follow the guest segment.

Section A: Recap of the Food Engine

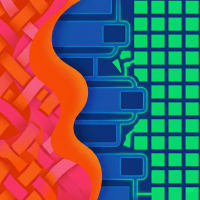

The data pipeline you built in W01-W05 for your first problem set had three stages:

The spider collected raw product data into data/scraped/. From there, a separate step queried OpenFoodFacts for NOVA classifications and wrote the combined result to data/enriched/, which your FastAPI application then served through typed Pydantic models.

In data engineering, this is an ETL pipeline (Extract, Transform, Load): extract data from Waitrose, transform it by matching against OpenFoodFacts, load the enriched result into a format your API can serve.

There is also ELT (Extract, Load, Transform): you load the raw data first and transform it in place afterwards. ELT lets you keep raw data intact and re-run transformations as your logic improves. Your ✍️ Problem Set 2 pipeline will likely follow an ETLT pattern: extract raw PDFs/websites and load them into a structured database, then read from that data storage, chunk and embed the text in Python, and load the results into a vector database. Storing the raw data first means you can re-run the embedding step with different parameters without re-fetching everything from source.
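To make the "store raw first, transform later" idea concrete, here is a minimal sketch. It assumes a hypothetical SQLite table called raw_docs and a naive fixed-width chunk_text helper; neither comes from the course materials, and the embedding step is left out entirely.

```python
import sqlite3


def load_raw(conn: sqlite3.Connection, docs: dict[str, str]) -> None:
    """Load step: store raw text once, keyed by source URL (hypothetical schema)."""
    conn.execute("CREATE TABLE IF NOT EXISTS raw_docs (url TEXT PRIMARY KEY, body TEXT)")
    conn.executemany("INSERT OR REPLACE INTO raw_docs VALUES (?, ?)", docs.items())
    conn.commit()


def chunk_text(text: str, size: int) -> list[str]:
    """Naive fixed-width chunking; real pipelines often split on sentences or tokens."""
    return [text[i:i + size] for i in range(0, len(text), size)]


def transform(conn: sqlite3.Connection, size: int) -> dict[str, list[str]]:
    """Transform step: reads only from storage, never from the original source."""
    rows = conn.execute("SELECT url, body FROM raw_docs ORDER BY url")
    return {url: chunk_text(body, size) for url, body in rows}


conn = sqlite3.connect(":memory:")
load_raw(conn, {"https://example.org/report": "abcdefghij"})

chunks_small = transform(conn, size=4)  # first attempt
chunks_large = transform(conn, size=5)  # re-run with a new parameter, no re-fetching
```

Because transform only reads from the database, changing size costs nothing on the network side: the raw text is fetched and stored once.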

👉 If you want to go deeper

Tools like Apache Airflow and Prefect implement these patterns at production scale. While we won’t use them in this course (they require cloud infrastructure that adds complexity at this stage), everything we build in this course will mirror the principles used by these tools. If you end up building larger and more complex pipelines on the cloud (AWS or Google Cloud or Azure), these tools will be a great way to automate your workflows.

📖 Read more: Astronomer: Best Practices for ETL and ELT Pipelines (for Apache Airflow).

Three design principles

To make things easier to debug and maintain on large-scale pipelines, it helps to keep these principles in mind when you design your data processing pipelines:

Atomicity: each stage of the pipeline should do one thing only.

Translated to our Problem Set 1 pipeline, the scraper stage should only scrape data from the source URLs and save it to a file, the enrichment step should only match products against the OpenFoodFacts API and save the result to a (new) file, and the API should only serve the data from the enriched file.

Idempotency: running the same step twice with the same input should produce the same output.

Translated to our Problem Set 1 pipeline, re-running the enrichment step should either skip products already matched or re-process them and arrive at the same result. Either way, the output doesn’t change between runs.
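A small sketch of the "skip what is already matched" variant, assuming each product is a dict with a barcode key and that lookup is a hypothetical callable standing in for the OpenFoodFacts request. Your own Problem Set 1 code will have looked different.

```python
def enrich(products: list[dict], lookup) -> list[dict]:
    """Return products with a 'nova' field, skipping those already matched.

    `lookup` is a hypothetical callable mapping a barcode to a NOVA group (or None).
    """
    enriched = []
    for product in products:
        if "nova" in product:
            enriched.append(dict(product))  # already matched: copy through unchanged
            continue
        enriched.append({**product, "nova": lookup(product["barcode"])})
    return enriched


calls = []


def fake_lookup(barcode: str):
    """Stand-in for the API call; records every barcode it is asked about."""
    calls.append(barcode)
    return {"111": 4, "222": 1}.get(barcode)


raw = [{"name": "Crisps", "barcode": "111"}, {"name": "Lentils", "barcode": "222"}]
first = enrich(raw, fake_lookup)
second = enrich(first, fake_lookup)  # re-run the step on its own output
```

The second run returns the same records as the first and makes no further lookups, which is the property the principle asks for.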

Modularity: each stage should be independent of the internal logic of other stages.

Translated to our Problem Set 1 pipeline, the scraper didn’t need to know about NOVA classifications, and the API didn’t need to know how to scrape. Each piece could be tested and modified on its own.
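One way to picture modular stages is as functions that only communicate through files. The sketch below is illustrative rather than a copy of the Problem Set 1 code: the file names, the hard-coded row, and the lookup parameter are all assumptions made for the example.

```python
import json
import tempfile
from pathlib import Path


def scrape_stage(out_path: Path) -> None:
    """Stage 1: write raw records. A hard-coded row stands in for the spider's output."""
    rows = [{"name": "Oat drink", "barcode": "333"}]
    out_path.write_text(json.dumps(rows))


def enrich_stage(in_path: Path, out_path: Path, lookup) -> None:
    """Stage 2: read raw records, add a NOVA group, write a new file."""
    rows = json.loads(in_path.read_text())
    for row in rows:
        row["nova"] = lookup(row["barcode"])
    out_path.write_text(json.dumps(rows))


def load_for_api(in_path: Path) -> list[dict]:
    """Stage 3: the API only needs the enriched file, not the code that produced it."""
    return json.loads(in_path.read_text())


workdir = Path(tempfile.mkdtemp())
scraped, enriched = workdir / "scraped.json", workdir / "enriched.json"

scrape_stage(scraped)
enrich_stage(scraped, enriched, lookup=lambda barcode: 3)  # fake lookup, no network
served = load_for_api(enriched)
```

Since enrich_stage receives its lookup as an argument, it can be tested with a fake function and no network access, without touching the scraper or the API.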

📖 Source: Astronomer: Best Practices for ETL and ELT Pipelines

These three principles should guide your ✍️ Problem Set 2 design.

🤔 Why bother?

You may feel that creating a pipeline is completely overkill for the type of things we build in this course. You may wonder “I could write all this code in a single Python script! Why bother with a pipeline?!”

It is true that our projects in this course are smaller in complexity and volume than what the data pipeline tools that inspire us are designed for. However, we want to train you to perform advanced data manipulation should the need to work at large scale arise.

This is why, for example, I teach scrapy projects in this course as opposed to using just a single Python script with requests and BeautifulSoup.

Section B: Automated Workflows with GitHub Actions

The core idea

So far, you have been running pipeline steps manually in your terminal. In the 🖥️ W05 Lecture, you saw how to develop a common interface for running the pipeline steps from the terminal, but that still requires you to run the commands manually.

There are tools that allow you to define your pipeline steps in a configuration file that runs automatically on a remote machine for you. Today we will practice using GitHub Actions because it is free for public repositories and has a generous free tier for private ones.

Defining your pipeline steps in a GitHub Actions workflow

A GitHub Actions workflow is a list of commands that run on a machine you do not own. You define them in a configuration file (YAML format) and GitHub executes them on a remote machine when triggered. Each of your local terminal commands becomes a few lines in that file:

Local ➜ GitHub Actions YAML

python pipeline.py scrape ➜

    - name: Scrape products
      run: python pipeline.py scrape

python pipeline.py enrich ➜

    - name: Enrich products
      run: python pipeline.py enrich
      env:
        OFF_API_KEY: ${{ secrets.OFF_API_KEY }}

python pipeline.py serve ➜

    - name: Serve API
      run: python pipeline.py serve

We also need to tell the remote machine how to set up the Python environment (pretty much like how we need to tell others in our README or CONTRIBUTING files):

Local ➜ GitHub Actions YAML

    # If using just pip (acceptable):
    pip install -r requirements.txt

➜

    - name: Set up Python
      uses: actions/setup-python@v5
      with:
        python-version: '3.11'
        cache: 'pip'
    - name: Install dependencies
      run: pip install -r requirements.txt

    # If using conda:
    conda env create -f environment.yml
    conda activate food

➜

    # Restore cached packages first so that environment creation can reuse them
    - name: Cache conda packages
      uses: actions/cache@v4
      with:
        path: ~/conda_pkgs_dir
        key: conda-${{ runner.os }}-${{ hashFiles('environment.yml') }}
    - name: Set up conda
      uses: conda-incubator/setup-miniconda@v3
      with:
        miniforge-version: latest
        activate-environment: food
        environment-file: environment.yml

Notice that pip install maps to a run: line, while conda setup and caching map to pre-built actions with uses:. The cache lines tell GitHub to save downloaded packages between workflow runs, so subsequent runs skip re-downloading dependencies that haven’t changed. The cache key includes a hash of your dependency file (requirements.txt or environment.yml), which means the cache refreshes automatically whenever you add or update a package.

📖 Read more: Dependency caching in GitHub Actions

The minimal workflows below show both options end to end.

Minimal working workflows

With pip:

    name: Food Engine pipeline
    on:
      push:
        branches: [main]
      schedule:
        - cron: '0 2 * * 1' # Every Monday at 2am UTC
    jobs:
      pipeline:
        runs-on: ubuntu-latest
        steps:
          - uses: actions/checkout@v4
          - name: Set up Python
            uses: actions/setup-python@v5
            with:
              python-version: '3.11'
              cache: 'pip'
          - name: Install dependencies
            run: pip install -r requirements.txt
          - name: Scrape products
            run: python pipeline.py scrape
          - name: Enrich products
            run: python pipeline.py enrich
            env:
              OFF_API_KEY: ${{ secrets.OFF_API_KEY }}
          - name: Serve API
            run: python pipeline.py serve

The cache: 'pip' line tells setup-python to cache downloaded packages between runs, so subsequent installs are faster. GitHub manages the cache automatically based on your requirements.txt.

With conda:

    name: Food Engine pipeline
    on:
      push:
        branches: [main]
      schedule:
        - cron: '0 2 * * 1' # Every Monday at 2am UTC
    jobs:
      pipeline:
        runs-on: ubuntu-latest
        defaults:
          run:
            shell: bash -el {0}
        steps:
          - uses: actions/checkout@v4
          # Restore cached packages first so that environment creation can reuse them
          - name: Cache conda packages
            uses: actions/cache@v4
            with:
              path: ~/conda_pkgs_dir
              key: conda-${{ runner.os }}-${{ hashFiles('environment.yml') }}
          - name: Set up conda
            uses: conda-incubator/setup-miniconda@v3
            with:
              miniforge-version: latest
              activate-environment: food
              environment-file: environment.yml
          - name: Scrape products
            run: python pipeline.py scrape
          - name: Enrich products
            run: python pipeline.py enrich
            env:
              OFF_API_KEY: ${{ secrets.OFF_API_KEY }}
          - name: Serve API
            run: python pipeline.py serve

The defaults: run: shell: bash -el {0} block is required for conda activation to work in GitHub Actions (without it, conda activate silently fails). setup-miniconda replaces what you do locally with conda env create -f environment.yml && conda activate food: it creates the environment and activates it for all subsequent steps.

The actions/cache step caches downloaded conda packages between workflow runs. The cache key includes a hash of environment.yml, so the cache refreshes whenever your dependencies change.

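Both workflows share the same on: block, which sets two triggers: pushes to main and a weekly cron schedule. The three pipeline stages (scrape, enrich, serve) run in sequence, matching what you would type locally. Bear in mind that serve starts a long-running uvicorn process, so on a hosted runner that last step keeps the job busy until it is cancelled or hits the job timeout; in a scheduled workflow you may prefer to stop after enrich.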

The actions/checkout, actions/setup-python, actions/cache, and conda-incubator/setup-miniconda steps are all pre-built actions from the GitHub Marketplace. The Marketplace has thousands of community-maintained actions for common tasks, so you rarely need to write setup logic from scratch.

📖 Read more: Writing GitHub Actions workflows | GitHub Actions Marketplace

Cron syntax

The line cron: '0 2 * * 1' in the workflow above is a cron expression. Cron is a scheduling format from Unix systems, and GitHub Actions uses it for the schedule trigger. The five fields represent:

| Field | Value | Meaning |
|---|---|---|
| Minute | 0 | At minute 0 |
| Hour | 2 | At 2am (UTC) |
| Day of month | * | Every day |
| Month | * | Every month |
| Day of week | 1 | Monday |

A few more examples: '0 9 * * *' runs daily at 9am UTC, '0 0 1 * *' runs at midnight on the first of every month, and '*/15 * * * *' runs every 15 minutes.
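If it helps to see the five fields as code, here is a deliberately simplified matcher. It handles only *, */n and single integers (no lists or ranges), and it requires every field to match, which is enough for the expressions above but is not a full cron implementation. The function names are made up for this sketch.

```python
from datetime import datetime


def field_matches(field: str, value: int, start: int = 0) -> bool:
    """Handle only '*', '*/n' and a single integer (no lists or ranges)."""
    if field == "*":
        return True
    if field.startswith("*/"):
        # Steps count from the first valid value of the field (0 or 1)
        return (value - start) % int(field[2:]) == 0
    return int(field) == value


def cron_matches(expr: str, when: datetime) -> bool:
    """Check whether `when` (treated as UTC) matches a simplified cron expression."""
    minute, hour, day, month, weekday = expr.split()
    # Python counts Monday as 0; cron counts Sunday as 0 and Monday as 1
    cron_weekday = (when.weekday() + 1) % 7
    return all([
        field_matches(minute, when.minute),
        field_matches(hour, when.hour),
        field_matches(day, when.day, start=1),
        field_matches(month, when.month, start=1),
        field_matches(weekday, cron_weekday),
    ])


monday_2am = datetime(2026, 3, 2, 2, 0)  # Monday, 02 March 2026, 02:00
weekly = cron_matches("0 2 * * 1", monday_2am)        # the schedule used in the workflow
daily_9am = cron_matches("0 9 * * *", monday_2am)     # wrong hour for this timestamp
quarter_hour = cron_matches("*/15 * * * *", monday_2am)
```

The weekday conversion is the part that most often trips people up: Python and cron number the days of the week differently.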

You don’t need to memorise the format. crontab.guru lets you type an expression and see what it means in plain English, and crontab-generator.com builds expressions from dropdowns.

Secrets management

The line OFF_API_KEY: ${{ secrets.OFF_API_KEY }} pulls a secret from your repository settings. You set secrets in Settings > Secrets and variables > Actions on GitHub. GitHub masks the value in workflow logs, and it never appears in the YAML file itself.

Secrets must never be hardcoded in YAML files or committed to git. If you accidentally commit an API key, rotate it immediately: the key is in your git history even if you delete the file.
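On the Python side, the secret arrives as an ordinary environment variable. A minimal sketch of reading it, assuming your code uses the same OFF_API_KEY name as the workflow; get_api_key and mask are hypothetical helpers, not part of any library.

```python
import os


def get_api_key(name: str = "OFF_API_KEY") -> str:
    """Read a secret from the environment and fail loudly if it is missing."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(
            f"{name} is not set; add it as a repository secret or export it locally"
        )
    return value


def mask(secret: str) -> str:
    """Keep only the last four characters, e.g. before writing a log line."""
    return "*" * max(len(secret) - 4, 0) + secret[-4:]
```

The same code works on your laptop (after exporting the variable in your shell) and on the runner, so nothing secret needs to live in the repository.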

📖 Read more: GitHub encrypted secrets

💡 In the 🖥️ W05 Lecture, you saw how click can wrap pipeline steps into a single script with subcommands. If you wrote a pipeline.py (or run_pipeline.py) with click, those same commands work identically inside a GitHub Actions workflow. The workflow just calls them on a remote machine instead of your laptop.

Section C: Debugging with VS Code

You have been writing increasingly complex scripts since W02. If something goes wrong, you probably add print() statements, re-run, read the output, add more prints, and repeat. This works, but it scales poorly. The VS Code debugger lets you pause execution at any line and inspect every variable without modifying your code.

A concrete scenario

Suppose a script that matches products against an external API silently returns None for half the records and you do not know why. Instead of sprinkling print statements, you can:

- Set a breakpoint inside the matching logic by clicking in the gutter (the space to the left of the line numbers) in VS Code. A red dot appears.

- Run in debug mode by pressing F5 or opening the Run and Debug panel (the play button with a bug icon in the sidebar).

- Step through with F10 (step over the current line) and F11 (step into a function call).

- Inspect variables in the Variables panel on the left. You can see every local and global variable and their current values.

- Watch specific expressions by adding them to the Watch panel. For example, watch resp.status_code or product.get("barcode") to track values as you step through.

The debugger shows you the actual state of your programme at each line, which is faster and more reliable than guessing from print output.
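Here is a toy version of the scenario to practise on. The match_product function and the index dictionary are invented for this sketch (a dictionary stands in for the external API), and the bug is planted on purpose.

```python
def match_product(product: dict, index: dict[str, int]):
    """Return the NOVA group for a product, or None when no match is found."""
    barcode = product.get("barcode")
    # breakpoint()  # uncomment to pause here from a terminal; in VS Code, click the gutter
    if barcode is None:
        return None  # silent failure: the record never had a barcode
    return index.get(barcode)  # silent failure: unknown keys also give None


index = {"111": 4, "222": 1}  # hypothetical lookup table standing in for the API
products = [
    {"name": "Crisps", "barcode": "111"},
    {"name": "Lentils", "barcode": " 222 "},  # stray whitespace from scraping
    {"name": "Mystery item"},                  # no barcode key at all
]
results = [match_product(p, index) for p in products]
```

Two of the three records come back as None, for two different reasons. Pausing on the first line of the function and watching repr(barcode) makes both visible immediately: one value is None and the other carries extra spaces.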

If you are in a pure terminal environment without VS Code, ipdb does the same thing. Install with pip install ipdb, then add import ipdb; ipdb.set_trace() at the point you want to inspect.

📖 Read more: VS Code: Debugging Python | Debugging Scrapy in VS Code (for tools that require running terminal commands like scrapy crawl)

🔧 Optional Live Activity

If we have the time, we will try creating a GitHub Actions workflow in a private repo to see the green tick. If we run out of time, you can try this at home: create a repo, add a simple workflow that runs python --version, push it, and check the Actions tab.

Section D: Guest Segment, Ruikai Liu (Jorb.ai)

Ruikai Liu is a former DS205 student (Final-year BSc Economics, LSE, cohort 1 of this course) who built Jorb.ai, a job search and application tracker for finance recruiting. The product tracks 500+ mainstream firms and 3,000+ boutique firms.

Jorb.ai does what this course teaches: scrape career pages, structure the data, and serve it through a searchable interface. Ruikai will walk through his architecture and discuss the engineering decisions he faced when scaling from a personal project to a product with real users.

Section E: Guest Segment, TPI Centre (Sylvan + team)


The Transition Pathway Initiative (TPI) Centre evaluates how companies are managing their transition to a low-carbon economy. Their analysts score companies on Carbon Performance and Management Quality using extensive analysis of messy, unstructured data.

Guest Speaker: Sylvan Lutz (Policy Officer, ASCOR Analyst)

Sylvan and his team will explain what TPI analysts do day-to-day and how they are currently using pipeline outputs from DS205 cohort 1 in their research. Their workflows directly inform what you’ll build for ✍️ Problem Set 2.

📋 Before the lab tomorrow: Browse a few company pages on the TPI corporates page. Look at what information is available for each company, how assessments are structured, and what a Carbon Performance score looks like. This will help you engage with the lab discussion.

🎥 Session Recording

The lecture recording will be available on Moodle by the afternoon of the lecture.

Appendix | Reference Links

Course links

- 💻 W07 Lab

- 📓 Syllabus

- 🤖 DS205 AI Tutor
