🗓️ Week 01

Welcome to the course

LSE DS202 – Data Science for Social Scientists

19 Jan 2026

Who we are

Your lecturer

Assist. Prof. (Education)

LSE Data Science Institute

lecturer

course convenor

- PhD in Computer Science (University of Twente, Netherlands)

- Background: Engineering, Databases, Health Informatics, ML for cybersecurity

- Formerly Research Associate at King’s College London and the University of Edinburgh (School of Informatics)

decision support systems

machine learning applications

databases

provenance

ethical AI/XAI

Teaching Assistants

Guest Lecturer

Data Science Institute

DPhil in Politics (Oxford University)

guest teacher

Data Scientist

MSc in Statistical Science (Oxford University)

guest teacher

MPhil/PhD candidate in Health Policy and Health Economics (LSE)

Research Consultant: Development Impact Evaluations (World Bank Group)

guest teacher

Administrative Support

Teaching and Learning Administrator

LSE Data Science Institute

Write an e-mail to Kevin:

- if you cannot find the lecture recording on Moodle

- when you need an extension for an assignment (👉 check LSE’s extension policy)

- to request a class group change (you will be asked to provide a reason for this)

- to inform us of any other issues that may affect your studies

The Data Science Institute

- This course is offered by the LSE Data Science Institute (DSI).

- DSI is the hub for LSE’s interdisciplinary collaboration in data science

- ⏭️ Let’s see a few activities that might be of interest to you

Sign up for DSI events at lse.ac.uk/DSI/Events

CIVICA Seminar Series

Follow the seminar series: 🔗 Link

Careers in Data Science

Hear from alumni or industry experts about their career paths and how they got to where they are today.

Latest events:

🗓️ Data Science across industries (03 December 2024- 4.00 to 5.30pm)

Machine learning is transforming large parts of the economy, and data scientists have the opportunity to apply their skills in an incredibly broad variety of domains. The technical field is progressing rapidly and professional roles are in continuous development as companies navigate successive waves of technological and economic change. Data scientists must therefore craft skill paths which balance focus on rapid learning with capabilities complementing their domain, organisations and wider industry.

Drawing on his experience from startups, consulting and tech, Christian Svalesen, Senior Machine Learning Engineer at SoundCloud will provide insights into what data science roles and projects can involve across industries. He will share advice on how students can prepare and develop through their professional journey.

Read more about this series of events: 🔗 Link

🗓️ Navigating Data Science from Academia to Media, and Beyond (23 October 2024 - 4.30 to 6pm)

With the rise in adoption of AI/ML technologies and the increasing demand for data-driven decision-making, data science has become a vital component across many industries, including media. As data science transforms the media landscape - enhancing content personalisation, optimising conversion strategies, and improving audience engagement, it is also becoming an increasingly popular tool for addressing complex business challenges. Navigating a role in this field can be both exciting and challenging.

Tabtim Duenger, Senior Data Scientist at The Economist, and Riya Chhikara, Data Scientist at The Economist, both LSE graduates, will offer insights into their paths to entering the field of data science. They will discuss their experiences in landing their first roles, negotiating their functions and responsibilities within the media sector, and how they use these experiences and networks to continue guiding their careers.

Read more about this series of events: 🔗 Link

Industry “field trips”

‘Winners’ of the upcoming Bank of England trip will be announced soon!

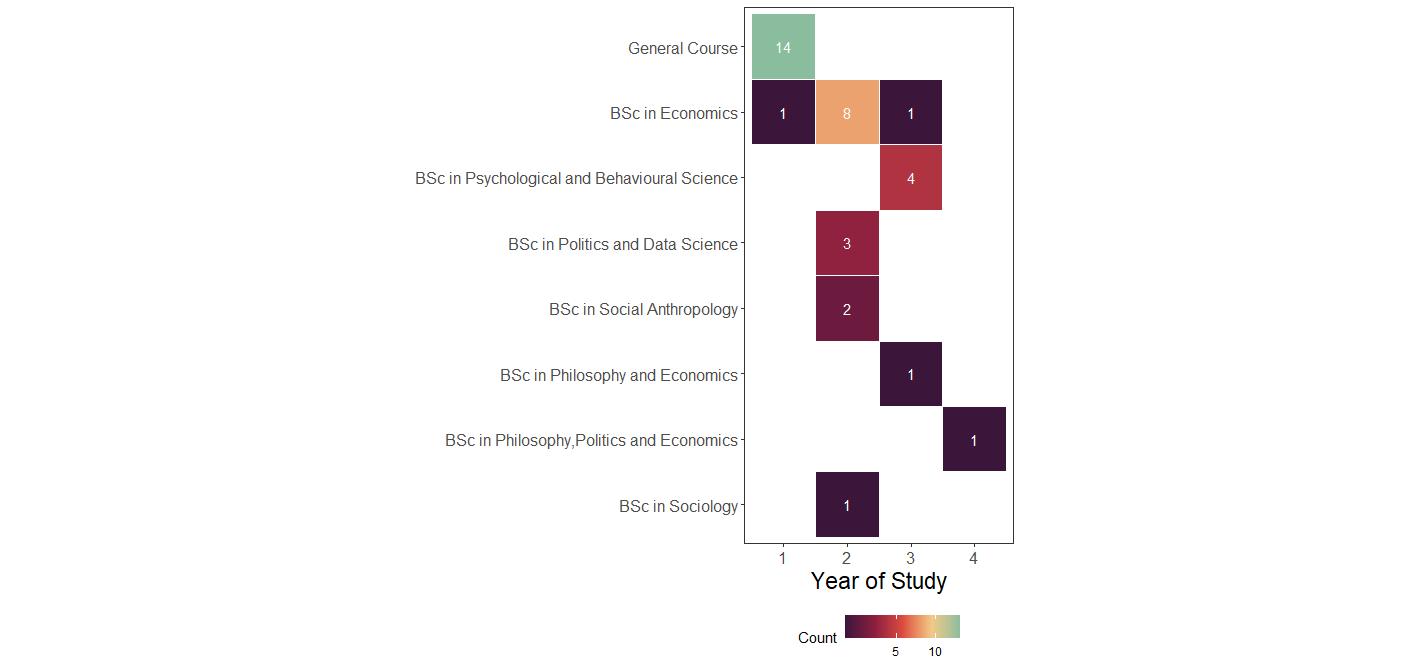

Who are you?

| Programme | Freq |

|---|---|

| General Course | 14 |

| BSc in Economics | 10 |

| BSc in Psychological and Behavioural Science | 4 |

| BSc in Politics and Data Science | 3 |

| BSc in Social Anthropology | 2 |

| BSc in Philosophy and Economics | 1 |

| BSc in Philosophy, Politics and Economics | 1 |

| BSc in Sociology | 1 |

| Year | Count |

|---|---|

| 1 | 17 |

| 2 | 10 |

| 3 | 11 |

| 4 | 2 |

Who are you? (cont.)

Key insight: Diverse backgrounds → diverse perspectives on DS problems

Course Rep Selection:

- We’ll elect a course representative in Week 2

- Important role: Represent student voice, provide feedback to teaching team

- Think about whether you’d like to nominate yourself!

What is this course about?

Course Brief

- Focus: learn and understand the most fundamental machine learning algorithms

- No neural networks, no deep learning, no large-scale data

- How: practical use of machine learning techniques and their metrics, applied to relevant data sets

- Some but not a lot of theory, math proofs and derivations

- Lots of coding, examples and exercises

Two Critical Principles:

1. Learn to Learn

- We provide essential building blocks, not everything on a platter

- You’ll need to read documentation, explore independently

- Homework is not optional - it’s where deep learning happens

- Ask questions, but try to solve problems first

2. No Single “Right Answer”

- Data science is about justified choices, not just code

- You must explain WHY you chose a model, parameter, metric

- Interpretation matters as much as implementation

- Context of the problem/dataset shapes your decisions

🎯 Learning Objectives

- Understand the fundamentals of the data science approach, with an emphasis on social scientific analysis and the study of the social, political, and economic worlds.

- Understand how classical methods such as regression analysis or principal components analysis can be treated as machine learning approaches for prediction or data mining.

- Know how to fit and apply supervised machine learning models for classification and prediction.

- Know how to evaluate and compare fitted models, and improve model performance.

- Use applied computer programming, including the hands-on use of programming through course exercises.

- Apply the methods learned to real data through hands-on exercises.

- Integrate the insights from data analytics into knowledge generation and decision-making.

- Understand an introductory framework for working with natural language (text) data using techniques of machine learning.

- Learn how data science methods have been applied to a particular domain of study (applications).

📚 Course Structure

How will this course be taught?

How do I prepare for this course?

🧑🏻‍💻 Labs (90 min each week)

- Purpose: introduce new concepts and tools which will only be explored in more detail in the lectures

- Why? So you can come to the lectures with good questions!

- Typically:

- your class teacher might give you some context about the new tools/algorithms

- you will be given time to work on something by yourself

- there will be moments to share your interpretation of the results of algorithms with the classroom

- You have to attend the lab you are enrolled in. You can’t switch on the day

Important

There might be some preparatory work to do before each lab!

Always check Moodle/the webpage at least a day before coming to the lab.

More about 🧑🏻‍💻 Labs (90 min each week)

Each week, you will have a roadmap of what to do.

The roadmap will typically contain the following elements:

| Type of activity | Description |

|---|---|

| 🧑🏻‍🏫 TEACHING MOMENT | Your class teacher deserves your full attention |

| 🎯 ACTION POINTS | Time to follow the steps in the roadmap. Try it for a bit, but if you get stuck, call your class teacher. |

| 👥 IN PAIRS/GROUPS | You will benefit from completing that task with your peers more than doing it alone |

| 🗣️ CLASSROOM DISCUSSION | Your class teacher will facilitate a discussion about the task |

| 📝 SUBMISSION | Submit your work |

👉 Now, let’s navigate our Moodle page to see the 📓 Syllabus and to talk about ✍️ Assessments & Feedback.

If you are reading this but you are not an LSE student, the same content is available on the course’s 🌐 public website

👩🏻‍🏫 Lectures (2 hours per week)

- The first sessions will have slides, but mostly, it will be live coding

- Feel free to code along with your lecturer

- Pair/group exercises and discussions to interpret results

- Bring a laptop if you can! (💡 you can borrow one from the library)

- Recorded sessions will be available on Moodle on the next working day

Programming

Pre-requisites and assumptions

We assume that you have some basic knowledge of:

- Descriptive Statistics: if you took ST102, you should be fine.

- Some linear algebra: nothing crazy, mostly matrix operations (simpler than MA107).

- Programming: it’s ok if you are new to Python, but do reserve some extra hours in the first weeks to practice the basics.

New to Python?

- LSE Digital Skills Lab offers pre-sessional Python workshops

- Check: lse.ac.uk/dsl

- Highly recommended if you’re starting from scratch!

Python Environment Management

Why it matters:

- Different packages need different Python versions

- ML/AI packages often lag behind latest Python release

Our approach in this course:

Weeks 1-3: Python 3.13

- Latest Anaconda distribution

- Great for pandas, numpy, matplotlib, seaborn

- Basic data science work

Week 4 onwards: Python 3.12

- Better support for scikit-learn, statsmodels

- Some advanced ML packages not yet on 3.13

- We’ll guide you through the switch
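
Because the course moves between Python versions, it can help to confirm which interpreter your environment is actually running before installing packages. A minimal sketch using only the standard library (the helper name `python_version_label` is illustrative, not part of the course materials):

```python
import sys


def python_version_label(version_info=sys.version_info):
    """Return the interpreter version as 'major.minor', e.g. '3.12'."""
    return f"{version_info.major}.{version_info.minor}"


# Prints the version of whichever interpreter runs this script
print("Running Python", python_version_label())
```

If the printed version is not the one you expected, you are probably in a different environment from the one you set up.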

Generative AI Tools Policy

There are three official positions at LSE:

Position 1: No authorised use of generative AI in assessment. (Unless your Department or course convenor indicates otherwise, the use of AI tools for grammar and spell-checking is not included in the full prohibition under Position 1.)

Position 2: Limited authorised use of generative AI in assessment.

Position 3: Full authorised use of generative AI in assessment.

👉 This is the position we adopt in this course

Source: School position on generative AI, LSE Website, September 2024

Responsible Use of AI - REQUIRED

Our Policy - Responsible Use (NOT Optional!):

✅ You CAN use:

- ChatGPT, Copilot, Claude, etc. for lectures, labs, assignments

⚠️ You MUST:

- Acknowledge every use in your submissions

- Explain HOW you used it (see examples below)

- Check and understand all AI-generated code/content

- Critically evaluate AI suggestions against course materials

❌ You CANNOT:

- Use AI when explicitly told not to

- Submit AI output without understanding it

- Claim AI work as entirely your own

Example acknowledgment:

“I used ChatGPT to debug my pandas merge operation. It suggested using pd.merge() with on='date', but this produced duplicates. I revised it to include how='left' after reviewing the pandas documentation.”

Why this matters:

- Part of our GenAI Learning research project

- Learning to use AI responsibly is a key skill

- We’re studying how it affects your learning

👉 Full policy on Moodle - read it carefully!

Teaching Philosophy

Empirical, experience-focused learning:

- Learning by doing (trial and error is encouraged!)

- You’ll often encounter new concepts before formal explanations

- Struggle is part of the process - ask “dumb questions”

- Help and learn from each other

This is not a “spoon-feeding” course:

- We provide building blocks and guidance

- You build understanding through practice and exploration

- Read documentation, try things, bring us your questions

Python Comfort Check

Quick Poll (Mentimeter):

How comfortable are you with Python basics? (1-5 scale)

- 1 = Never used it

- 5 = Very comfortable

Have you used pandas before? (Yes/No/A little)

Have you used numpy before? (Yes/No/A little)

Results will guide our Python review depth

- If >70% comfortable: Quick 10-min refresher, focus on pandas nuances

- If 40-70% comfortable: Moderate pace (15 min), emphasize pandas

- If <40% comfortable: Full review (25 min) - we’ll catch you up!

What do we mean by data science?

Data science is…

“[…] a field of study and practice that involves the collection, storage, and processing of data in order to derive important 💡 insights into a problem or a phenomenon.

Such data may be generated by humans (surveys, logs, etc.) or machines (weather data, road vision, etc.),

and could be in different formats (text, audio, video, augmented or virtual reality, etc.).”

The academic possibilities

- Humans and machines nowadays generate A LOT of data ALL THE TIME

- It has become very cheap to collect and store this data

- This abundance of data opens up new possibilities for research & policy-making

New data to answer old questions:

- How do rumours spread?

- How can we predict unemployment rates accurately?

New questions enabled by new data/new technologies:

- Is social media a threat to democracy/public order?

- Is generative AI a threat to the job market?

We hope that in this reformulated version of the DS202 course, you will learn how to tackle similar questions that are relevant to your field of study.

Applications in YOUR Fields

For Economics students (10) & General Course (14, mostly business/econ):

- Predicting UK inflation trends using consumer spending data

- Analyzing income inequality patterns across London boroughs

- Forecasting housing market shifts using property transaction data

For Politics & Data Science students (3):

- Tracking public sentiment on Brexit using survey data over time

- Predicting UK election outcomes at the constituency level

- Analyzing parliamentary voting patterns to identify party factions

For Psychology & Behavioural Science students (4):

- Understanding mental health trends among university students from NHS data

- Predicting therapy dropout rates based on early session patterns

- Analyzing social media usage and wellbeing correlations

For Sociology & Anthropology students (3):

- Mapping gentrification patterns in East London using census data

- Understanding migration flows and integration outcomes

- Analyzing cultural consumption patterns across UK demographics

Data Science and Social Science

👉 Traditional Statistics in the social sciences: the goal is typically explanation

👉 Data science: the focus is frequently put more on data exploration and prediction

- Data science is heavily influenced by computer science and engineering

- There is a strong emphasis on computational efficiency and scalability (due to big data)

- Many of the algorithms and methods you will learn in this course can be used in both contexts (explanation vs prediction)

- We will try to highlight the differences in these approaches throughout the course

The Data Science Workflow

It is often said that 80% of the time and effort spent on a data science project goes to data gathering, cleaning, and preparation.

In this course:

- We focus mainly on the “20%” (exploration → ML → insights)

- But you’ll definitely see the “80%” reality too!

- Most datasets we give you are already clean…

- …but you’ll experience the data wrangling challenge in assignments

Note

If you want to practice the “80%” more, check out our other course: DS105.

Real Example: Bank of England Interest Rates

The Challenge

The Question: “Will the Bank of England raise, lower, or hold interest rates next month?”

Why it matters:

- Affects mortgages for homeowners

- Changes savings account interest

- Impacts business loan costs

- Influences overall UK economic growth

This was a real assignment from last year

By the end of this course, you’ll be able to tackle this problem yourself!

Step 0: What Data Do We Need?

The research phase (requires domain knowledge!):

What factors influence BoE decisions?

Identify relevant indicators:

- Consumer Confidence Index (CCI): Are people optimistic about spending?

- Inflation (CPIH): How fast are prices rising?

- GDP Growth: Is the economy expanding or contracting?

- Exchange Rates: GBP vs EUR/USD strength

- 10-year Gilt Yields: What do bond markets expect?

- Unemployment Rate: Labor market health

The reality check:

- Is this data available? Where?

- What sources: ONS, OECD, Bank of England, Federal Reserve

- In what format? How far back does it go?

- Can we legally use it?

Important

This IS data science: Finding the right data to answer your question comes first!

Step 1: Gather the Data

Download from multiple sources:

Bank of England: Interest rate decisions

- First discovery: Decisions don’t happen every month!

- They occur roughly every 6-8 weeks

Economic indicators from different sources:

| Indicator | Source |

|---|---|

| Consumer Confidence Index (CCI) | OECD |

| CPIH inflation | ONS |

| GDP monthly estimates | ONS |

| GBP/EUR and GBP/USD exchange rates | Bank of England |

| 10-year gilt yields | Federal Reserve Bank of St. Louis |

| Unemployment rate | ONS |

Warning

The challenge: Different sources = different formats, different frequencies, different date conventions!

Step 2: The Alignment Challenge

The tricky bit:

For each BoE decision date, calculate 3-month average of each indicator

Example:

- Decision date: 06/05/1997

- Calculate average GDP for: May 1997, April 1997, March 1997

- Repeat separately for all 6 other indicators

- Align everything to the decision date

Why this matters:

- BoE makes decisions based on recent economic context

- Need to capture the “state of the economy” at decision time

- Must handle:

  - Monthly vs quarterly data

  - Missing values

  - Different date formatting (UK vs US formats!)

  - Alignment of different time series

Tip

This is the 80%: Getting data into the right shape for analysis

Step 3: Only NOW Can You Predict

After all that preparation:

- Explore patterns: Which indicators correlate with rate increases?

- Build models: Learn from historical decisions (Weeks 5-10 of this course)

- Make predictions: Up, down, or hold?

- Evaluate: How accurate were we?

A major challenge - Distribution Shift:

- Models learn from past BoE decisions

- But what if the economic environment changes fundamentally?

- Examples: Post-2008 financial crisis, COVID-19 pandemic, Brexit

- Past patterns may not apply to new contexts

- We’ll discuss this more in Week 11

Important

The reality: No model is perfect. Your job is to:

- Build the best model you can with available data

- Understand its limitations

- Communicate uncertainty clearly

- Justify your modeling choices

The Journey Ahead

Key insight: “By the time you’re ready to do ‘machine learning,’ you’ve already done the hard work”

Your journey in this course:

Weeks 1-4: Data Foundation

- Data handling with pandas

- Data cleaning and transformation

- Exploratory data analysis

- Visualization

Weeks 5-10: Machine Learning

- Classification algorithms

- Regression models

- Model evaluation

- Parameter tuning

- Interpreting results

Week 11: Advanced Topics

- Distribution shift and model limitations

- Ethical considerations

- Real-world deployment challenges

The 80/20 Reality

Before: Messy reality

- Merged cells, inconsistent formatting

- Multiple sheets with different structures

- Date formats: “Jan-97”, “01/1997”, “1997-01”

- Missing values marked as “..”, “N/A”, or blank

- Column names with special characters

- Footnotes mixed with data

After: Clean and ready

- Consistent date format (YYYY-MM-DD)

- No missing values (or properly handled)

- Clean column names

- One observation per row

- Ready for analysis!
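
As a rough sketch of the “before → after” step, the snippet below normalises the three date layouts and the missing-value markers listed above using only the standard library. The helper names `parse_month` and `parse_value` are hypothetical, and mapping each month to its first day is an assumption made for illustration; in practice you would more likely do this with pandas.

```python
from datetime import datetime

# Date layouts and missing-value markers taken from the "Before" list
DATE_FORMATS = ("%b-%y", "%m/%Y", "%Y-%m")
MISSING_MARKERS = {"..", "N/A", ""}


def parse_month(raw):
    """Return the first day of the month as YYYY-MM-DD, or None if unparseable."""
    text = raw.strip()
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).strftime("%Y-%m-%d")
        except ValueError:
            continue  # try the next known layout
    return None


def parse_value(raw):
    """Convert a raw cell to float, mapping missing-value markers to None."""
    text = raw.strip()
    if text in MISSING_MARKERS:
        return None
    return float(text)


for raw in ["Jan-97", "01/1997", "1997-01"]:
    print(raw, "->", parse_month(raw))
```

All three inputs come out as 1997-01-01, which is the kind of consistency the “After” list asks for.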

Note

The message: Even government data needs serious cleaning. You’ll spend most of your time here, but that’s where real insights emerge.

☕️ Time for a break

Coming up next: Python Review & Setup

Take a 10-minute break, then we’ll dive into Python!

Let’s get more technical

Python Review & Environment Setup

Python vs R

A few stats

- Python ranked number 1 in the TIOBE Programming Community index of January 2025 (rating of 23.28% and change of +9.32% compared to January 2024) vs R at number 18 (rating of 1.00% and change of +0.27%)

- Python at the top of the IEEE Spectrum rankings of programming languages of 2024, in two aspects of popularity measured. R sits at rank 20 in the “Spectrum” ranking, rank 17 in the “Trending” one and at rank 21 for the “Jobs” ranking

- the PYPL PopularitY of Programming Language index also has Python at the top in January 2025 (share 29.8% with +1.7% increase). R ranks at number 6 (share 4.63% and no increase)

Python vs R

- Python is a general-purpose programming language

- It is used for web development, scientific computing, data science, advanced machine learning tools (deep learning), etc.

- R is more niche. It is a programming language created for statistical computing

- You can do many other things with R, but it is mostly used for statistics and general data science (except for heavy Machine Learning)

Some Python basics

Data types

Python basics

Python lists

- We can put basic data types (i.e. strings, integers, floats) in collections of data (e.g. lists, dictionaries, tuples)
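
A minimal sketch of creating a list and then appending to it (the variable name `my_list` is chosen here for illustration):

```python
my_list = [1, 2, 3, 4]
print(my_list)

my_list.append(5)  # append adds one element to the end, in place
print(my_list)
```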

returns:

[1, 2, 3, 4]

You could use the append method to add elements to a list:

returns:

[1, 2, 3, 4, 5]

Python basics

Python lists (cont.)

- Lists don’t need to contain a single data type

- You can define lists of lists and lists of lists of lists, etc…
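
Both points can be sketched in a few lines (the names `mixed` and `nested` and their values are illustrative):

```python
# A single list can hold several data types at once
mixed = [1, "two", 3.0, True]

# Lists can be nested to any depth
nested = [[1, 2], [3, [4, 5]]]

# Index one level at a time: second item, then its second item, then first
print(nested[1][1][0])  # 4
```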

Python basics

Other types of data collections

- Aside from lists, you also have tuples
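
A minimal sketch of creating and indexing a tuple (the name `my_tuple` is an assumption for illustration):

```python
my_tuple = (1, 2, 3, 4)
print(my_tuple)

# Indexing works as with lists and starts at 0
print(my_tuple[1])
```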

returns

(1, 2, 3, 4)

returns

2

What do you think is the difference here?

Python basics

Other types of data collections

Aside from lists and tuples, you also have dictionaries and other more complex data collection types (see the documentation).

A Python dictionary is a collection of key-value pairs:
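
For example (the variable name `person` is an assumption for illustration):

```python
person = {"first_name": "Jane", "last_name": "Doe", "city": "London"}
print(person)

city = person["city"]  # look up a value by its key
```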

returns:

{'first_name': 'Jane', 'last_name': 'Doe', 'city': 'London'}

Python basics

Some basic operations

- If you run:

you will get the type of your Python object.
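
For instance, assuming a dictionary called `my_dict` (an illustrative name) and `var = float(2.0)`:

```python
my_dict = {"first_name": "Jane", "last_name": "Doe", "city": "London"}
var = float(2.0)

print(type(my_dict))
print(type(var))
```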

The above returns:

<class 'dict'>

<class 'float'> # since var = float(2.0)

Python basics

Sometimes, we need to perform operations repeatedly

We have loops (for or while loops):

(Note that Python needs indentation and you absolutely can’t mix tabs and spaces!)
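
A minimal sketch of both loop types (the variable names are illustrative). The indented lines form the body of each loop:

```python
# for loop: runs once per item produced by range(3), i.e. 0, 1, 2
for i in range(3):
    print(i)

# while loop: runs for as long as the condition stays true
count = 0
while count < 3:
    print(count)
    count += 1  # without this line the loop would never end
```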

And you have list comprehensions (as well as dictionary comprehensions):
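
A sketch of one list comprehension and two dictionary comprehensions (the variable names and the `letters` dictionary are assumptions for illustration):

```python
# List comprehension: build a list in a single expression
doubled = [i * 2 for i in range(5)]

# Dictionary comprehension: map each number to its square
squares = {i: i**2 for i in range(10)}

# Dictionary comprehension with a condition: keep only even values
letters = {"a": 1, "b": 2, "c": 3, "d": 4}
evens = {key: value for key, value in letters.items() if value % 2 == 0}

print(squares)
print(evens)
```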

returns

{0: 0, 1: 1, 2: 4, 3: 9, 4: 16, 5: 25, 6: 36, 7: 49, 8: 64, 9: 81}

{'b': 2, 'd': 4}

Python basics

Custom functions definition

Let’s define functions based on the loops and list comprehension from before. We’ll do some code profiling!

```python
import cProfile

def for_loop_example():
    result = []  # build the list one append at a time
    for i in range(100000):
        result.append(i * 2)

def while_loop_example():
    result = []
    i = 0  # manual counter instead of range()
    while i < 100000:
        result.append(i * 2)
        i += 1

def list_comprehension_example():
    result = [i * 2 for i in range(100000)]  # same list in one expression

# Profile each function
print("Profiling for loop:")
cProfile.run("for_loop_example()")

print("\nProfiling while loop:")
cProfile.run("while_loop_example()")

print("\nProfiling list comprehension:")
cProfile.run("list_comprehension_example()")
```

Python basics

Results from the loops and list comprehension profiling

```text
Profiling for loop:
         100004 function calls in 0.022 seconds

   ncalls  tottime  percall  cumtime  percall filename:lineno(function)
        1    0.013    0.013    0.021    0.021 <python-input>:3(for_loop_example)
   100000    0.008    0.000    0.008    0.000 {method 'append' of 'list' objects}

Profiling while loop:
         100004 function calls in 0.021 seconds

   ncalls  tottime  percall  cumtime  percall filename:lineno(function)
        1    0.014    0.014    0.020    0.020 <python-input>:8(while_loop_example)
   100000    0.006    0.000    0.006    0.000 {method 'append' of 'list' objects}

Profiling list comprehension:
         4 function calls in 0.003 seconds

   ncalls  tottime  percall  cumtime  percall filename:lineno(function)
        1    0.002    0.002    0.002    0.002 <python-input>:15(list_comprehension_example)
```

Takeaway: List comprehensions are much faster here (~7x in this profile). Keep in mind that cProfile adds overhead to each of the 100,000 append calls, so the gap outside the profiler is smaller; timeit gives a fairer comparison.

pandas and scikit-learn (briefly)

- Python has a base set of functions and libraries that come with the installation (e.g. os, collections, math, etc. - see the Python documentation)

- The pandas, numpy and scikit-learn libraries are not part of the standard Python libraries, but they are very popular and actively maintained packages

- These packages contain most of the functionality needed to handle datasets, manipulate them (pandas mainly), perform statistical operations on them and apply machine learning models

- These are the libraries we will rely on most in this course

Note to R users

Think of pandas as what tidyverse is to R and, to some extent, of scikit-learn as what tidymodels (and perhaps caret) is to R.

A touch of pandas

Example: reading a csv file
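
A minimal sketch of reading a CSV with pandas. To keep it self-contained, the file contents are a made-up string wrapped in `io.StringIO`; with a real file you would pass its path to `pd.read_csv` instead.

```python
import io

import pandas as pd

# Made-up stand-in for a file on disk; with a real file you would write
# something like pd.read_csv("data/my_file.csv") (hypothetical path)
csv_text = "name,age,city\nAna,23,London\nBen,31,Leeds\n"

df = pd.read_csv(io.StringIO(csv_text))

print(df.head())   # first rows of the table
print(df.dtypes)   # the type pandas inferred for each column
```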

A touch of pandas

- In pandas, you write multiple functions in succession or use method chaining:

Without method chaining
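
A sketch of the same three steps written both ways, on a small made-up dataframe (the column names and values are assumptions for illustration):

```python
import pandas as pd

df = pd.DataFrame({"city": ["London", "Leeds", "London"], "score": [3, 5, 4]})

# Without method chaining: one intermediate variable per step
london = df[df["city"] == "London"]
london_sorted = london.sort_values("score", ascending=False)
result_steps = london_sorted.reset_index(drop=True)

# With method chaining: the same steps as a single expression
result_chained = (
    df.query("city == 'London'")
    .sort_values("score", ascending=False)
    .reset_index(drop=True)
)

print(result_chained)
```

Both versions give the same result; chaining avoids naming intermediate tables, while separate steps can be easier to inspect when debugging.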

A touch of pandas

Example: filtering rows

Filtering when the values are integers

Filtering when the values are strings
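
A sketch of both kinds of filter on a small made-up dataframe (names and values are illustrative). In each case a boolean condition inside the square brackets selects the rows where it is true:

```python
import pandas as pd

df = pd.DataFrame(
    {
        "name": ["Ana", "Ben", "Cleo"],
        "age": [23, 31, 27],
        "city": ["London", "Leeds", "London"],
    }
)

# Filtering when the values are integers
over_25 = df[df["age"] > 25]

# Filtering when the values are strings
londoners = df[df["city"] == "London"]

# Matching any of several strings
in_either_city = df[df["city"].isin(["London", "Leeds"])]

print(over_25)
print(londoners)
```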

Example: concatenating dataframes

Say we have two random datasets:

If we want to concatenate vertically:

which returns
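
A minimal sketch of the whole example, with two small random dataframes (the column names, sizes and seed are assumptions for illustration):

```python
import numpy as np
import pandas as pd

rng = np.random.default_rng(seed=42)  # fixed seed so the numbers are repeatable

# Two random datasets with the same columns
df1 = pd.DataFrame(rng.integers(0, 10, size=(3, 2)), columns=["a", "b"])
df2 = pd.DataFrame(rng.integers(0, 10, size=(2, 2)), columns=["a", "b"])

# Vertical concatenation: the rows of df2 are stacked under the rows of df1.
# ignore_index=True renumbers the rows 0..4 instead of repeating 0, 1.
stacked = pd.concat([df1, df2], ignore_index=True)

print(stacked)
```

The result has five rows and the same two columns as the inputs.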

Quick Hands-on - Real Data

Let’s load some real economic data:

Try it yourself:

- What patterns do you see?

- When did inflation spike?

- What might explain those spikes?

This connects immediately to the BoE example and gets you coding!

Coming Up

Next Week’s Lab:

- Hands-on with pandas

- Data cleaning exercises

- Prep work: Check Moodle by Wednesday

Resources:

- Former DS105 students: Check ME204 for more pandas practice

- New to Python: Budget extra time for basics, use office hours/Digital Skills Lab

Office Hours: Check Moodle for schedule

LSE DS202A (2025/26) – Week 01